Unsupervised Learning Fundamentals

Master pattern discovery without labels - from clustering to anomaly detection

Your streaming service knows your taste in movies better than most of your friends - not because someone studied you, but because a machine found patterns in your behavior it was never told to look for. That is unsupervised learning: algorithms that discover hidden structure in raw data with no labels, no guidance, and no predefined answers. It is how machines uncover what humans never thought to ask about.

What Makes This Tutorial Different

Unsupervised learning is the future of AI. Most of human and animal learning is unsupervised - we learn how the world works by observing it, not by being told what everything is. The real intelligence breakthrough will come when we crack unsupervised learning at scale. That's where the magic happens.

What is Unsupervised Learning?

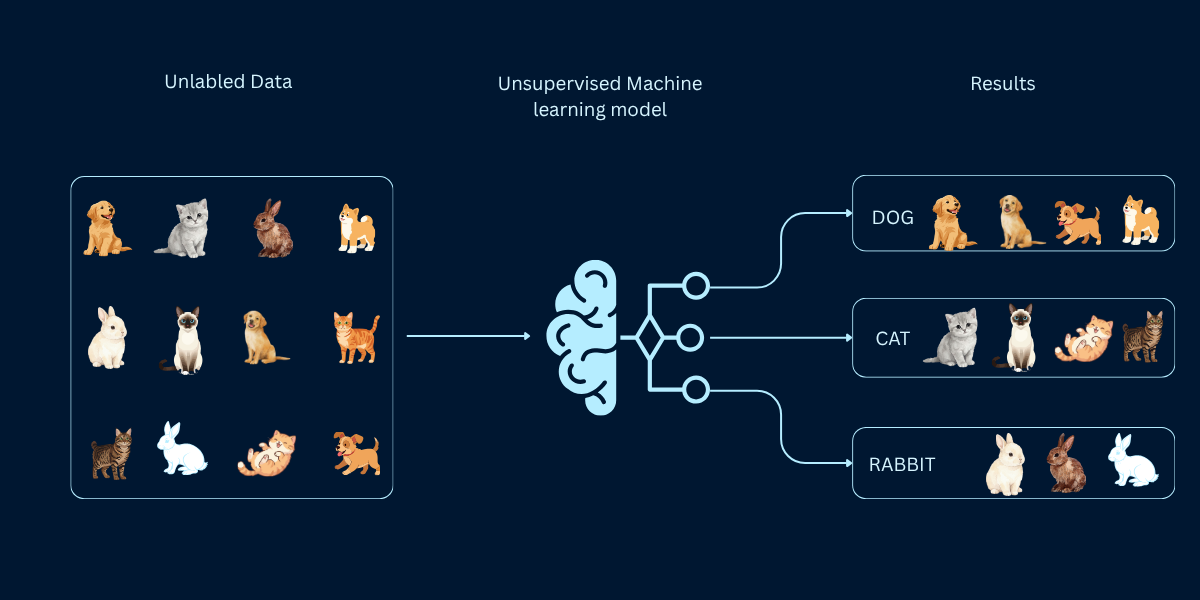

Imagine organizing thousands of photos on your computer without creating any folders or labels first. You just look at the images and naturally group similar ones together: vacation photos here, family gatherings there, pet pictures in another pile. That's essentially what unsupervised learning does—it finds patterns and groups in data without being told what categories to look for.

Unsupervised learning is fundamentally different from supervised learning. There are no "correct answers" provided during training. Instead, the algorithm explores the data on its own, discovering hidden structures, natural groupings, unusual patterns, and relationships that might not be obvious to human observers. This makes it both challenging and incredibly valuable for discovering insights you didn't even know existed.

How Unsupervised Learning Works

Unsupervised learning follows a fundamentally different process than supervised learning. Instead of learning from labeled examples, the algorithm explores the data to find patterns, structures, and relationships on its own. Here's the four-step process:

- Data Collection: Gather raw, unlabeled data without any predefined categories or target variables. For example: customer purchase histories, website clickstreams, or sensor readings.

- Pattern Discovery: The algorithm analyzes the data to identify hidden structures. This could be natural groupings (clustering), unusual data points (anomaly detection), essential features (dimensionality reduction), or item relationships (association rules).

- Result Interpretation: The discovered patterns must be interpreted by domain experts to determine business relevance. Unlike supervised learning where accuracy is clear, unsupervised results require human validation and understanding.

- Insight Application: Apply the discovered patterns to make decisions, generate recommendations, detect anomalies, or simplify data for further analysis.

The critical difference from supervised learning: there are no ground-truth labels to check the patterns against. Internal metrics such as the silhouette score can measure how well separated the groups are, but not whether they are meaningful. Success depends on combining algorithmic pattern discovery with human domain expertise to interpret and act on the findings.

Unsupervised vs Supervised Learning: The Core Distinction

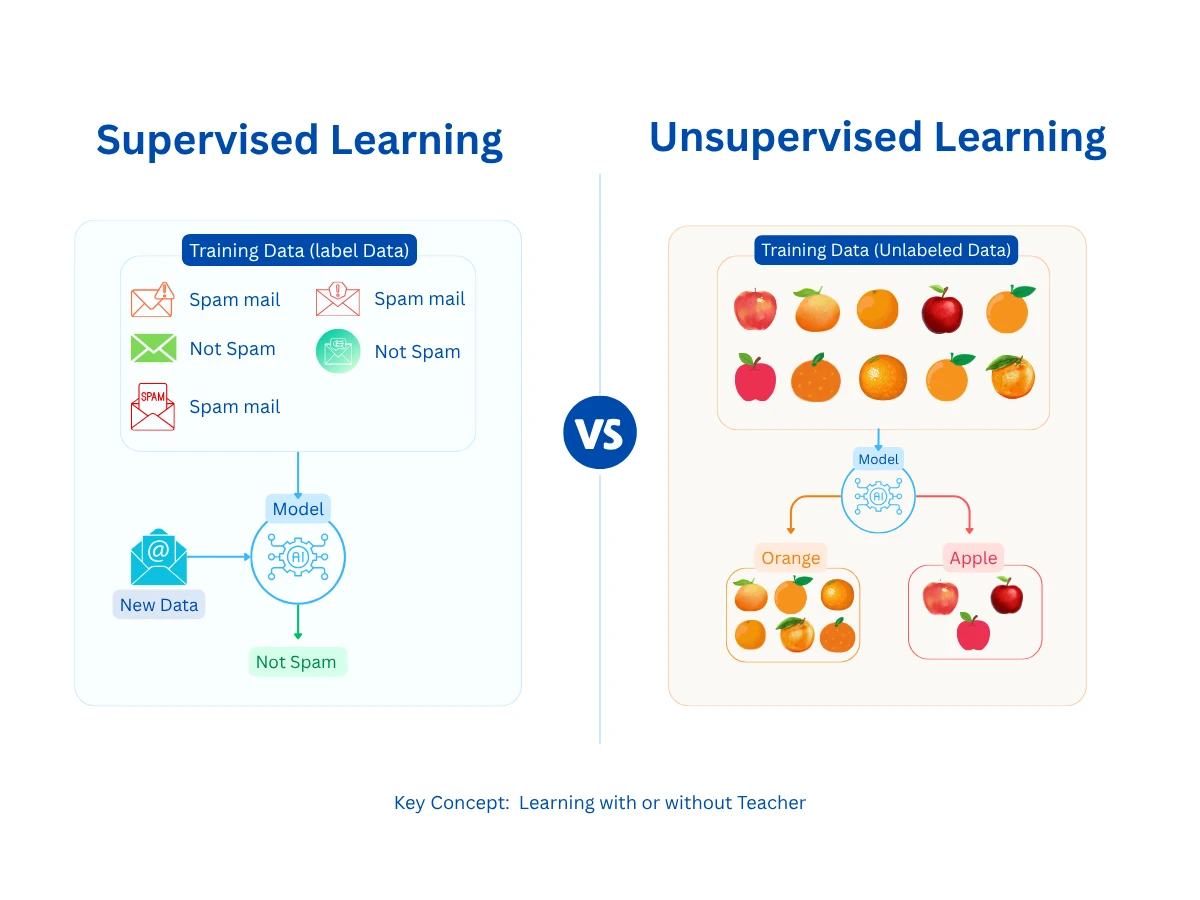

The fundamental difference between supervised and unsupervised learning lies in the training data. Supervised learning requires labeled examples with known outcomes (inputs and outputs), while unsupervised learning works with raw data to discover patterns without guidance.

| Aspect | Supervised Learning | Unsupervised Learning |
|---|---|---|
| Training Data | Labeled examples with known outcomes | Unlabeled data without predefined categories |
| Goal | Predict outcomes for new data | Discover hidden patterns and structures |
| Learning Process | Learn from correct answers (supervision) | Explore data independently (no supervision) |
| Validation | Compare predictions to actual outcomes | Domain expert interpretation required |
| Common Uses | Spam detection, price prediction, diagnosis | Customer segmentation, fraud detection, data compression |
| Example Question | Is this email spam? (Yes/No) | What natural customer groups exist? (Unknown) |
| Success Metric | Prediction accuracy (clear measure) | Business value of discovered insights (subjective) |

When to Use Which Approach

Unsupervised Learning

Unsupervised learning is like walking into a library where all the books are piled together with no shelves or signs. You start grouping them naturally: these look like novels, these feel like science books. No one told you the categories. You found them yourself.

Complete Breakdown

Discovering hidden patterns and structures in unlabeled data without predefined categories or guidance

How It Works

- Algorithm receives data without any labels or answers

- Discovers natural groupings, patterns, or anomalies in the data

- Identifies relationships humans might not have noticed

- Groups similar data points or detects unusual outliers


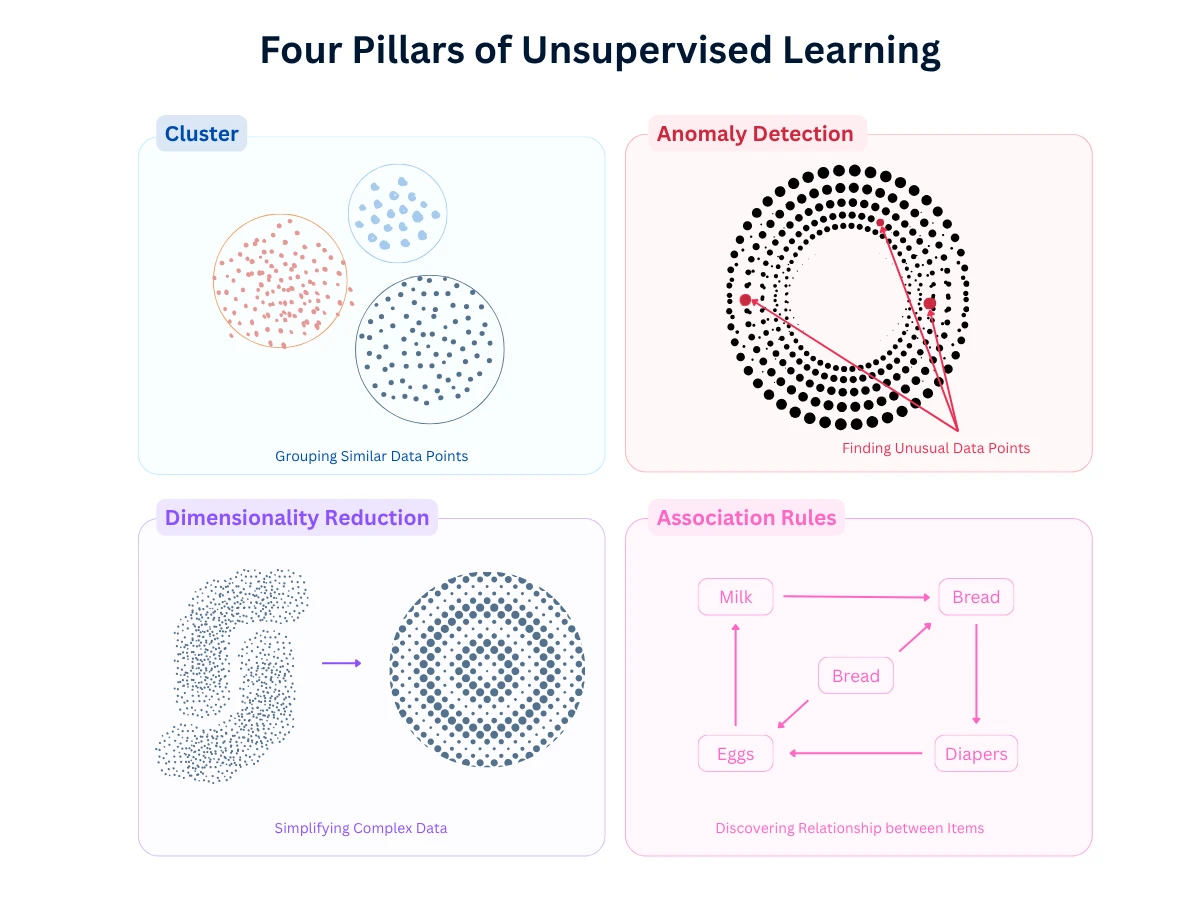

The Four Core Approaches of Unsupervised Learning

Unsupervised learning encompasses four main categories, each solving different types of problems. Understanding when to use each approach is critical for successful implementation.

| Learning Category | Business Question Answered | Strategic Value | Implementation Complexity | Commonly Cited Examples |
|---|---|---|---|---|
| Clustering | What natural groups exist in our data? | Customer segmentation, market analysis | Medium | Amazon customer personas, Spotify music clustering |
| Anomaly Detection | What's unusual or unexpected in our data? | Fraud prevention, system monitoring | High | PayPal fraud detection, Netflix content monitoring |
| Dimensionality Reduction | What are the essential features in complex data? | Data visualization, feature engineering | High | Google search ranking, Facebook news feed optimization |
| Association Mining | What items frequently occur together? | Recommendation systems, cross-selling | Medium | Amazon 'Customers who bought', Netflix content recommendations |


When to Use Unsupervised Learning: Problem Suitability Framework

Not every problem is suitable for unsupervised learning. The critical requirement is having a clear goal for pattern discovery and the domain expertise to interpret results. Here's how to determine which approach fits your problem:

| Your Problem | Recommended Approach | Why It Works | Example Application |
|---|---|---|---|
| Find natural customer groups | Clustering | Discovers segments without predefined categories | Amazon customer personas, market segmentation |
| Detect unusual transactions | Anomaly Detection | Identifies outliers that deviate from normal patterns | PayPal fraud detection, system monitoring |
| Simplify complex data | Dimensionality Reduction | Reduces features while preserving information | Netflix user preference compression, data visualization |
| Find item relationships | Association Rules | Discovers which items frequently occur together | Amazon recommendations, market basket analysis |
| Explore unknown patterns | Clustering + Visualization | Reveals structures you didn't know to look for | Discovering new customer behaviors, trend analysis |
| Prepare data for other models | Dimensionality Reduction | Removes noise and redundant features | Feature engineering for supervised learning |


Clustering: Discovering Natural Groups in Data

Clustering is the most common unsupervised learning approach. It automatically groups similar data points together without being told what the categories should be. Think of it as organizing your closet by grouping similar items together—shoes with shoes, shirts with shirts—but without any labels telling you what goes where.

In business applications, clustering discovers customer segments, product categories, or market opportunities that weren't obvious from traditional analysis. Commonly cited examples include Amazon grouping customers by behavior patterns, Spotify grouping songs to build personalized playlists, and Netflix organizing its catalog into thousands of content micro-genres that feed its recommendation engine.

Choosing the right clustering algorithm depends on your business need, data characteristics, and scale requirements. The main algorithm families are:

- K-Means: partition-based

- Hierarchical Clustering: hierarchy-based

- DBSCAN: density-based

- Gaussian Mixture: probabilistic

- Spectral Clustering: graph-based

| Algorithm | Best For | Strengths | Limitations |
|---|---|---|---|
| K-Means | Customer segmentation, market analysis | Fast, scalable, easy to interpret | Need to specify number of clusters, assumes spherical clusters |
| Hierarchical | Taxonomy creation, nested groups | Shows relationships between clusters, no need to specify k | Doesn't scale well, sensitive to noise |
| DBSCAN | Anomaly detection, irregular shapes | Finds clusters of any shape, identifies outliers automatically | Sensitive to parameters, struggles with varying densities |
| Gaussian Mixture | Soft segmentation, overlapping groups | Probabilistic cluster assignment | Requires specifying number of clusters, computationally expensive |
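
To make the K-Means row concrete, here is a minimal sketch of the assign-then-update loop (Lloyd's algorithm) using only the Python standard library. The `kmeans` function, its parameters, and the `customers` data are hypothetical and exist only for illustration. A production system would more likely use an established library and scale the features first.

```python
import math
import random


def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's algorithm: returns (centroids, labels) for a list of numeric tuples."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen data points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members))
                )
            else:
                new_centroids.append(centroids[c])  # empty cluster: keep old centroid
        if new_centroids == centroids:
            break  # converged: centroids stopped moving
        centroids = new_centroids
    return centroids, labels


# Hypothetical customers: (orders per month, average basket value in dollars)
customers = [(1, 20), (2, 25), (1, 22), (9, 200), (10, 210), (8, 190)]
centroids, labels = kmeans(customers, k=2)
print(labels)  # the first three customers share one label, the last three the other
```

Which group receives label 0 or 1 depends on the random initialisation, so the numeric labels themselves carry no meaning. Only the grouping does.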


Anomaly Detection: Finding Unusual Patterns and Outliers

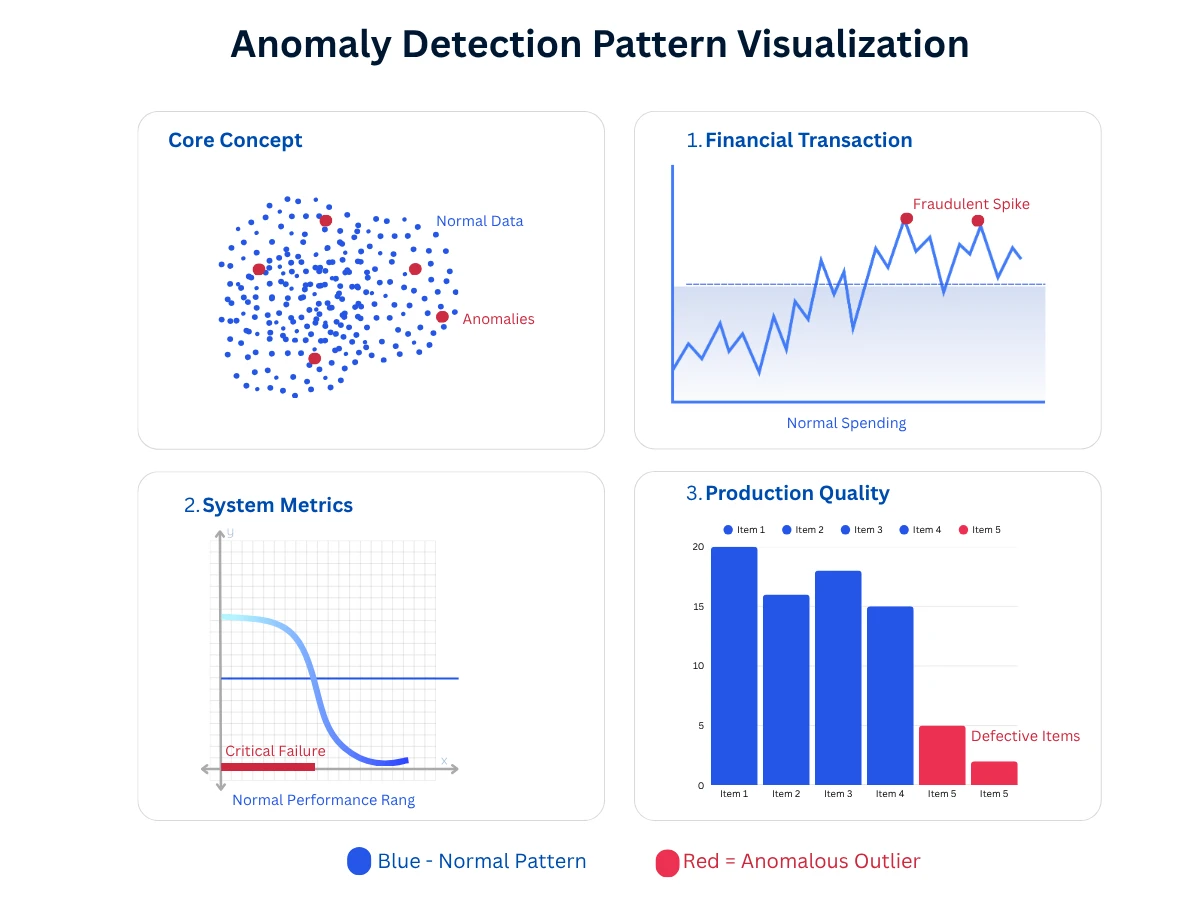

Anomaly detection (also called outlier detection) identifies data points that deviate significantly from normal patterns. It's like a security guard who knows what "normal" looks like and alerts you when something unusual happens—whether that's fraud, system failures, or quality defects.

In practice, anomaly detection powers fraud prevention (PayPal blocking suspicious transactions), system monitoring (Netflix detecting streaming issues), quality control (manufacturing defect detection), and even opportunity discovery (identifying unusual customer behaviors that signal high value).

| Method | Best For | How It Works | Example Use |
|---|---|---|---|
| Statistical Methods | Simple outlier detection | Flags points far from the mean of the data (e.g., more than 3 standard deviations away) | Fraud detection, quality control |
| Isolation Forest | High-dimensional data | Isolates anomalies by random splitting (outliers are easier to isolate) | System monitoring, log analysis |
| One-Class SVM | Learning normal behavior | Learns boundary around normal data, flags points outside | Equipment failure prediction |
| Autoencoders | Complex pattern anomalies | Neural network learns to reconstruct normal data, fails on anomalies | Cybersecurity threat detection |
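
The statistical method in the first row can be sketched in a few lines. This illustrative example flags values more than three standard deviations from the mean. The `zscore_outliers` function and the transaction amounts are assumptions made up for the demonstration, not data from a real system.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Return (index, value) pairs more than `threshold` standard deviations from the mean."""
    mean = statistics.fmean(values)
    stdev = statistics.pstdev(values)  # population standard deviation
    if stdev == 0:
        return []  # all values identical: nothing can be an outlier
    return [
        (i, v) for i, v in enumerate(values)
        if abs(v - mean) / stdev > threshold
    ]


# Hypothetical transaction amounts in dollars; the last one is deliberately extreme.
amounts = [42, 38, 45, 40, 39, 44, 41, 43, 37, 46,
           40, 42, 39, 44, 41, 43, 38, 45, 40, 42, 950]
print(zscore_outliers(amounts))  # → [(20, 950)]
```

One caveat: extreme values inflate the mean and standard deviation they are measured against, so on small samples a true outlier may never cross the threshold. Robust variants based on the median are a common alternative.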


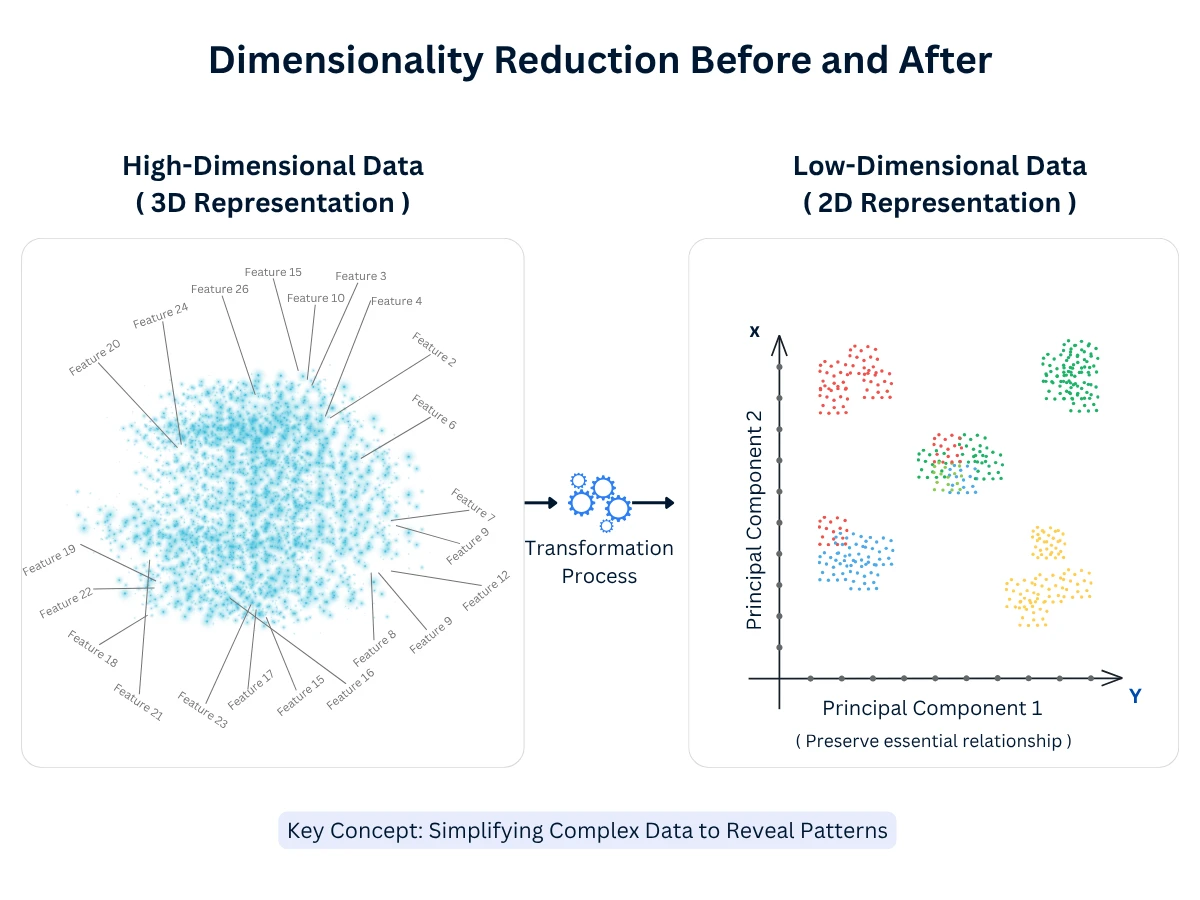

Dimensionality Reduction: Simplifying Complex Data

Dimensionality reduction transforms high-dimensional data (hundreds or thousands of features) into lower-dimensional representations (often 2D or 3D for visualization, or a smaller feature set for modeling) while preserving the essential information. Think of it as creating a simplified map from complex terrain: you lose some detail but keep the important relationships.

This technique enables visualization of complex data (plot 100-dimensional customer data in 2D), reduces computational costs (faster processing with fewer features), removes noise (eliminate redundant or irrelevant features), and improves other machine learning models (better input features lead to better predictions).

| Technique | Best For | How It Works | Typical Use Case |
|---|---|---|---|
| PCA (Principal Component Analysis) | Data preprocessing, visualization | Finds directions of maximum variance in data | Reducing features before modeling, data compression |
| t-SNE | Visualization of clusters | Preserves local structure, emphasizes clusters | Visualizing customer segments, exploring patterns |
| UMAP | Fast visualization, large datasets | Preserves both local and global structure | Interactive data exploration, embedding visualization |
| Autoencoders | Non-linear reduction, feature learning | Neural network learns compressed representation | Image compression, anomaly detection preprocessing |
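
As a rough illustration of how PCA "finds directions of maximum variance", the sketch below approximates the first principal component with power iteration on the covariance matrix, then projects each row onto it. Power iteration is used here only because it fits in plain Python. Library implementations typically rely on a singular value decomposition instead. The function names and the `readings` data are hypothetical.

```python
import math
import random


def first_principal_component(rows, iterations=200, seed=0):
    """Approximate the direction of maximum variance via power iteration."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d).
    cov = [
        [sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
        for a in range(d)
    ]
    rng = random.Random(seed)
    v = [rng.uniform(-1.0, 1.0) for _ in range(d)]  # random starting direction
    for _ in range(iterations):
        # Multiply by the covariance matrix, then renormalise to unit length.
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break  # covariance maps v to zero (e.g. constant data): stop
        v = [x / norm for x in w]
    return means, v


def project(rows, means, component):
    """Reduce each row to one coordinate along the component."""
    return [
        sum((r[j] - means[j]) * component[j] for j in range(len(means)))
        for r in rows
    ]


# Hypothetical 2-feature readings that lie close to the line y = 2x.
readings = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8), (5.0, 10.1)]
means, component = first_principal_component(readings)
reduced = project(readings, means, component)
print(component)  # roughly parallel to (1, 2); the sign is arbitrary
print(reduced)    # one number per row instead of two
```

Because the two features are almost perfectly correlated, a single coordinate keeps nearly all of the variance. That is the sense in which detail is lost but the important relationships remain.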


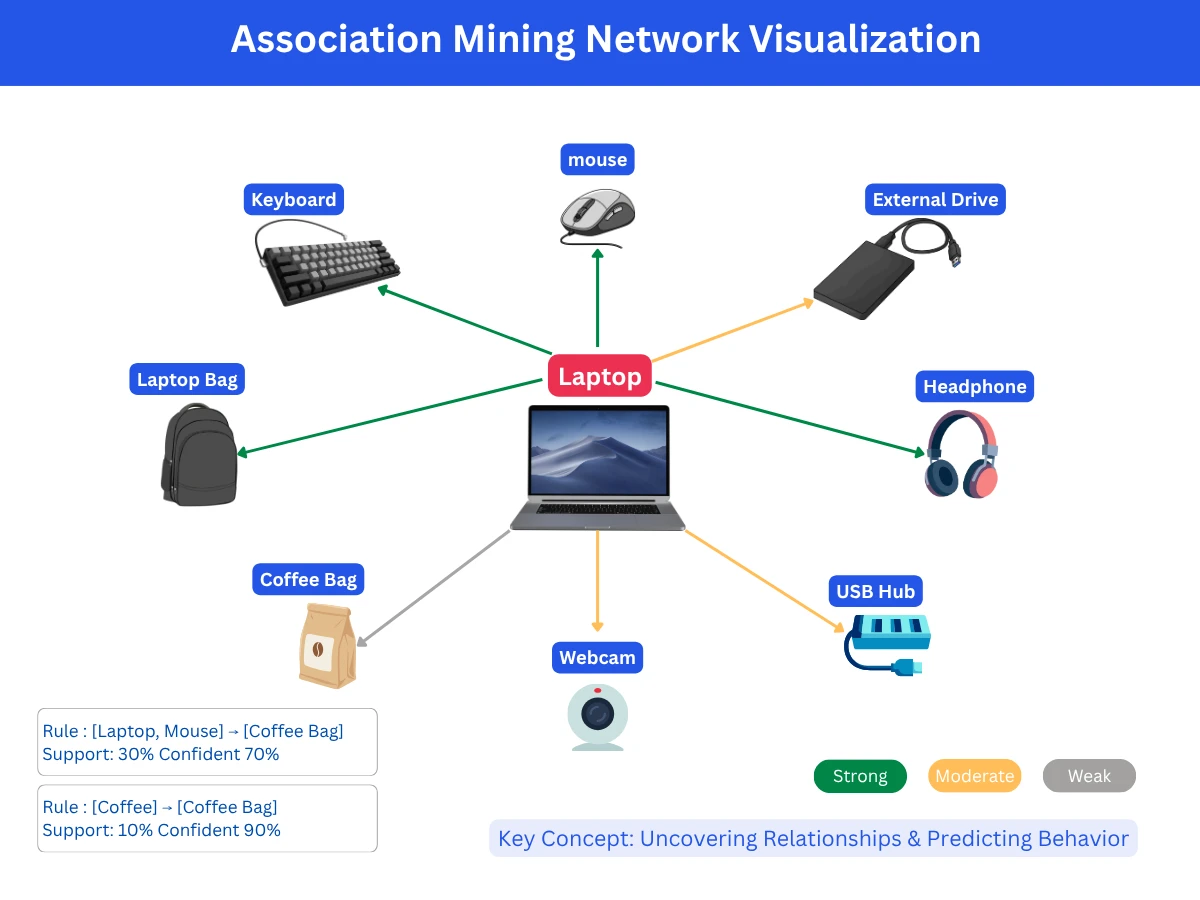

Association Mining: Discovering Item Relationships

Association rule mining (also called market basket analysis) discovers relationships between items or events in transaction data. The classic example is "customers who bought bread also bought butter," but modern applications go far beyond simple product recommendations to reveal complex patterns in customer behavior, content relationships, and sequential patterns.

Amazon's "Frequently bought together" feature uses association mining. Netflix discovers content relationships to improve recommendations. Grocery stores use it for store layout optimization. The key insight: these relationships emerge from the data itself, revealing patterns that might not be obvious from business intuition alone.

| Application Type | What It Discovers | Common Algorithms | Example Use |
|---|---|---|---|
| Market Basket Analysis | Items frequently bought together | Apriori, FP-Growth | Product recommendations, store layout optimization |
| Sequential Pattern Mining | Temporal order of events/purchases | GSP, PrefixSpan | Customer journey analysis, next-purchase prediction |
| Collaborative Filtering | User-item preference patterns | User-based CF, Item-based CF | Content recommendations (Netflix, Spotify) |
| Web Usage Mining | Page visit patterns and click sequences | Web path analysis | Website navigation optimization, personalization |
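
The "bread and butter" idea can be made concrete by scoring item pairs with support, confidence, and lift, the usual measures for association rules. The sketch below counts every pair by brute force. It is not Apriori or FP-Growth, which exist to avoid that exhaustive counting on large catalogs. The `pair_rules` function and the baskets are hypothetical.

```python
from collections import Counter
from itertools import combinations


def pair_rules(transactions, min_support=0.4):
    """Score ordered item pairs (lhs -> rhs) by support, confidence, and lift."""
    n = len(transactions)
    baskets = [set(t) for t in transactions]
    item_counts = Counter(item for b in baskets for item in b)
    pair_counts = Counter(
        pair for b in baskets for pair in combinations(sorted(b), 2)
    )
    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n  # share of baskets containing both items
        if support < min_support:
            continue
        for lhs, rhs in ((a, b), (b, a)):
            confidence = count / item_counts[lhs]       # P(rhs | lhs)
            lift = confidence / (item_counts[rhs] / n)  # > 1: bought together more than chance
            rules.append((lhs, rhs, support, confidence, lift))
    return sorted(rules, key=lambda r: r[4], reverse=True)


# Hypothetical grocery baskets.
baskets = [
    ["bread", "butter", "milk"],
    ["bread", "butter"],
    ["bread", "jam"],
    ["milk", "eggs"],
    ["bread", "butter", "eggs"],
]
for lhs, rhs, support, confidence, lift in pair_rules(baskets):
    print(f"{lhs} -> {rhs}: support={support:.2f} confidence={confidence:.2f} lift={lift:.2f}")
```

In these baskets only the bread and butter pair clears the 40% support threshold. Every butter buyer also bought bread (confidence 1.0), while three of the four bread buyers bought butter (confidence 0.75).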


Common Pitfalls: Top Mistakes That Derail Projects

Analysis of unsupervised learning projects reveals consistent failure patterns. Here are the top mistakes and how to avoid them:

- Lack of Domain Expertise: Discovering technically valid patterns that have no business meaning. SOLUTION: Always involve domain experts in pattern interpretation and validation.

- Poor Data Quality: Garbage in, patterns out - discovering patterns in noise or biased data. SOLUTION: Establish data quality standards (>95% completeness, representative samples).

- Wrong Evaluation Mindset: Expecting objective accuracy scores like supervised learning. SOLUTION: Define business-relevant success criteria before starting (customer engagement, fraud reduction, etc.).

- Ignoring Temporal Changes: Patterns discovered today may not hold tomorrow - customer behavior shifts, markets evolve. SOLUTION: Monitor pattern stability and retrain models regularly.

- Spurious Correlations: Finding coincidental relationships that don't generalize. SOLUTION: Validate patterns across different time periods and datasets before acting.

- Scale Underestimation: Algorithms that work on samples fail on full production data. SOLUTION: Test at production scale before deployment.


Your Unsupervised Learning Journey: What's Next

Now that you understand unsupervised learning fundamentals, you're ready to dive deeper into specific techniques. Here's the recommended learning path through our unsupervised learning section:

- Unsupervised Learning Fundamentals (this page, 16 min): Core concepts, four approaches, when to use each, data quality requirements

- Clustering Methods (15 min): K-means, hierarchical, DBSCAN, Gaussian mixture models, algorithm selection, evaluation metrics

- Dimensionality Reduction (15 min): PCA, t-SNE, UMAP, autoencoders, choosing dimensions, visualization techniques

- Association Rules (12 min): Market basket analysis, Apriori algorithm, support and confidence, recommendation systems

Supporting topics covered in other sections include data preparation (feature scaling, normalization), evaluation strategies (silhouette scores, elbow method), and model deployment. The complete unsupervised learning journey takes about 60 minutes and provides production-ready knowledge.

Frequently Asked Questions

01 What's the difference between supervised and unsupervised learning?

Supervised learning uses labeled data (input-output pairs) to predict outcomes. Unsupervised learning works with unlabeled data to discover hidden patterns. Use supervised when you know what you want to predict; use unsupervised when you want to discover unknown structures or groupings in your data.

02 Which clustering algorithm should I use?

Start with K-means if you can estimate the number of clusters (fast, scalable, interpretable). Use hierarchical clustering when you want to see relationships between groups (good for small datasets). Choose DBSCAN for irregular shapes or when you need automatic outlier detection. Gaussian mixture models work well when clusters overlap.

03 How do I validate unsupervised learning results?

Unlike supervised learning, there's no automatic accuracy metric. Combine technical metrics (silhouette score, inertia) with business validation: Do the discovered clusters make sense? Can domain experts interpret them? Do they lead to actionable insights? Always validate with business experts, not just data scientists.
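
For reference, the silhouette score mentioned above compares each point's average distance to its own cluster (a) with its average distance to the nearest other cluster (b), giving (b - a) / max(a, b). A minimal standard-library sketch follows. The `silhouette_score` function here is an illustrative reimplementation, and the data and label assignments are made up.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (ranges from -1 to 1)."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    scores = []
    for p, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # convention: singleton clusters score 0
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # b: mean distance to the members of the nearest other cluster
        b = min(
            sum(math.dist(p, q) for q in members) / len(members)
            for other, members in clusters.items()
            if other != label
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


# Hypothetical customers: (orders per month, average basket value in dollars)
customers = [(1, 20), (2, 25), (1, 22), (9, 200), (10, 210), (8, 190)]
sensible = silhouette_score(customers, [0, 0, 0, 1, 1, 1])
arbitrary = silhouette_score(customers, [0, 1, 0, 1, 0, 1])
print(sensible, arbitrary)  # the sensible grouping scores much closer to 1
```

A high score only says the groups are compact and well separated. Whether they mean anything to the business is still a question for domain experts.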

04 How much data do I need for unsupervised learning?

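As a rough rule of thumb, plan on at least 100-1,000 data points for basic clustering and 10,000+ for production systems, though the real requirement depends on how many features you have and how distinct the patterns are. The key is having enough examples to reveal meaningful patterns. Quality matters more than quantity - 1,000 high-quality, representative samples beat 100,000 biased or noisy ones.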

05 Is unsupervised learning harder than supervised learning?

Technically, algorithms can be simpler. The challenge is interpretation - there's no right answer to validate against. You need strong domain expertise to determine if discovered patterns are meaningful or spurious. Success depends more on business understanding than algorithm sophistication.

06 Can I use unsupervised learning with labeled data?

Yes! Many successful applications combine both. Use unsupervised learning to discover patterns first (clustering, dimensionality reduction), then apply supervised learning to those discovered patterns. This hybrid approach often outperforms using either alone.

07 What are the main business applications of unsupervised learning?

Customer segmentation (finding natural customer groups), fraud detection (identifying unusual patterns), recommendation systems (discovering item relationships), data compression (reducing features while preserving information), and exploratory analysis (understanding data structure before modeling).

08 How long does it take to implement unsupervised learning?

Typical timeline: 8-16 weeks. Data exploration and quality assessment (2-4 weeks), algorithm experimentation (2-3 weeks), pattern validation with domain experts (2-6 weeks), production deployment (2-3 weeks). Simple exploratory projects can be faster; strategic business applications take longer due to validation requirements.

Ready to Dive Deeper?

You now understand unsupervised learning fundamentals and the four core approaches. Your next step is exploring specific techniques in depth based on your problem type.

Your Next Step

Unsupervised learning is not about finding patterns in data—it's about discovering opportunities in reality. The companies that master pattern discovery without supervision will discover competitive advantages that supervised learning can never provide.