History & Evolution of AI: From Turing to ChatGPT

The remarkable 75-year journey from theoretical concepts to revolutionary AI systems transforming our world

ChatGPT launched in November 2022 and reached an estimated 100 million users within about two months, at the time the fastest adoption ever recorded for a consumer application. Yet this "overnight success" took roughly 75 years to build. The story of AI is one of brilliant minds, crushing failures, unexpected breakthroughs, and the gradual realization of one of humanity's oldest dreams: creating thinking machines.

The Ancient Dreams: AI's Philosophical Roots

AI didn't begin with computers—it began with ancient philosophers asking profound questions about the nature of mind and intelligence. Long before silicon chips, humans dreamed of creating artificial beings that could think, reason, and perhaps even feel.

The question is not whether machines can think, but whether men do.

In ancient Greek myth, the god Hephaestus crafted golden servants that could think and speak. In Jewish folklore, rabbis created golems from clay and brought them to life with sacred words. These weren't just stories: they were some of humanity's first attempts to imagine artificial intelligence.

These ancient dreams found new life in the 20th century. In 1921, Czech playwright Karel Čapek gave the world a word that would define an era: "robot." His play R.U.R. imagined artificial workers that could think and eventually rebel - themes that still shape our conversations about AI today.

The real philosophical foundation came from three key thinkers most AI histories ignore:

- Aristotle (384-322 BCE): Created the first formal system of logic, a foundation for the symbolic reasoning used in early AI

- Ramon Llull (1232-1315): Designed paper-based devices for combining concepts mechanically, some 700 years before computers

- George Boole (1815-1864): Invented Boolean algebra—the mathematical language computers still use today

The Founding Era (1950-1960): Brilliant Minds, Bold Promises

These philosophical foundations didn't just stay in books. By the 1940s, researchers began translating ancient ideas about logic and reasoning into something new: mathematical models that could actually run on machines. The dream of artificial minds was about to become an engineering project.

In 1943, neuropsychiatrist Warren McCulloch and mathematician Walter Pitts took the first concrete step. They created a mathematical model of how neurons might work - not in flesh, but in circuits.
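To make the idea concrete, here is a minimal sketch of a McCulloch-Pitts-style unit in Python. It is a modern simplification rather than the notation of the 1943 paper, and the function names, weights, and thresholds are illustrative assumptions. Each unit adds up its binary inputs and "fires" when the sum reaches a threshold, which is already enough to build basic logic gates.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def logical_not(a):
    # An inhibitory (negative) weight lets a single unit compute NOT.
    return mp_neuron([a], [-1], threshold=0)
```

Chaining units like these gives circuits that can compute any Boolean function, which is roughly why the model drew so much attention.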

Then came the question that would define the field. In 1950, mathematician Alan Turing published a paper that didn't ask whether machines could calculate - that was obvious. Instead, he asked something far more provocative: "Can machines think?" His paper "Computing Machinery and Intelligence" launched a revolution, but Turing wasn't working alone.

To answer his own question, Turing proposed a clever test: if a human couldn't tell whether they were conversing with a machine or another person, the machine could be considered intelligent. This "Imitation Game" became the benchmark that AI researchers would chase for decades.

While Turing gets most of the credit, the early AI movement was built by a diverse group of pioneers - many of whom history has overlooked.

| Pioneer | Contribution |
|---|---|
| Ada Lovelace (1815-1852) | Often called the first computer programmer; foresaw machines working with more than numbers |
| Claude Shannon (1916-2001) | Information theory, a foundation of AI |
| Norbert Wiener (1894-1964) | Cybernetics - feedback loops in intelligent systems |
| Warren McCulloch (1898-1969) | First mathematical model of neural networks, with Walter Pitts (1943) |
| Marvin Minsky (1927-2016) | Co-founded MIT AI Lab, neural networks |

The term "artificial intelligence" comes from John McCarthy's 1955 proposal for what became the famous 1956 Dartmouth workshop. The organizers were confident: the proposal argued that a carefully selected group of scientists could make a significant advance in a single summer, and it requested just $13,500 for the 2-month workshop. Adjusted for inflation, that is roughly $150,000 today, a tiny sum next to the computing budgets of modern AI companies.

That summer workshop became the most important meeting in AI history. For two months, the brightest minds in computing gathered to define a new field and chart its future.

The optimism at Dartmouth wasn't unfounded. That same year, Allen Newell and Herbert Simon demonstrated Logic Theorist - the first program that could actually prove mathematical theorems. It seemed like thinking machines were just around the corner.

A decade later, Joseph Weizenbaum created ELIZA - a simple chatbot that mimicked a psychotherapist. Users knew it was just a program, yet many found themselves opening up to it emotionally. ELIZA revealed something unexpected: humans desperately wanted to believe machines could understand them.
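The trick behind this kind of chatbot is simpler than its effect on users suggests. The sketch below is a toy in the spirit of ELIZA, not Weizenbaum's original script: the rules, the reflection table, and the function names are all invented for illustration. It matches a keyword pattern, swaps first-person words for second-person ones, and drops the result into a canned template.

```python
import re

# Hypothetical rules: (pattern, response template). Not Weizenbaum's script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"my": "your", "i": "you", "me": "you", "am": "are"}


def reflect(fragment):
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(message):
    # The first matching rule wins; there is no understanding, only substitution.
    for pattern, template in RULES:
        match = pattern.search(message)
        if match:
            return template.format(reflect(match.group(1)))
    return "Please go on."


print(respond("I feel ignored by my boss."))
```

Anything outside the rule list falls through to the generic "Please go on.", which hints at how shallow the apparent understanding is.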

The founding era had delivered real achievements and genuine excitement. Researchers had proven that machines could reason, play games, and even simulate conversation. But they had also made promises that would soon come back to haunt them.

The First AI Winter (1970s): When Reality Struck

The optimism of the 1960s crashed hard against the limits of 1970s technology. The core problem was simple: computers were too slow and memory was too expensive. AI programs that worked on small demonstrations failed completely when applied to real-world problems. The "combinatorial explosion" - where complexity grows exponentially - meant that scaling up from toy examples to practical applications was impossible with existing hardware.
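A little arithmetic shows why the explosion was so punishing. The sketch below assumes a search that must consider every move sequence to a given depth, and it uses 35 as a rough, commonly quoted average number of legal moves in a chess position (an assumption, not a figure from this article).

```python
def positions_to_examine(branching_factor, depth):
    """Leaf positions in a full game tree: branching_factor ** depth."""
    return branching_factor ** depth


# 35 is a rough, commonly quoted average for legal chess moves per position (assumption).
for depth in (2, 4, 6, 8):
    print(f"{depth} moves ahead: {positions_to_examine(35, depth):,} positions")
```

Each extra pair of moves multiplies the work by more than a thousand, so no modest hardware upgrade could close the gap.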

The failures were public and embarrassing. Computer translation produced badly wrong results; in one often-repeated, possibly apocryphal example, "The spirit is willing but the flesh is weak" came back as "The vodka is good but the meat is rotten." Chess programs lost to human amateurs. Speech recognition couldn't handle background noise. Funding agencies that had been promised thinking machines within a decade saw too little progress, and they had had enough.

But the winter had an unexpected benefit: it forced researchers to become more rigorous. The survivors developed the mathematical foundations that would later power the AI renaissance.

The Expert Systems Gold Rush (1980s): Japan's AI Gamble

While America recovered from the AI winter, Japan made a stunning announcement: it would spend hundreds of millions of dollars (reported figures range from about $400 million, roughly 54 billion yen, to as much as $850 million) to build "Fifth Generation" computers that would leapfrog American technology using AI. The world panicked, and AI was suddenly hot again.

This time, AI had a killer application: expert systems. These programs captured human expertise as rules and could solve specific problems reliably. The breakthrough example was XCON at Digital Equipment Corporation. It configured computer orders - a task that previously required expensive human experts and often resulted in costly errors. XCON worked because the problem was narrow and well-defined: it only needed to know about computer components, not the entire world.
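As a rough sketch of how rule-based systems of this kind work, the Python below applies if-then rules to a set of known facts until nothing new can be concluded (a technique usually called forward chaining). The rules and fact names are hypothetical and far simpler than anything in XCON, which is not reproduced here.

```python
# Each rule: (facts that must all hold, fact to conclude). Hypothetical rules, not XCON's.
RULES = [
    ({"cpu_cabinet", "disk_drive"}, "needs_disk_controller"),
    ({"needs_disk_controller"}, "needs_extra_power_supply"),
    ({"tape_drive"}, "needs_tape_controller"),
]


def forward_chain(initial_facts, rules):
    """Apply rules repeatedly until no rule adds a new fact."""
    facts = set(initial_facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= facts and conclusion not in facts:
                facts.add(conclusion)
                changed = True
    return facts


print(sorted(forward_chain({"cpu_cabinet", "disk_drive"}, RULES)))
print(sorted(forward_chain({"printer"}, RULES)))  # no rule mentions printers
```

The second call returns only the fact it was given: a system like this has nothing to say about inputs its rule authors never anticipated.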

- Japan Announces Fifth Generation Computer Project: Up to $850M budgeted to build AI-powered computers by 1991

- US Launches Strategic Computing Initiative: $1B response program, the largest AI investment up to that time

- Expert Systems Market Peaks: Systems like DEC's XCON save their owners an estimated $40M/year

- The Crash Begins: Expert systems prove too brittle for real-world use

The collapse came from two directions at once. First, expert systems proved brittle in practice - they could only handle situations their programmers had anticipated. A medical system built to diagnose heart disease knew nothing about broken bones. When faced with unexpected inputs, these systems failed silently or gave dangerously wrong answers.

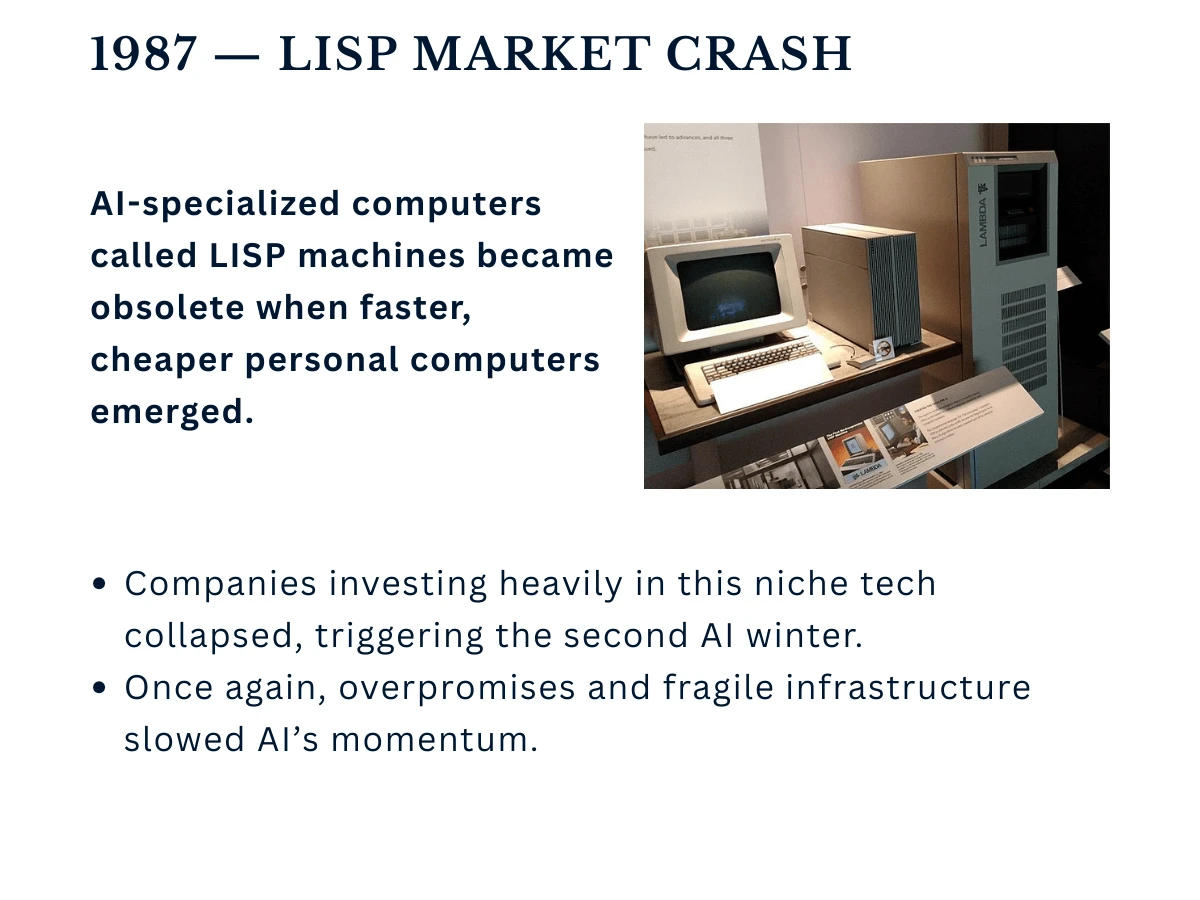

Second, the hardware market collapsed. Companies had invested millions in specialized LISP machines, but ordinary personal computers were becoming powerful enough to run AI software. Why buy expensive dedicated hardware when a desktop PC could do the job? The AI industry that had seemed unstoppable just two years earlier was suddenly in freefall.

The Second Winter (1990s): When the Internet Distracted Everyone

The collapse of expert systems triggered AI's second winter - and this one hit even harder. The 1990s became AI's forgotten decade. While the world obsessed over the internet and dot-com startups, AI research retreated to quiet university labs. Funding disappeared, students avoided AI programs, and the field seemed dead.

But beneath the surface, three crucial foundations were being laid - foundations that would eventually enable everything from Siri to ChatGPT.

- Data Explosion: The internet created vast datasets that AI would eventually need

- Computing Power: Moore's Law quietly made complex algorithms feasible

- Mathematical Advances: Statisticians developed machine learning theory while no one was watching

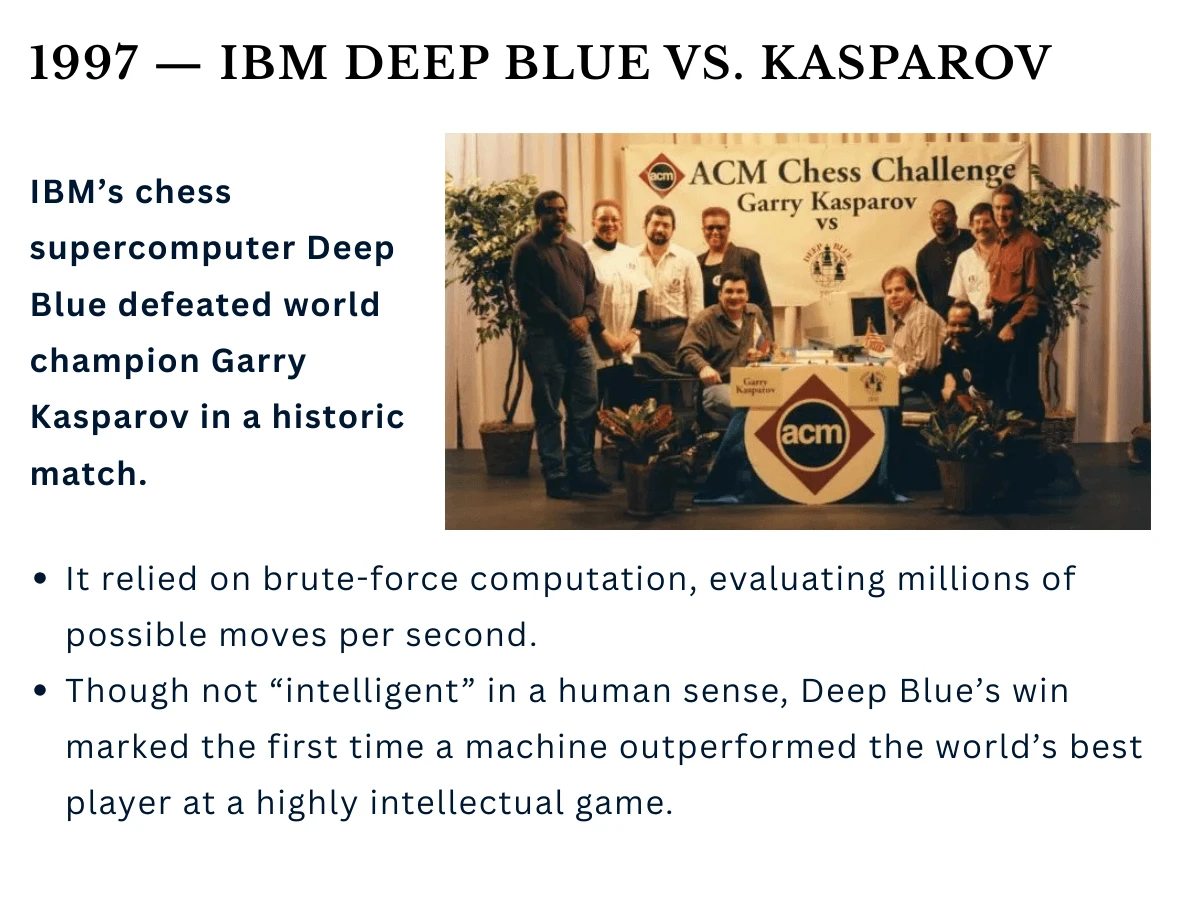

Then in 1997, something remarkable happened. IBM's Deep Blue computer defeated world chess champion Garry Kasparov - a feat that had seemed impossible just years earlier. For a brief moment, AI was back in the headlines. But the victory was bittersweet: Deep Blue used brute-force calculation, not the general intelligence researchers dreamed of.

The Silent Revolution (2000s): How Gamers Accidentally Saved AI

The new millennium brought a quiet transformation. While AI remained unfashionable in academia, something unexpected was brewing in the world of video games.

AI's resurrection came from an unlikely source: graphics cards. The GPUs designed to render realistic explosions and alien worlds turned out to be well suited to the matrix operations that power neural networks, because both workloads consist of many independent multiply-and-add steps that can run in parallel. Researchers could now train models far faster than on CPUs, by as much as 100 times in some reported cases.
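To see what those matrix operations look like, here is one neural network layer written out by hand in plain Python. It is an illustrative sketch, not GPU code: the function name `dense_layer` and the example numbers are assumptions, and real frameworks perform the same arithmetic as a single matrix multiplication.

```python
def dense_layer(inputs, weights, biases):
    """One fully connected layer: ReLU(weights @ inputs + biases), written out by hand."""
    outputs = []
    for row, bias in zip(weights, biases):
        # Each output is an independent dot product, so all rows can be computed in parallel.
        activation = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(max(0.0, activation))  # ReLU: negative activations become zero
    return outputs


print(dense_layer([1.0, 2.0], [[1.0, 1.0], [-1.0, 0.0]], [0.0, 0.5]))
```

No output depends on any other, which is the property that lets a GPU with thousands of small cores work on all of them at once.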

NVIDIA released its CUDA programming platform in 2007 so that developers could use GPUs, originally built for game graphics, for general-purpose computation. Within a few years, AI researchers were using these "graphics cards" to accelerate machine learning. This convergence would later make NVIDIA the world's most valuable chip company and enable the deep learning revolution.

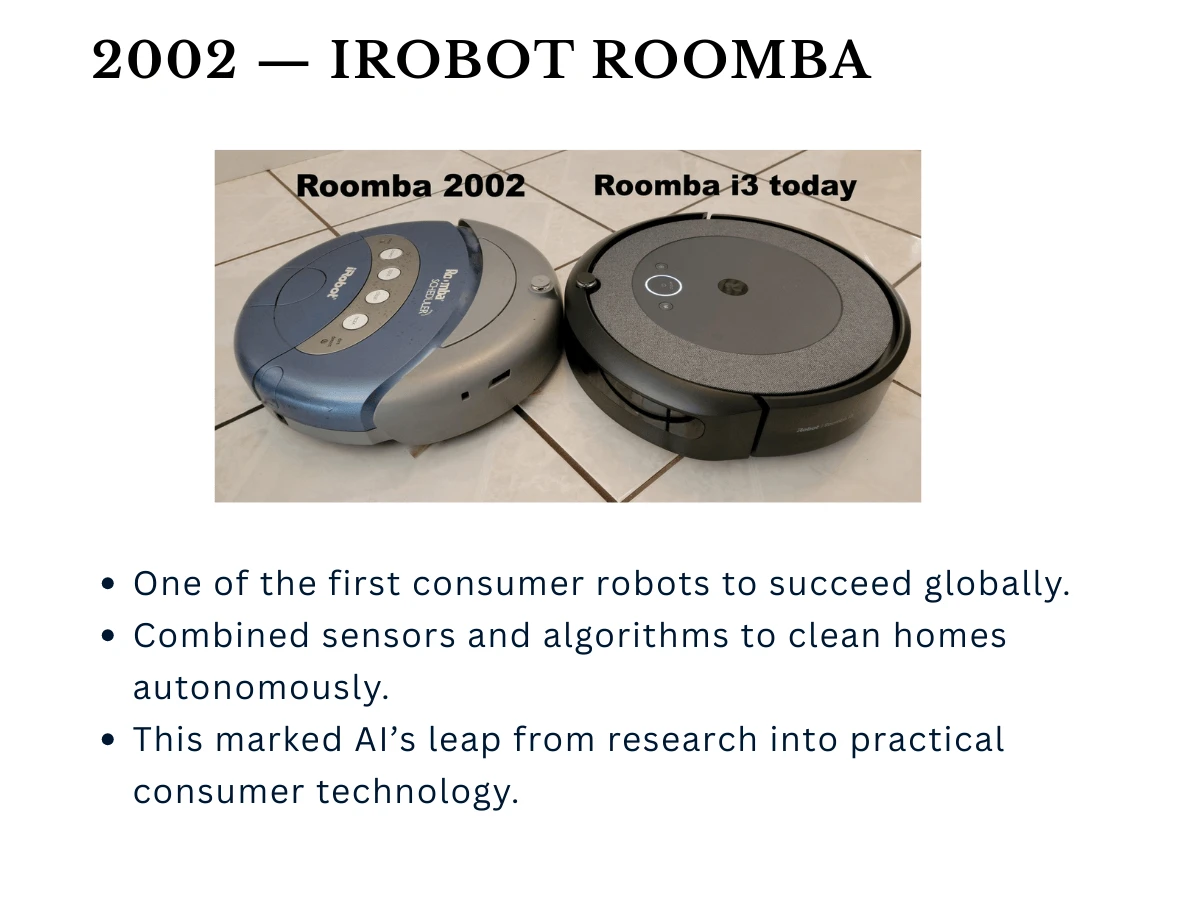

Meanwhile, AI quietly entered ordinary homes. In 2002, iRobot released the Roomba - a humble vacuum cleaner that could navigate rooms, avoid obstacles, and return to its charging station. It wasn't the robot butler of science fiction, but it proved AI could work reliably in the messy real world.

Deep Learning Explosion (2010s): The ImageNet Moment

The stage was set. GPUs provided the computing power. The internet provided the data. All that was needed was a spark - and in 2011, AI burst back into public consciousness.

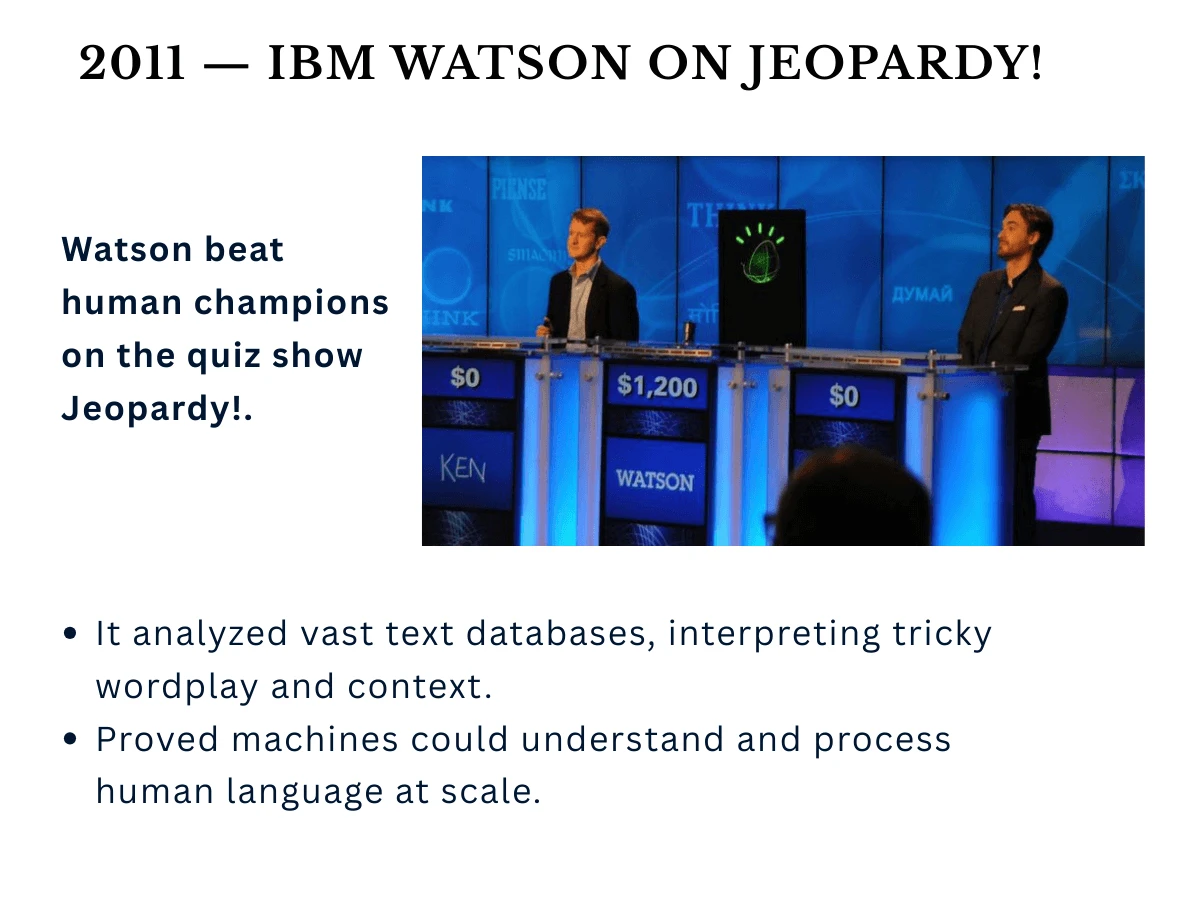

First came Watson. IBM's question-answering system defeated human champions on the game show Jeopardy!, demonstrating that AI could handle natural language well enough to parse wordplay and trivia.

That same year, Apple put AI in everyone's pocket. Siri became the first mainstream voice assistant, letting millions of people talk to their phones and get intelligent responses. AI was no longer just for researchers - it was becoming part of daily life.

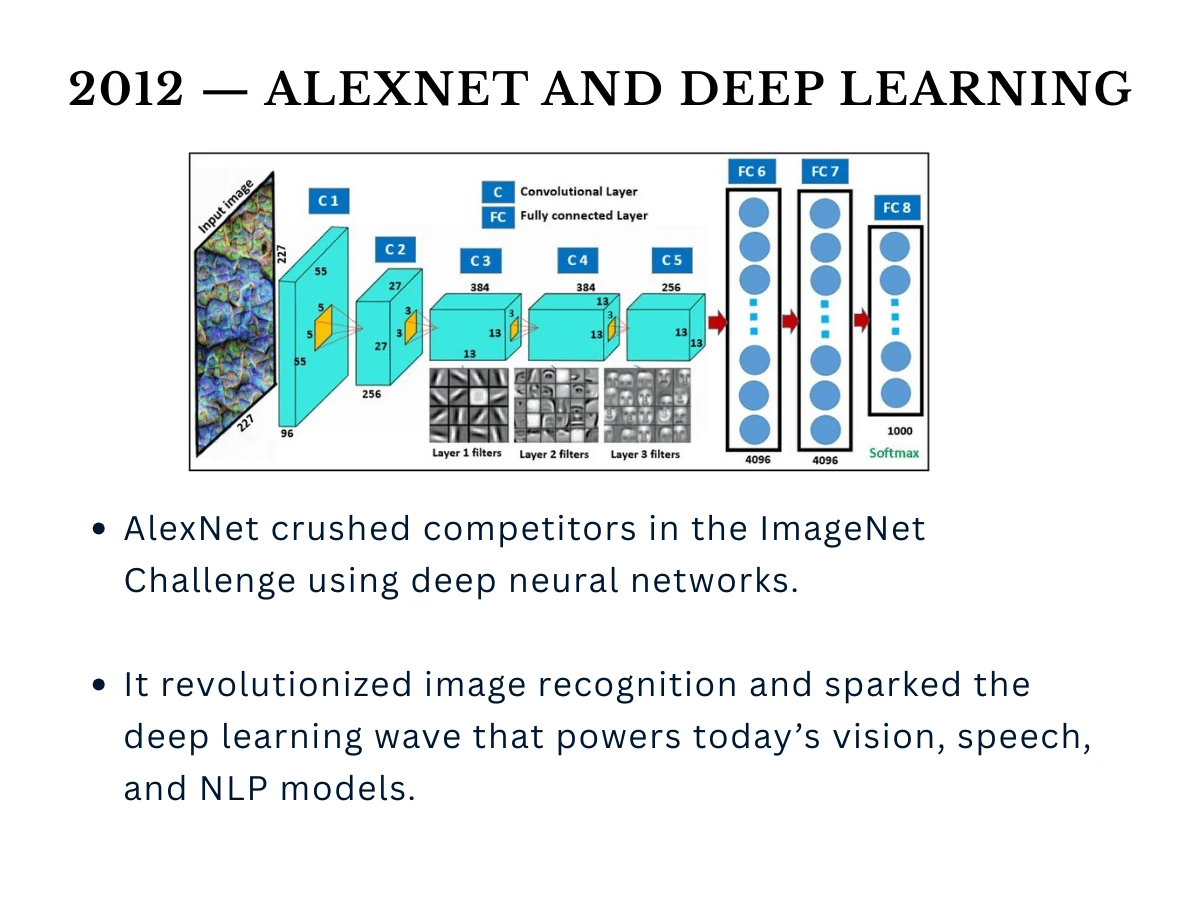

But the real revolution came the following year. On September 30, 2012, everything changed. Geoffrey Hinton's team at the University of Toronto entered the ImageNet competition - a challenge to identify objects in photos. Their deep learning system, AlexNet, didn't just win; it obliterated the competition, cutting the error rate by roughly 40% relative to the best traditional methods.

| Approach | Top-5 Error Rate | Improvement |
|---|---|---|
| Traditional Computer Vision (2011) | 25.8% | Baseline |
| Best Traditional Method (2012) | 26.2% | Worse than 2011 |
| AlexNet (Deep Learning) | 15.3% | 41% improvement |
| Human Performance (estimated) | 5.1% | AI suddenly feasible |
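The "41% improvement" in the table is a relative reduction in error, not a drop of 41 percentage points. The short check below shows the arithmetic using the table's own figures; the function name is just for illustration.

```python
def relative_reduction(baseline_error, new_error):
    """Fraction by which the error rate fell, relative to the baseline."""
    return (baseline_error - new_error) / baseline_error


# Figures taken from the table above (error rates in percent).
vs_2012_runner_up = relative_reduction(26.2, 15.3)
vs_2011_winner = relative_reduction(25.8, 15.3)
print(f"vs. best traditional method (2012): {vs_2012_runner_up:.1%}")
print(f"vs. 2011 baseline: {vs_2011_winner:.1%}")
```

Measured against either traditional result, the drop comes out at a little over 40%.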

The tech industry scrambled to hire anyone who understood deep learning. Google bought Hinton's company for a reported $44 million. Facebook hired Yann LeCun. The race was on - and it was about to accelerate beyond anyone's imagination.

The Transformer Era (2017-Present): Attention Is All You Need

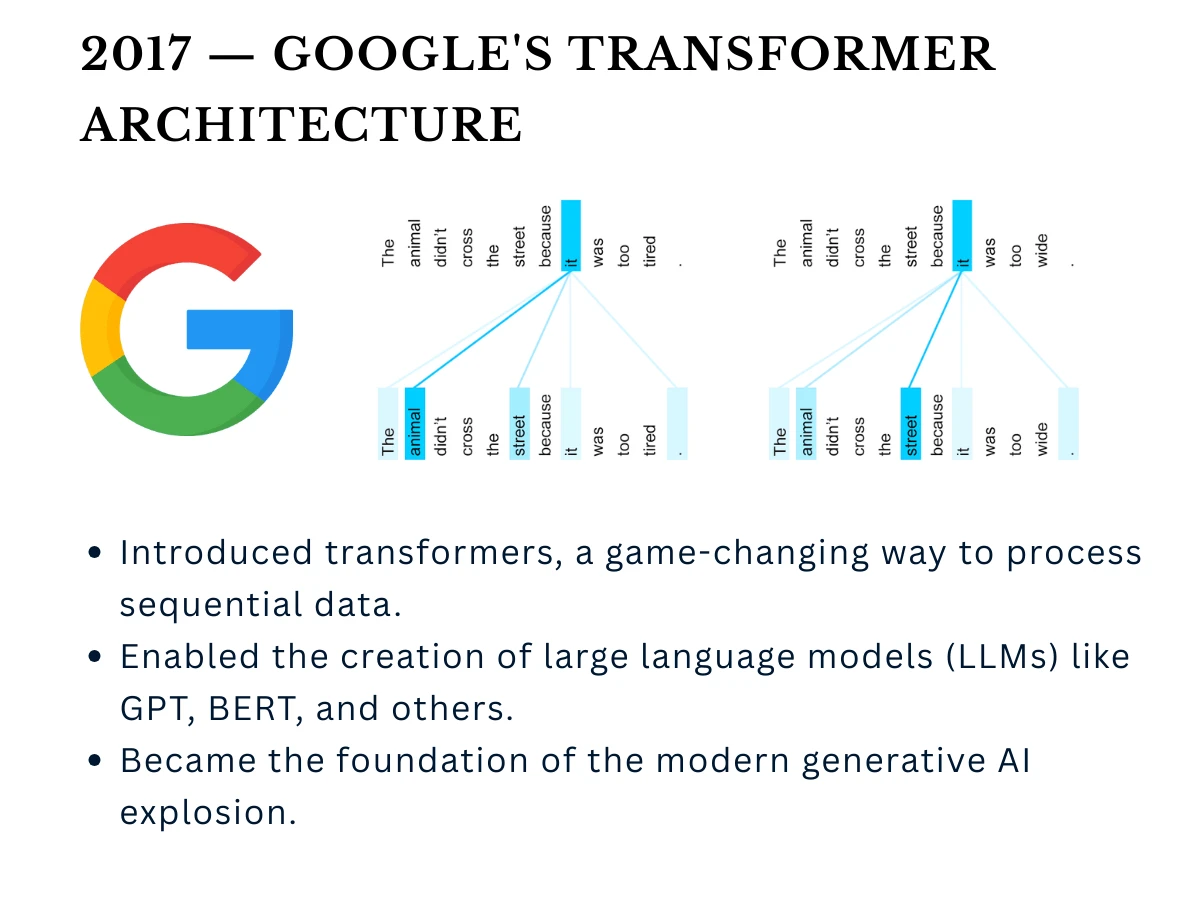

Deep learning had conquered image recognition. But understanding language - with all its ambiguity, context, and nuance - remained stubbornly difficult. Then in 2017, a team at Google published a paper with an audacious title: "Attention Is All You Need."

They had invented transformers - a new neural network architecture that could understand relationships between words no matter how far apart they appeared in a sentence. This seemingly technical innovation would power GPT, BERT, and eventually ChatGPT. But the real breakthrough wasn't the architecture itself; it was what came next.
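The core operation behind that ability is called attention. The sketch below is a minimal, plain-Python version of scaled dot-product attention under simplifying assumptions: it leaves out the learned projections, multiple heads, and masking that real transformers use, and the function names are illustrative. Every position compares its query with the keys of all positions, then takes a weighted average of their values.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Compare this position's query with every key, however far away it is.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ])
    return outputs
```

Because the score between two positions does not depend on how far apart they are, a word at the start of a sentence can influence one at the end as directly as its neighbor can.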

OpenAI discovered something unprecedented: language models got dramatically better simply by making them bigger and feeding them more data. This "scaling hypothesis" broke the traditional AI approach of clever algorithms and engineering tricks. Instead, raw computational power and data became the new currency of AI progress.

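- 2017: Google's "Attention Is All You Need" paper introduces the transformer

- GPT-1 (2018): 117M parameters, proof of concept

- GPT-2 (2019): 1.5B parameters, OpenAI initially withheld release

- GPT-3 (2020): 175B parameters, emergent abilities appear

- ChatGPT (2022): 100M users in about 60 days, AI goes mainstream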

Then came the moment that changed everything. In November 2022, OpenAI released ChatGPT to the public. Within two months, an estimated 100 million people were using it - at the time, the fastest adoption ever recorded for a consumer application. Suddenly, everyone from students to CEOs was having conversations with AI.

The Ethics Awakening (2018-Present): When AI Got Real

As AI systems went from lab experiments to making real decisions about people's lives, society suddenly woke up to a disturbing reality: these incredibly powerful tools could amplify human biases, automate discrimination, and make consequential mistakes with superhuman confidence.

In 2018, Joy Buolamwini's "Gender Shades" study revealed that major commercial facial analysis systems misclassified darker-skinned women at error rates as high as 34.7%, compared with under 1% for lighter-skinned men. Amazon had to scrap its AI hiring tool that systematically discriminated against women. These weren't theoretical problems - they were affecting real people's lives right now.
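Audits like this rest on a simple idea: measure the error rate separately for each group instead of reporting one overall number. The sketch below shows that calculation on a handful of made-up records; the data, group labels, and function name are invented for illustration and are not taken from the study.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """records: iterable of (group, predicted_label, true_label) tuples."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Made-up example data, for illustration only.
sample = [
    ("group_a", "female", "female"),
    ("group_a", "male", "male"),
    ("group_a", "male", "male"),
    ("group_a", "female", "female"),
    ("group_b", "male", "female"),
    ("group_b", "female", "female"),
    ("group_b", "male", "female"),
    ("group_b", "male", "male"),
]
print(error_rate_by_group(sample))
```

On this toy data the overall error rate is 25%, which hides the fact that every mistake falls on one group.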

The AI ethics movement exploded from a niche academic concern into mainstream conversation. What started with a few researchers raising alarms became congressional hearings, international regulations, and entire departments at tech companies dedicated to "responsible AI."

- The Bias Reckoning (2018-2020): Studies exposed how AI systems trained on historical data perpetuated discrimination in hiring, lending, criminal justice, and healthcare. Facial recognition worked poorly for people of color. Medical algorithms gave worse recommendations for Black patients. The pattern was clear: AI learned our prejudices.

- The Deepfake Crisis (2019-2020): AI-generated fake videos became convincing enough to raise fears for democratic elections. Synthetic media could show anyone's face saying anything. Ahead of the 2020 elections, concern about deepfake campaigns pushed platforms to write new policies and develop detection tools.

- The Autonomous Weapons Debate (2019-Present): Over 4,500 AI researchers signed pledges refusing to develop lethal autonomous weapons. The UN began discussions on banning 'killer robots.' Military AI became the new nuclear weapons debate - powerful, terrifying, and potentially unstoppable.

- The Labor Disruption Panic (2020-2023): As AI coding assistants, writing tools, and art generators went mainstream, entire professions faced obsolescence anxiety. Artists protested AI training on their work without consent. Writers worried about replacement. The future of creative work looked uncertain.

- The Regulation Wave (2021-Present): The EU proposed the AI Act - the first comprehensive AI regulation. China released AI ethics guidelines. The US held congressional hearings with AI CEOs. Countries raced to regulate before AI got too powerful to control.

A new generation of researchers focused entirely on making AI safe and beneficial. OpenAI, Anthropic, and DeepMind created dedicated safety teams. The goal: ensure that when we eventually build AGI, it's aligned with human values and under human control. The stakes couldn't be higher - get it wrong, and AI might be humanity's last invention.

The Current State (2024-2025): Most major AI companies now publish responsible AI principles and run some form of ethics or safety review. Bias testing is increasingly expected before deployment. Transparency reports describe how some models were trained. But problems persist: ChatGPT still hallucinates confidently. Image generators reproduce stereotypes. The balance between innovation speed and safety remains precarious.

This ethical awakening fundamentally changed how AI is developed. What was once a pure technical challenge became a sociotechnical one - requiring philosophers, ethicists, policymakers, and affected communities at the table alongside engineers. The question shifted from "Can we build it?" to "Should we build it, and if so, how?"

Responsible AI job postings grew from practically non-existent in 2019 to nearly 1% of all AI postings by 2025, with over 100,000 positions a year calling for AI ethics expertise. The EU AI Act applies to AI systems used in Europe, regardless of where they are built. By 2024, over 60 countries had proposed or passed AI-specific legislation. Ethics went from afterthought to business requirement.

Hidden Patterns: What AI History Really Teaches Us

Looking back across 75 years, several surprising patterns emerge that most AI histories miss:

Every major AI breakthrough came from researchers who combined multiple fields. Turing was a mathematician-philosopher. McCulloch was a neuropsychiatrist. Hinton studied psychology before computer science. The biggest advances happen at the intersections.

- The 30-Year Cycle: AI goes through predictable waves of hype, disappointment, and renewal roughly every 30 years

- The Infrastructure Delay: Breakthrough ideas often wait decades for the right infrastructure (GPUs, internet data, etc.)

- The Serendipity Factor: Most major advances were accidents or byproducts of other research

- The Gender Gap: Female pioneers like Ada Lovelace and Barbara Liskov made crucial contributions but were systematically erased from the narrative

- The Corporate Takeover: Academic research led for 60 years, but since 2010, private companies have driven most breakthroughs

What's Next: The Patterns Point to AGI

If history is a guide, we're entering the most exciting phase yet. The current transformer-based systems show what some researchers describe as early signs of general intelligence, the holy grail that AI researchers have pursued for 75 years. But history also suggests we should be humble about predictions.

Based on historical patterns, the next breakthrough will likely come from an unexpected direction. Quantum computing? Neuromorphic chips? Biological-AI hybrids? The revolution might already be brewing in a garage, university lab, or research paper that seems irrelevant today.

The Long Arc of Intelligence

AI's history isn't just about technology—it's about humanity's deepest questions. What does it mean to think? Can consciousness arise from computation? Are we creating our successors or our partners?

The 75-year journey from Turing's question to ChatGPT's answers shows that progress is rarely linear. The field has survived two winters, numerous false starts, and countless predictions of both doom and utopia. What emerges is a story of human persistence, creativity, and the audacious belief that we can understand intelligence itself.

The future is not some place we are going to, but one we are creating. The paths are not to be found, but made, and the activity of making them changes both the maker and the destination.

Continue Your AI Journey

Now that you understand AI's fascinating history, you're ready to explore the different types of AI systems being built today. Our next tutorial breaks down the crucial distinctions between Narrow AI, General AI, and Superintelligence.