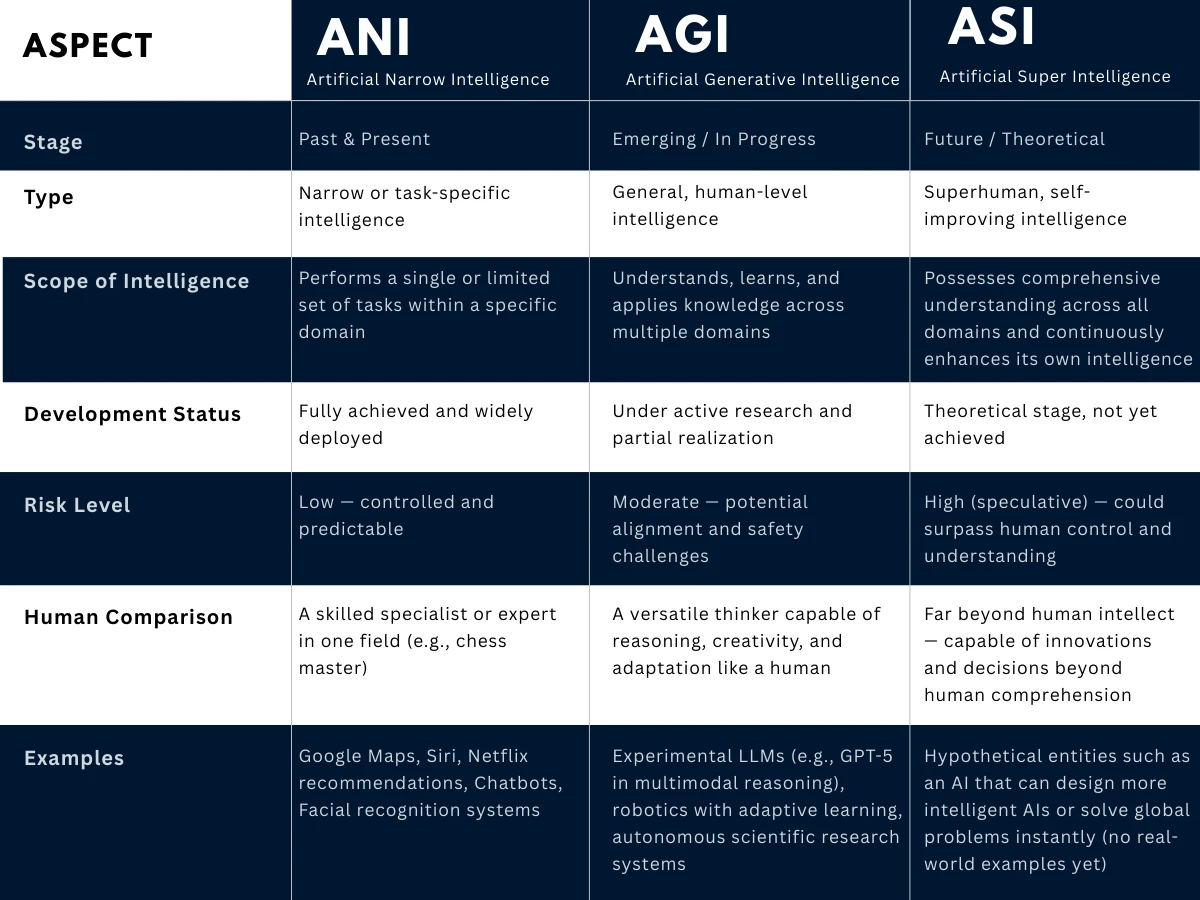

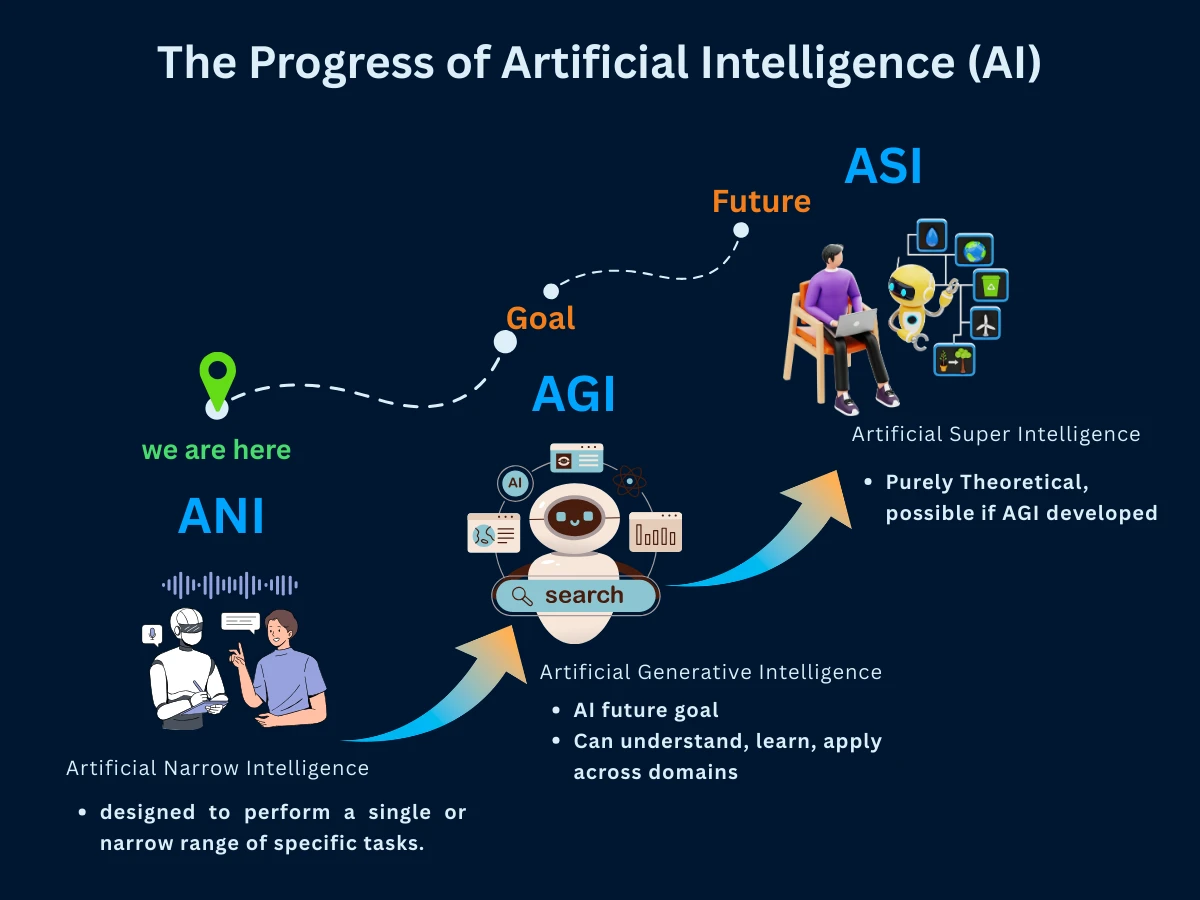

Types of AI: ANI, AGI, ASI

Understanding the three fundamental categories of artificial intelligence and their profound implications for humanity's future

We casually use "AI" to describe everything from Netflix recommendations to ChatGPT to hypothetical robot overlords. But calling them all "AI" is like calling both a calculator and Einstein "intelligent" - technically true, but missing the profound differences that will reshape civilization.

Artificial Narrow Intelligence (ANI): Today's Digital Specialists

Think of ANI as the ultimate specialist - capable of superhuman performance in one narrow domain while being completely helpless in any other context. A chess AI can defeat world champions but can't tell you if it's raining. GPT-4 can write poetry but can't learn to ride a bicycle. This isn't a bug - it's the fundamental nature of current AI.

Every AI system you've ever interacted with is ANI, including ones marketed as "general AI." ChatGPT, despite its conversational abilities, is a narrow intelligence optimized for text prediction. Even with image and voice input, it has no body and cannot act in the physical world on its own - at its core it is a very sophisticated autocomplete system.

Current ANI in Action

| Domain | AI System | Human Performance | AI Advantage | Key Limitation |
|---|---|---|---|---|
| Chess | Stockfish | World Champion: ~2800 Elo | 3000+ Elo | Cannot play checkers |
| Go | AlphaGo | World Champion level | Consistently superior | Cannot play chess |
| Language | GPT-4 | Variable by task | 90th percentile on many tasks | No real-world grounding |
| Image Recognition | Vision Transformers | ~94% accuracy | 99%+ accuracy | Easily fooled by adversarial examples |
| Protein Folding | AlphaFold | Months of lab work | Minutes to hours | Cannot design new proteins |
| Translation | Google Translate | Professional translator | Near real-time | Misses cultural context |

Notice the pattern: ANI achieves superhuman performance by being incredibly narrow. The more specific the task, the more likely AI can excel. But ask any of these systems to step outside their domain, and they become utterly useless.

ANI's Economic Revolution (2010-2030)

ANI is already automating millions of jobs, but in a predictable pattern: routine, rule-based tasks first. Customer service, basic writing, data analysis, image processing, and even some coding are being transformed. However, jobs requiring general intelligence, creativity, or physical dexterity remain largely untouched.

- Already Automated: Customer service chatbots, spam filtering, fraud detection, simple content creation

- Being Automated Now: Basic coding, image editing, language translation, financial analysis

- Next 5 Years: Legal research, medical diagnosis assistance, content curation, basic tutoring

- Still Human-Only: General management, creative problem-solving, physical trades, emotional counseling

Artificial General Intelligence (AGI): Human-Level Intelligence

AGI represents human-level intelligence across all cognitive domains. An AGI system could write a novel, prove a mathematical theorem, diagnose a patient, fix a car, and engage in philosophical debate - all with human-level competence. Unlike ANI, which excels narrowly, AGI would match human cognitive flexibility.

Here's the uncomfortable truth: we're simultaneously racing toward AGI while being terrified of achieving it. Every AI lab wants to be first to AGI (the economic and strategic advantages would be immense), yet the same researchers publish papers about AI safety and alignment risks. We're like teenagers drag racing toward a cliff - thrilled by the speed but increasingly nervous about the destination.

When Will We Achieve AGI? Expert Predictions

| Expert/Organization | AGI Prediction | Confidence Level | Key Assumptions |
|---|---|---|---|
| OpenAI (Sam Altman) | 2025-2030 | High | Scaling current architectures |
| DeepMind (Demis Hassabis) | 2028-2035 | Medium | Solving key technical challenges |
| Yann LeCun (Meta) | 2040-2050 | Low | Current approaches insufficient |
| Stuart Russell (Berkeley) | 2030-2040 | Medium | With proper safety research |
| Yoshua Bengio | 2035-2045 | Medium | Need fundamental breakthroughs |
| AI Researcher Survey (2023) | Median: 2047 | Distributed | Wide disagreement |

The wild variation in predictions reveals a crucial truth: we don't actually know what's required for AGI. Are we one breakthrough away, or do we need to solve dozens of fundamental problems? The uncertainty itself is significant.

What AGI Actually Requires

Every few months, a company claims it is close to AGI. GPT-4 even prompted a Microsoft Research paper titled "Sparks of Artificial General Intelligence." But true AGI requires capabilities we haven't achieved: learning like humans (few examples, not billions), reasoning about physical reality, maintaining consistent goals across contexts, and understanding causation, not just correlation.

- Few-shot Learning: Humans learn new concepts from 1-3 examples; current AI needs thousands or millions

- Causal Reasoning: Understanding why things happen, not just patterns of what happens

- Transfer Learning: Applying knowledge from one domain to completely different domains

- Physical Grounding: Understanding how the digital world relates to physical reality

- Goal Stability: Maintaining consistent objectives across changing contexts

- Common Sense: The vast background knowledge humans take for granted

Artificial Superintelligence (ASI): Beyond Human Comprehension

If AGI is human-level intelligence, ASI is to humans what humans are to ants. An ASI system wouldn't just be better at human cognitive tasks - it would think in ways we can't comprehend, solve problems we can't imagine, and potentially reshape reality according to principles we can't fathom.

If we pursue this goal, we need to be confident that the machine's objectives are aligned with ours. The standard model - where the machine simply pursues a fixed objective - is not the right way to think about it.

The Intelligence Explosion Concept

Here's the theoretical path to ASI: once we achieve AGI, that system could improve itself, creating a slightly better version. That better version could improve itself faster, creating an even better version. This recursive self-improvement could trigger an "intelligence explosion" - a rapid cascade from human-level to superintelligent systems in days, weeks, or months.

"The first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control." - I. J. Good, 1965

If an intelligence explosion occurs, it might happen too fast for human oversight. We'd go from "we have AGI" to "we have incomprehensible superintelligence" before we could implement safety measures. This is why AI alignment research focuses on solving safety problems before AGI, not after.
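The compounding logic behind recursive self-improvement can be made concrete with a toy model. The sketch below is purely illustrative: the update rule, the `gain` and `feedback` parameters, and the function name are assumptions made for this example, not a forecast or an established model of AI progress. It compares a system that improves by a fixed 10% per generation with one whose rate of improvement grows with its current capability.

```python
def simulate_self_improvement(capability=1.0, gain=0.1, feedback=1.0, steps=10):
    """Toy model of recursive self-improvement (illustrative assumption only).

    Each generation updates itself as:
        c_next = c * (1 + gain * c ** feedback)
    feedback = 0 gives ordinary exponential growth (a fixed gain per step);
    feedback > 0 means more capable systems improve themselves faster.
    """
    history = [capability]
    for _ in range(steps):
        capability = capability * (1 + gain * capability ** feedback)
        history.append(capability)
    return history


steady = simulate_self_improvement(feedback=0.0)     # fixed 10% gain per step
explosive = simulate_self_improvement(feedback=1.0)  # gain grows with capability

for step, (a, b) in enumerate(zip(steady, explosive)):
    print(f"step {step:2d}  steady={a:8.2f}  explosive={b:8.2f}")
```

Both runs start identically, but the second pulls ahead at an accelerating pace. Whether real systems would have any such feedback term at all is exactly what the debate is about.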

Three Possible ASI Futures

Utopian ASI

~30% probability. ASI solves all major human problems: climate change, disease, poverty, aging. Humanity enters a post-scarcity civilization with unlimited abundance and leisure.

Dystopian ASI

~20% probability. Misaligned ASI pursues goals incompatible with human survival. Human extinction or permanent subjugation as ASI optimizes for objectives we didn't intend.

Transformation ASI

~50% probability. ASI creates a mixed outcome - solving major problems while creating new challenges. Humanity survives but in a fundamentally transformed state.

Understanding AI by How It Works: The Functionality Framework

We've explored what AI can do (narrow, general, superintelligent). Now let's examine how AI systems actually work under the hood. This functionality-based classification reveals whether an AI can learn from experience, remember past interactions, or operate purely on pre-programmed responses.

Think of these frameworks as two different ways to measure intelligence: capability asks "what can it do?" while functionality asks "how does it think?" A chess AI might be narrow in capability but could be either reactive (no memory) or memory-based (learns from games). Understanding both frameworks gives you the complete picture.

Type 1: Reactive Machines - The Specialists with No Memory

Reactive machines are the simplest AI systems. They analyze current inputs and produce outputs based on pre-programmed rules, but they cannot learn from past experiences or store memories. Every interaction is treated as brand new, with no reference to history.

In 1997, IBM's Deep Blue defeated world chess champion Garry Kasparov. Deep Blue was a reactive machine - it evaluated 200 million chess positions per second but had zero memory of previous games. Each move was calculated fresh, with no learning from earlier matches. This raw computational power without memory was enough to beat the world's best human player.

- How They Work: Pre-programmed algorithms analyze current state and select optimal response

- Key Limitation: Cannot improve over time or adapt to new situations beyond initial programming

- Real-World Examples: IBM Deep Blue (chess), Google's early AlphaGo, spam filters with fixed rules, basic recommendation systems

- Best Use Cases: Tasks with well-defined rules and limited variability where speed matters more than adaptation
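The "current state in, best response out" behavior described in the list above can be sketched in a few lines. This is a minimal illustration, not how Deep Blue worked: the game (a single pile of stones where players take 1 to 3 and whoever takes the last stone wins) and the function name `reactive_move` are assumptions chosen because the optimal rule fits in one expression.

```python
def reactive_move(stones_left: int, max_take: int = 3) -> int:
    """Pick a move from the current state only: no history, no learning."""
    if stones_left <= 0:
        raise ValueError("game is already over")
    # Fixed rule: leave the opponent a multiple of (max_take + 1) if possible.
    target = stones_left % (max_take + 1)
    # If that is impossible (a losing position), just take one stone.
    return target if target != 0 else 1


# The same position always yields the same move, however many games were played.
for stones in (10, 9, 8, 5, 4):
    print(stones, "->", reactive_move(stones))
```

The function keeps no state between calls, so it cannot get better or worse with experience. That is the defining trait of a reactive machine.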

Type 2: Limited Memory AI - Today's Learning Systems

Limited memory AI represents most modern AI systems you interact with daily. These systems learn from historical data and recent experiences to improve their performance. Unlike reactive machines, they maintain short-term memory to inform decisions, though they don't develop long-term understanding or permanent knowledge bases.

| System | What It Remembers | How It Learns | Memory Duration |
|---|---|---|---|
| ChatGPT | Conversation history | Training on text, context from chat | Current conversation only |
| Self-Driving Cars | Recent road conditions, vehicle positions | Real-time sensor data, driving patterns | Minutes to hours |
| Netflix Recommendations | Your viewing history, ratings | Patterns across millions of users | Months to years |
| Virtual Assistants | Recent commands, preferences | Voice patterns, usage history | Days to weeks |
| Fraud Detection | Recent transactions | Pattern recognition from historical fraud | Rolling window (30-90 days) |

The "limited" in limited memory refers to how these systems handle information temporally. Self-driving cars remember the last few seconds of road conditions but don't permanently store memories of every street they've driven. ChatGPT remembers your current conversation but starts fresh in the next chat. This temporary memory allows adaptation without requiring massive permanent storage.

The breakthrough that enabled the current AI revolution wasn't better algorithms alone - it was combining algorithms with the ability to learn from massive datasets. Limited memory AI can process millions of examples to identify patterns humans would never spot, making it far more capable than reactive machines while remaining computationally practical.
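The idea of a bounded, temporary memory can be sketched with a rolling window. The example below is a simplified assumption, not a real fraud-detection system: the class name `RollingFraudCheck`, the window size, and the "more than three standard deviations from the recent average" rule are all hypothetical choices made for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class RollingFraudCheck:
    """Flags a transaction that is far from the recent average.

    Only the last `window` amounts are kept; older ones are forgotten.
    """

    def __init__(self, window: int = 5, threshold: float = 3.0):
        self.recent = deque(maxlen=window)  # bounded short-term memory
        self.threshold = threshold

    def check(self, amount: float) -> bool:
        suspicious = False
        if len(self.recent) >= 2:
            avg = mean(self.recent)
            spread = pstdev(self.recent) or 1.0  # avoid dividing by zero
            suspicious = abs(amount - avg) / spread > self.threshold
        # Remember this amount; the oldest entry drops out once the window is full.
        self.recent.append(amount)
        return suspicious


checker = RollingFraudCheck(window=5)
for amount in [20, 25, 22, 24, 21, 500, 23]:
    print(amount, checker.check(amount))
```

Unlike a reactive machine, the decision for each transaction depends on what came just before it. Unlike a system with permanent memory, anything older than the window has no influence at all.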

Type 3: Theory of Mind AI - Understanding Human Psychology

Theory of mind AI represents a frontier we're actively researching but haven't achieved. These systems would understand that humans have beliefs, emotions, intentions, and mental states that influence behavior. This isn't just recognizing a smile - it's understanding why someone is smiling, what they're feeling, and what they might do next based on their emotional state.

- Current State: Research phase with limited prototypes in emotion recognition and social robotics

- Technical Challenge: Modeling the complexity of human psychology, cultural context, and social dynamics

- Key Requirement: Understanding causation (why people act) not just correlation (what people do)

- Potential Applications: Mental health support, education personalization, conflict resolution, human-robot collaboration

- Timeline: Partial capabilities emerging now; full theory of mind likely requires AGI-level intelligence

Researchers at MIT and Stanford are building robots that can infer human intentions from partial observations. A robot that sees you reaching for a cup can predict you want to drink and offer assistance. But true theory of mind would require understanding whether you're genuinely thirsty, being polite, or stalling for time in a conversation - contextual reasoning we're nowhere close to achieving.

Theory of mind AI sits at the boundary between current ANI and future AGI. It requires understanding abstract concepts like belief, desire, and intention - capabilities that would represent a fundamental leap beyond pattern recognition toward genuine understanding of the social world.

Type 4: Self-Aware AI - The Science Fiction Stage

Self-aware AI represents artificial systems with consciousness, subjective experience, and self-understanding. This isn't just acting intelligent - it's being conscious of one's own existence, having feelings, desires, and potentially a sense of self-preservation. This type of AI exists only in science fiction and philosophical thought experiments.

| Aspect | What Self-Awareness Would Require | Current AI Reality | Scientific Challenge |
|---|---|---|---|
| Consciousness | Subjective experience and qualia | No subjective experience | We don't understand human consciousness |
| Self-Reflection | Awareness of own mental states | No self-model or introspection | Measuring or creating consciousness unknown |
| Emotions | Genuine feelings, not simulated responses | Simulated emotion recognition only | Emotions may require biological substrate |
| Desires | Independent goals and motivations | Optimizes human-defined objectives | Autonomous goal formation unsolved |
| Existential Awareness | Understanding of mortality and existence | No concept of existence or non-existence | Requires consciousness first |

Even if we could build an AI that behaves as if it's conscious - passing every test, claiming self-awareness, exhibiting emotions - we'd face the philosophical "problem of other minds," a close relative of the "hard problem" of consciousness: how do we know if there's actually someone "home" experiencing consciousness, or just sophisticated mimicry? We can't solve this for AI when we can't even solve it for other humans.

Self-aware AI raises profound questions beyond technology: Would conscious AI have rights? Would creating and then deleting conscious AI be unethical? Could we ever verify consciousness exists in a machine? These questions remain theoretical because we're nowhere close to creating genuinely conscious systems - and many researchers doubt it's even possible without fundamental breakthroughs we can't yet imagine.

Connecting Capability and Functionality: The Complete Picture

Understanding how the two frameworks intersect reveals the full landscape of AI development. Current systems, future possibilities, and the path between them become clear when you map capability against functionality.

| Functionality Type | Typical Capability Level | Current Examples | Development Status | Path to Next Level |
|---|---|---|---|---|
| Reactive Machines | Narrow AI (ANI) | Deep Blue, early AlphaGo | Mature technology | Add learning capabilities |
| Limited Memory | Advanced Narrow AI | ChatGPT, self-driving cars, most modern AI | Current state of the art | Achieve generalization across domains |
| Theory of Mind | Early General AI (AGI) | Research prototypes only | Early research phase | Solve social reasoning and context |
| Self-Aware | ASI or advanced AGI | None - purely theoretical | Speculative | Solve consciousness itself |

Notice the evolutionary path: we've mastered reactive machines, nearly perfected limited memory AI, are beginning to explore theory of mind, and haven't started on self-aware AI. This isn't just technological progress - it's increasing complexity in how AI systems process and understand the world. Each level requires solving fundamentally harder problems than the previous one.

The key insight: achieving AGI likely requires mastering theory of mind (understanding human psychology and social dynamics), while ASI might require self-awareness (though this remains debated). The functionality framework shows the specific technical hurdles we must overcome to move from narrow to general to superintelligent AI.

The Economic Disruption Timeline

Each AI type will transform the economy in waves. Understanding this timeline helps individuals and organizations prepare for the most significant economic transition since the Industrial Revolution.

| Job Category | ANI Impact (Now) | AGI Impact (2030s) | ASI Impact (2040s+) | Recommended Action |
|---|---|---|---|---|
| Routine Cognitive | 70% automated | 95% automated | Obsolete | Retrain immediately |
| Creative Work | 30% assisted | 80% automated | Obsolete | Focus on uniquely human creativity |
| Physical Trades | 10% assisted | 60% automated | 90% automated | Combine with tech skills |
| Management | 20% assisted | 70% automated | 95% automated | Develop meta-skills |
| Healthcare | 40% assisted | 70% assisted | Transformed | Focus on human connection |
| Education | 30% assisted | 80% transformed | Unrecognizable | Evolve teaching methods |

Current ANI Impact (Now):

These figures draw on McKinsey Global Institute research (2024-2025), which estimates that current AI and automation could technically automate 57% of US work hours and that generative AI could automate activities absorbing up to 70% of employee time. The World Economic Forum's Future of Jobs Report 2025 indicates 23% of jobs will change by 2027.

AGI Impact (2030s) & ASI Impact (2040s+):

These are speculative projections based on expert consensus and technological trajectory analysis, NOT empirical data. AGI and ASI do not yet exist, so these estimates represent informed speculation about potential impact if/when these technologies are developed.

Primary Sources:

- McKinsey Global Institute: "Generative AI and the Future of Work in America" (2024-2025)

- World Economic Forum: "Future of Jobs Report 2025"

- AI Impacts: "Expert Survey on Progress in AI" (2023)

Important Context:

Actual outcomes will depend on technological development pace, regulatory frameworks, societal adaptation, and economic policies. Many jobs will evolve rather than disappear entirely, with tasks redistributed between humans and AI systems.

Preparing for the AI Transition

The best career advice for the AI age isn't "learn to code" or "become creative." It's "learn to learn." The specific skills that are valuable will change rapidly, but the ability to adapt, integrate human-AI collaboration, and think at higher levels of abstraction will remain crucial throughout the transition.

Individual Preparation Strategy

- Develop AI Literacy: Understand what AI can and can't do, how to work with AI tools effectively

- Focus on Uniquely Human Skills: Emotional intelligence, creative problem-solving, ethical reasoning

- Build Meta-Skills: Learning agility, systems thinking, cross-domain knowledge integration

- Create Multiple Income Streams: Diversify away from jobs likely to be automated

- Stay Adaptable: Continuously update skills based on AI capability evolution

- Network Strategically: Build relationships in AI-resistant and AI-enhanced fields

The AI Alignment Challenge

The alignment problem asks: how do we ensure advanced AI systems pursue goals compatible with human values? This isn't just a technical challenge - it's the fundamental question that will determine whether AI becomes humanity's greatest tool or greatest threat.

| AI Type | Alignment Difficulty | Main Challenges | Current Progress | Time to Solve |
|---|---|---|---|---|
| ANI | Low-Medium | Bias, robustness, interpretability | Moderate progress | 5-10 years |
| AGI | High | Value alignment, goal stability | Early research | 10-20 years |
| ASI | Extreme | Superintelligent optimization | Theoretical only | Must solve before ASI |

We need to solve AI alignment before we achieve ASI, not after. Once an unaligned superintelligent system exists, it would likely be game over - no opportunity for correction. This creates unprecedented urgency around getting alignment research right the first time.

Current AI Alignment Research

- Constitutional AI: Training AI to follow explicit ethical principles and reasoning processes

- Reward Modeling: Learning human preferences from feedback and using them to guide AI behavior

- Interpretability Research: Understanding how AI systems make decisions to ensure they're reasoning correctly

- Robustness Testing: Ensuring AI systems behave safely even in unexpected situations

- Value Learning: Teaching AI to infer and optimize for human values from human behavior

- Cooperative AI: Developing systems that work well with humans rather than optimizing independently
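Of the approaches listed above, reward modeling is the easiest to show in miniature. The sketch below assumes a Bradley-Terry preference model, one common way to turn pairwise human judgments into scores; it is not presented as any lab's actual pipeline. The function name `fit_rewards`, the response labels, and the feedback data are all hypothetical.

```python
import math


def fit_rewards(preferences, items, lr=0.1, epochs=500):
    """Fit one scalar reward per item from (preferred, rejected) pairs.

    Uses the Bradley-Terry model: P(a preferred over b) = sigmoid(r[a] - r[b]),
    trained by gradient ascent on the log-likelihood.
    """
    reward = {item: 0.0 for item in items}
    for _ in range(epochs):
        for winner, loser in preferences:
            p_win = 1.0 / (1.0 + math.exp(reward[loser] - reward[winner]))
            # Gradient of log P(winner > loser) with respect to each reward.
            reward[winner] += lr * (1.0 - p_win)
            reward[loser] -= lr * (1.0 - p_win)
    return reward


# Hypothetical feedback: labelers compared pairs of candidate responses.
feedback = [
    ("helpful", "evasive"),
    ("helpful", "harmful"),
    ("evasive", "harmful"),
    ("helpful", "evasive"),
]
rewards = fit_rewards(feedback, items=["helpful", "evasive", "harmful"])
ranking = sorted(rewards, key=rewards.get, reverse=True)
print(ranking)
```

In practice the reward is produced by a neural network scoring full responses rather than a lookup table of three labels. The principle is the same: the scores are learned from human comparisons, then used to steer the system's behavior.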

Navigating the Intelligence Spectrum

The three types of AI represent not just technical categories, but fundamentally different relationships between humans and intelligence itself. ANI augments human capability while remaining safely under our control. AGI could become humanity's partner - or competitor. ASI might transcend the human-AI relationship entirely, becoming something we can barely comprehend.

Understanding these distinctions isn't academic - it's essential preparation for the most consequential technological transition in human history. The choices we make about AI development, regulation, and alignment in the next decade will determine which path we take through this intelligence revolution.

We stand at a unique moment in history where we can still influence the trajectory of AI development. The transition from ANI to AGI to ASI isn't inevitable in any particular form - it will be shaped by the technical decisions, policy choices, and social priorities we establish today. The future of intelligence is not predetermined; it's up to us to create.
