Supervised Learning Explained: Classification and Regression

Master the foundation of production AI - from Gmail's spam detection to Tesla's Autopilot

Supervised learning powers 70% of production AI systems, from Gmail's spam detection to Tesla's object recognition. The key to success isn't choosing fancy algorithms; it's having good data and solving the right problem. PayPal's $5B fraud prevention and Google's 99.9% spam accuracy show that simple algorithms with quality data can outperform complex models built on poor foundations.

What You'll Learn

The biggest mistake in supervised learning isn't choosing the wrong algorithm; it's solving the wrong problem with the wrong data. We've seen teams spend months optimizing neural networks when a well-designed decision tree with clean labels would have delivered better business results in weeks. Problem definition and data quality are 80% of success.

Supervised Learning

Learning with a teacher - like studying flashcards with answers on the back

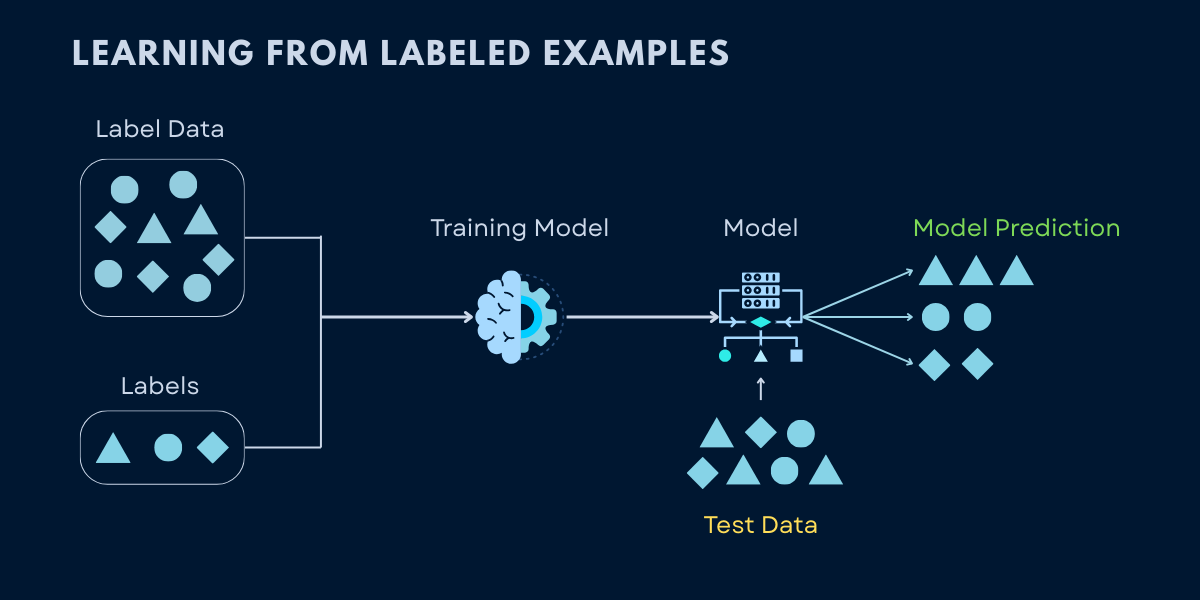

Supervised learning trains models on labeled datasets, where each input is paired with its correct output, so the algorithm can learn the function that maps inputs to outputs.

How It Works

- Human provides training data with correct answers (labels)

- Algorithm learns patterns by comparing its predictions to correct answers

- Model adjusts itself to minimize errors

- After training, predicts outputs for new, unseen inputs
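
The four steps above can be sketched as a tiny training loop. This is a minimal illustration, not a production recipe: the data is made up (it follows y = 2x + 1), and the function name `train`, the learning rate, and the epoch count are assumptions chosen for the example.

```python
# Labeled training data: inputs paired with their correct answers.
# The values are made up for illustration and follow y = 2x + 1.
inputs = [0.0, 1.0, 2.0, 3.0, 4.0]
labels = [1.0, 3.0, 5.0, 7.0, 9.0]


def train(inputs, labels, learning_rate=0.05, epochs=2000):
    """Fit y = weight * x + bias by gradient descent on mean squared error."""
    weight, bias = 0.0, 0.0
    n = len(inputs)
    for _ in range(epochs):
        # Step 1: predict with the current parameters.
        predictions = [weight * x + bias for x in inputs]
        # Step 2: compare predictions to the correct answers.
        errors = [p - y for p, y in zip(predictions, labels)]
        # Step 3: adjust the parameters in the direction that reduces error.
        grad_weight = 2 * sum(e * x for e, x in zip(errors, inputs)) / n
        grad_bias = 2 * sum(errors) / n
        weight -= learning_rate * grad_weight
        bias -= learning_rate * grad_bias
    return weight, bias


weight, bias = train(inputs, labels)
# Step 4: predict for an input the model never saw during training.
prediction = weight * 10.0 + bias
print(f"weight={weight:.3f} bias={bias:.3f} prediction={prediction:.2f}")
```

On this toy data the loop should recover a weight close to 2 and a bias close to 1, which is the pattern hidden in the labels.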

✓ Best For

- Problems where you have historical data with known outcomes

- Classification tasks (categorizing data into groups)

- Regression tasks (predicting numerical values)

- When accuracy and interpretability are critical

⚠ Limitations

- Requires large amounts of labeled data (expensive and time-consuming)

- Can only predict the outcomes defined by its labels; it won't discover categories or structure that nobody labeled

- May overfit to training data if not carefully managed

- Labeling bias in training data leads to biased predictions

How Supervised Learning Works: Training Models from Labeled Data

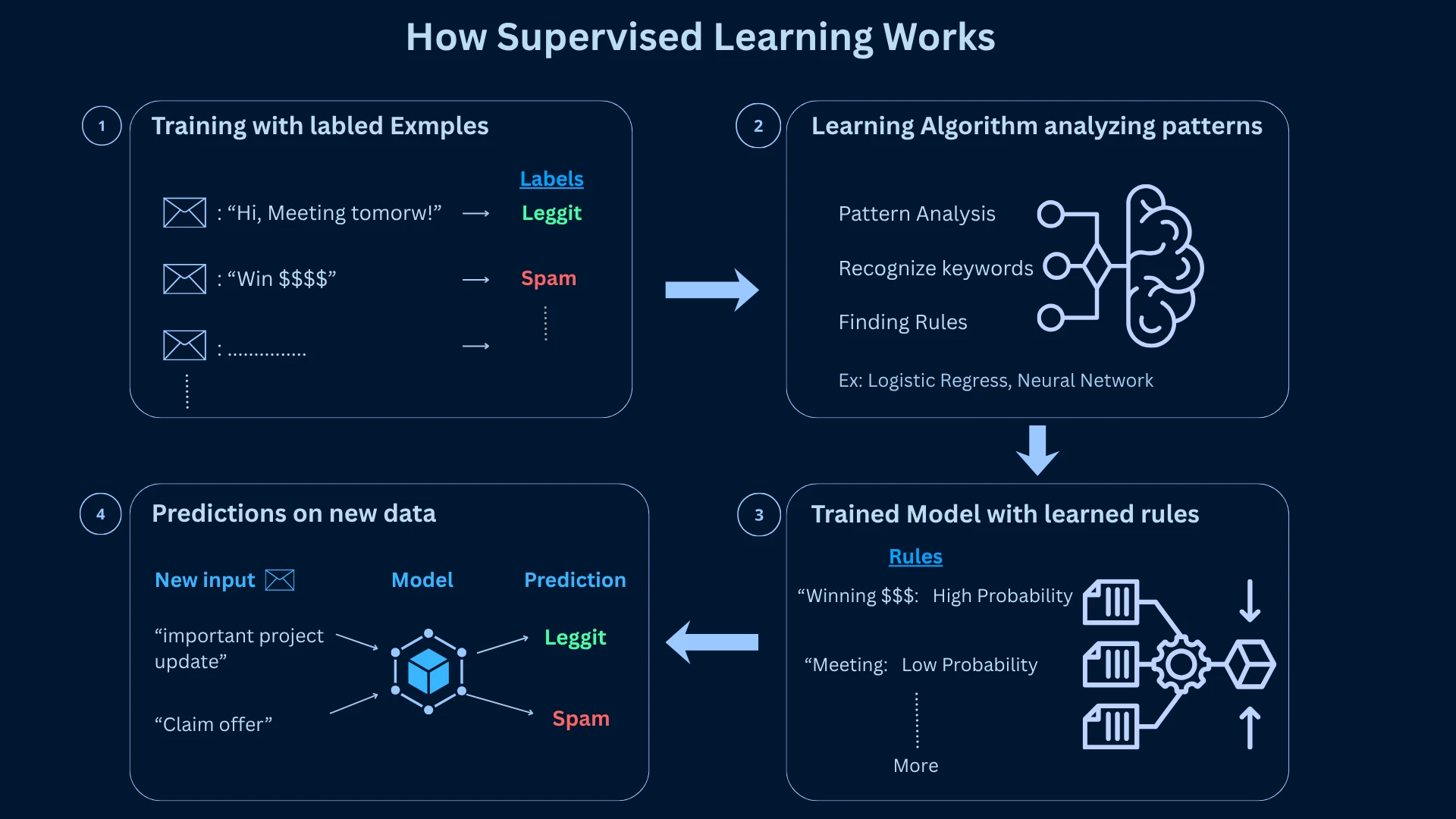

Supervised learning is machine learning's most intuitive paradigm: it learns patterns from historical examples where you already know the correct answer. Think of it as learning from a teacher who provides immediate feedback. You show the algorithm thousands of examples (emails labeled as spam or not spam), and it learns to recognize patterns that distinguish between categories or predict values.

This approach powers Gmail's spam detection, Tesla's object recognition, and PayPal's fraud prevention because it offers predictable performance with clear evaluation metrics. The "supervised" part means you supervise the learning process by providing labeled data: examples where you've already marked the correct answer.

The Supervised Learning Process: Data, Training, Prediction, and Evaluation

- Collect training data with known outputs (labeled examples) - for example, thousands of emails already marked as spam or not spam.

- Feed this data to a learning algorithm. It goes through every example and starts identifying patterns - what words, structures, or signals tend to appear in spam.

- The algorithm produces a trained model - essentially a set of rules or parameters it figured out on its own by studying those patterns.

- Use this trained model to make predictions on new, unseen data - emails it has never read before, but can now classify confidently.

The key to success is generalization: the model's ability to perform well on new data it hasn't seen during training. A model that simply memorizes the training data will fail in the real world. Google's spam filter doesn't just recognize the exact spam emails it was trained on; it generalizes to catch new spam tactics it's never encountered before.
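
To make the process concrete, here is a small sketch that trains a word-count spam classifier and then checks it on emails held out of training. It is an illustration under stated assumptions, not how any real spam filter works: the emails are invented, the labels "spam" and "ham" are placeholders, and the model is a basic naive Bayes classifier with Laplace smoothing written from scratch.

```python
import math
from collections import Counter

# Hypothetical labeled emails (made up for illustration): (text, label).
train_set = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("limited offer win cash now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("please review the project report", "ham"),
    ("lunch on monday with the team", "ham"),
]
# Held-out emails the model never sees during training.
test_set = [
    ("claim your free cash prize", "spam"),
    ("project meeting with the team", "ham"),
]


def train_naive_bayes(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, label_counts, vocabulary


def predict(model, text):
    """Return the label with the higher log-probability for this text."""
    word_counts, label_counts, vocabulary = model
    total_examples = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        score = math.log(label_counts[label] / total_examples)
        total_words = sum(word_counts[label].values())
        for word in text.split():
            # Laplace smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


def accuracy(model, examples):
    """Fraction of examples whose predicted label matches the true label."""
    correct = sum(predict(model, text) == label for text, label in examples)
    return correct / len(examples)


model = train_naive_bayes(train_set)
train_accuracy = accuracy(model, train_set)
test_accuracy = accuracy(model, test_set)
print(f"train accuracy={train_accuracy:.2f} held-out accuracy={test_accuracy:.2f}")
```

The held-out score is the one that matters: it estimates how the model behaves on emails it has never read. A large gap between the two numbers is a sign of memorization rather than generalization.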

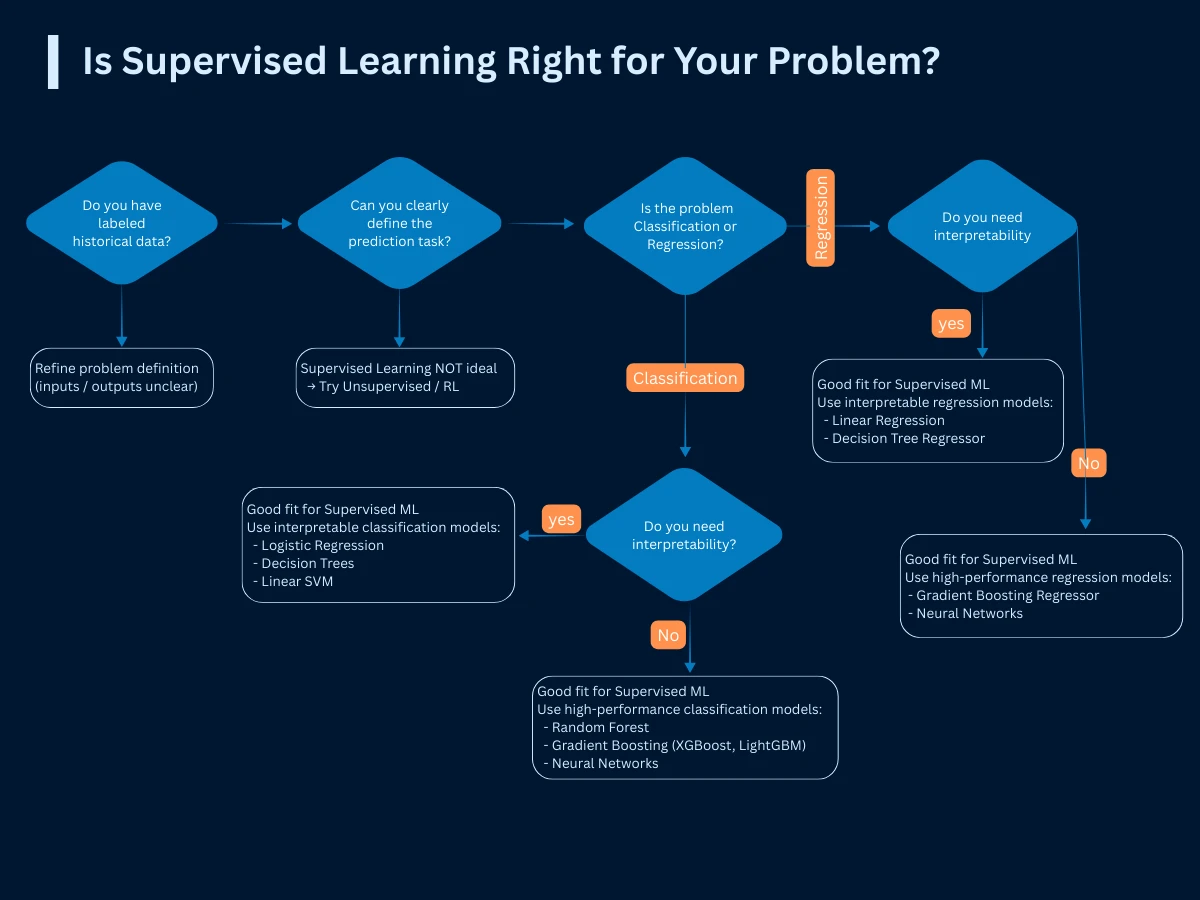

When to Use Supervised Learning: Identifying the Right Problems

Not every problem is suitable for supervised learning. The critical requirement is having labeled historical data where you know the correct answers. If you can't obtain labeled data or if your goal is to discover unknown patterns, supervised learning won't work; you'll need unsupervised or reinforcement learning instead.

| Business Question | Supervised Learning Fit | Problem Type | Success Example |
|---|---|---|---|
| Will this email be spam? | Perfect fit | Binary Classification | Gmail processes 1.5B emails daily with 99.9% accuracy |
| What will sales be next month? | Perfect fit | Regression | Amazon demand forecasting reduces inventory costs by 20% |
| Which customer will churn? | Perfect fit | Binary Classification | Netflix reduces churn by 25% with proactive targeting |
| How much should we price this item? | Perfect fit | Regression | Uber's dynamic pricing optimizes driver-rider matching |
| What's in this image? | Perfect fit | Multi-class Classification | Tesla Autopilot identifies objects with 99.99% safety record |
| What patterns exist in our data? | Poor fit | Use Unsupervised Learning | Unknown patterns require unsupervised approaches |

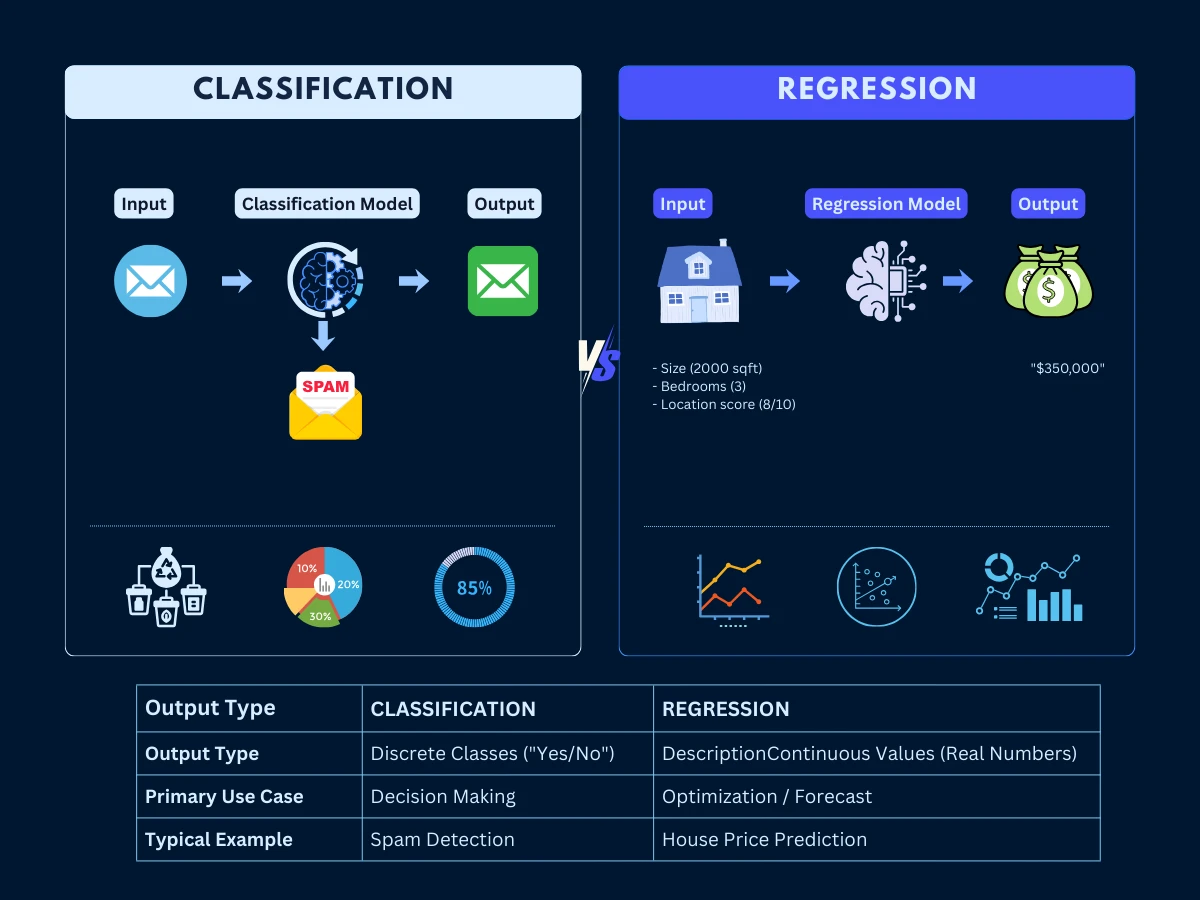

Classification vs Regression: Two Core Supervised Learning Approaches

Supervised learning splits into two fundamental categories based on what you're predicting: classification (predicting categories) and regression (predicting continuous numbers). This distinction determines your entire project approach, from data collection to evaluation metrics to business integration.

Classification: Predicting Categories

Classification answers "which category?" questions. Is this email spam or not spam? Will this customer buy or not buy? What object is in this image? The output is always a discrete category or class. Classification problems often generate immediate business value because they directly support yes/no decision-making processes.

Classification comes in three flavors: binary (two categories like spam/not spam), multi-class (multiple exclusive categories like cat/dog/bird), and multi-label (multiple non-exclusive categories like tagging a photo with "outdoor," "people," "sunset"). Gmail's spam detection is binary classification. Tesla's object recognition is multi-class classification: identifying whether an object is a car, pedestrian, cyclist, or traffic sign.
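
The difference between the three flavors shows up in how model scores become a final answer. The sketch below assumes a model has already produced a score between 0 and 1 for each label; the function names, the scores, and the 0.5 threshold are all hypothetical choices for illustration.

```python
def predict_binary(score, threshold=0.5):
    """Binary: one score decides between exactly two labels."""
    return "spam" if score >= threshold else "not spam"


def predict_multiclass(scores):
    """Multi-class: exactly one label wins, the highest-scoring class."""
    return max(scores, key=scores.get)


def predict_multilabel(scores, threshold=0.5):
    """Multi-label: every label whose score clears the threshold is kept."""
    return sorted(label for label, score in scores.items() if score >= threshold)


# Hypothetical model scores, made up for illustration.
email_label = predict_binary(0.92)
animal_label = predict_multiclass({"cat": 0.2, "dog": 0.7, "bird": 0.1})
photo_tags = predict_multilabel({"outdoor": 0.9, "people": 0.6, "sunset": 0.3})

print(email_label)   # one of two labels
print(animal_label)  # exactly one of several labels
print(photo_tags)    # zero or more labels
```

Binary and multi-class always return a single label, while multi-label can return none, one, or several, which is why the three need different evaluation metrics.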

Regression: Predicting Continuous Values

Regression answers "how much?" questions. What will the house price be? How many units will we sell next month? What's the expected lifetime value of this customer? The output is always a continuous number. Regression excels at forecasting, pricing, resource allocation, and performance prediction.

While less immediately intuitive than classification, regression often drives significant operational improvements and cost savings. Amazon's demand forecasting estimates how many units of each product to stock, which reduces inventory costs. Tesla's battery range prediction estimates how many miles remain, achieving 95% accuracy even under varying environmental conditions.
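
As a minimal sketch of a "how much?" prediction, the example below fits a straight line with ordinary least squares and measures its mean absolute error. The data (advertising spend versus units sold) is invented for illustration, and the helper names `fit_line` and `mean_absolute_error` are assumptions, not a reference to any particular library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def mean_absolute_error(actual, predicted):
    """Average size of the prediction error, in the target's own units."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical data: advertising spend (in $1000s) and units sold.
spend = [1.0, 2.0, 3.0, 4.0, 5.0]
units = [12.0, 19.0, 31.0, 42.0, 48.0]

slope, intercept = fit_line(spend, units)
fitted = [slope * x + intercept for x in spend]
mae = mean_absolute_error(units, fitted)
forecast = slope * 6.0 + intercept  # prediction for a spend level not in the data

print(f"slope={slope:.2f} intercept={intercept:.2f} mae={mae:.2f} forecast={forecast:.1f}")
```

Unlike a classifier, the output is a number on a continuous scale, so the error is measured as a distance (here, units sold) rather than as right or wrong.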

Classification vs Regression: Core Distinction

The fundamental division in supervised learning: discrete categories vs continuous values. Understanding this distinction is critical for choosing the right algorithm for your problem.

Classification

The Category Predictor

Predicts discrete categories or labels. Answers "which category?" questions with clear-cut decisions between predefined classes.

Regression

The Numeric Predictor

Predicts continuous numeric values. Answers "how much?" questions with precise quantitative outputs on a continuous scale.

Data Quality for Supervised Learning: Why Clean Labels Drive Model Accuracy

Data quality determines supervised learning success more than algorithm choice. Google's internal research shows that improving data quality by 10% delivers 3x more performance improvement than switching to a more sophisticated algorithm. Here's the framework used by industry leaders to ensure data excellence.

| Quality Dimension | Minimum Standard | Production Benchmark | Impact of Poor Quality |
|---|---|---|---|
| Label Accuracy | 95% correct labels | 98%+ for mission-critical systems | Direct performance degradation |
| Label Consistency | 90% inter-annotator agreement | 95%+ agreement with clear guidelines | Model confusion, poor generalization |
| Dataset Size | 1,000+ examples per class | 10,000+ examples for production | Overfitting, poor generalization |
| Feature Quality | < 5% missing values | < 1% missing values | Biased predictions, reduced accuracy |
| Temporal Consistency | No data leakage | Strict temporal validation | Inflated test scores, failure in production |
| Representation Balance | No class < 10% of data | Balanced across all segments | Bias against minority classes |

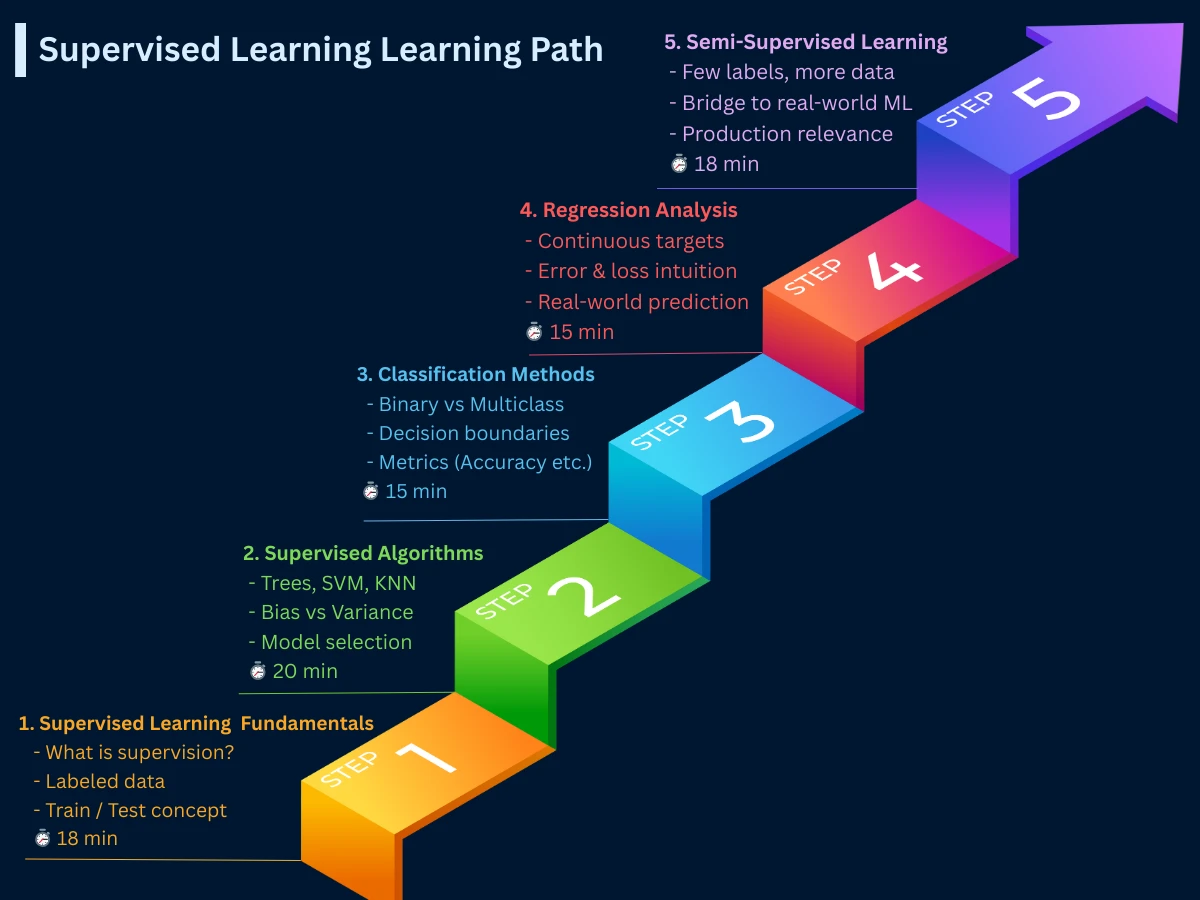

Supervised Learning Roadmap: Recommended Topics and Next Steps

Now that you understand supervised learning fundamentals, you're ready to dive deeper into specific topics. Here's the recommended learning path through our supervised learning section:

- Supervised Learning Fundamentals (this page, 18 min): Foundation concepts, classification vs regression, data quality

- Classification Methods (15 min): Binary, multi-class, multi-label classification, handling imbalanced datasets, evaluation metrics

- Regression Analysis (15 min): Linear, polynomial, non-linear regression, performance metrics, real-world applications

- Common Supervised Algorithms (20 min): Decision trees, random forests, SVM, neural networks, algorithm selection frameworks

- Semi-Supervised Learning (18 min): Combining labeled and unlabeled data for improved performance with limited labels

Supporting topics covered in other sections include model training processes, evaluation metrics, data preparation, and handling overfitting/underfitting. The complete supervised learning journey takes about 90 minutes and provides production-ready knowledge.

Common Supervised Learning Mistakes: Overfitting, Data Leakage, and Bias

Analysis of 1,500+ supervised learning projects reveals consistent failure patterns. Here are the top mistakes that derail projects and how to avoid them:

- Data Leakage: Using future information in training data. SOLUTION: Use strict time-based train/test splits and audit features carefully

- Label Noise: Inconsistent or incorrect labels causing 30-50% performance drops. SOLUTION: Use multiple annotators and conduct quality audits

- Sampling Bias: Training data that doesn't represent real-world distribution. SOLUTION: Use stratified sampling and test on diverse populations

- Ignoring Class Imbalance: Optimizing for overall accuracy when classes are imbalanced. SOLUTION: Use balanced metrics like F1-score and consider resampling

- Wrong Evaluation Metrics: Measuring technical metrics instead of business impact. SOLUTION: Align metrics with business objectives from day one
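
Two of these fixes are easy to show in code: a time-based split that guards against data leakage, and an F1-score that exposes what accuracy hides on imbalanced classes. This is a sketch under assumptions: the records, the `day` field, the cutoff, and the 95/5 class split are all invented for illustration.

```python
def time_based_split(records, cutoff):
    """Train on records before the cutoff day, test on records from it onward."""
    train = [r for r in records if r["day"] < cutoff]
    test = [r for r in records if r["day"] >= cutoff]
    return train, test


def accuracy(actual, predicted):
    """Fraction of predictions that match the true labels."""
    return sum(a == p for a, p in zip(actual, predicted)) / len(actual)


def f1_score(actual, predicted, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(actual, predicted))
    tp = sum(a == positive and p == positive for a, p in pairs)
    fp = sum(a != positive and p == positive for a, p in pairs)
    fn = sum(a == positive and p != positive for a, p in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical records ordered by day; the model never trains on the future.
records = [{"day": day, "amount": 10 * day} for day in range(1, 11)]
train, test = time_based_split(records, cutoff=8)

# Hypothetical imbalanced labels: 95 legitimate (0) and 5 fraud (1).
actual = [0] * 95 + [1] * 5
always_negative = [0] * 100                       # never flags fraud
useful_model = [0] * 93 + [1] * 2 + [1] * 4 + [0]  # catches 4 of 5, 2 false alarms

print(len(train), len(test))
print(accuracy(actual, always_negative), f1_score(actual, always_negative))
print(accuracy(actual, useful_model), round(f1_score(actual, useful_model), 3))
```

The model that never flags fraud still scores 95% accuracy, yet its F1-score is zero. That gap is why accuracy alone is a misleading target when one class is rare.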

Frequently Asked Questions

01 What's the difference between supervised and unsupervised learning?

Supervised learning uses labeled data (input-output pairs) to learn predictions, like teaching with answer keys. Unsupervised learning finds patterns in unlabeled data, like exploring without guidance. Use supervised when you know what you want to predict; use unsupervised when you want to discover hidden patterns.

02 How much labeled data do I need?

Plan on a minimum of 1,000 examples per class for basic models and 10,000+ for production systems. Deep learning trained from scratch typically needs 100,000+ examples. Start small with simple models, then scale up as you collect more data. Quality matters more than quantity: 1,000 high-quality labels beat 10,000 noisy ones.

03 How do I choose between classification and regression?

Ask: "Am I predicting categories or numbers?" Categories (spam/not spam, cat/dog) = classification. Continuous values (price, temperature, probability) = regression. If you need both, build separate models for each task.

04 What if I can't afford to label all my data?

Start with active learning: label only the most informative examples. Consider semi-supervised learning to leverage unlabeled data. Use transfer learning with pre-trained models. Or start with a small labeled dataset and expand based on business value.
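
One common way to pick "the most informative examples" is uncertainty sampling: send the items the current model is least sure about to your annotators first. The sketch below assumes you already have a model that returns a spam probability; `select_for_labeling`, the `predict_proba` callable, and the probabilities themselves are hypothetical stand-ins.

```python
def select_for_labeling(unlabeled, predict_proba, budget):
    """Pick the `budget` items whose predicted probability is closest to 0.5."""
    ranked = sorted(unlabeled, key=lambda item: abs(predict_proba(item) - 0.5))
    return ranked[:budget]


# Hypothetical spam probabilities from a model trained on a small labeled set.
model_scores = {
    "email_a": 0.97,  # confidently spam
    "email_b": 0.52,  # almost a coin flip
    "email_c": 0.08,  # confidently not spam
    "email_d": 0.41,
    "email_e": 0.66,
}

to_label = select_for_labeling(
    unlabeled=list(model_scores),
    predict_proba=model_scores.get,
    budget=2,
)
print(to_label)
```

Labeling the near-coin-flip emails teaches the model more per label than confirming answers it already gets right, which is how a limited labeling budget stretches further.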

05 How long does it take to build a supervised learning system?

Typical timeline: 12-26 weeks. Problem definition (2-4 weeks), data collection and labeling (4-8 weeks), model development (2-6 weeks), evaluation (2-4 weeks), deployment (2-4 weeks). Simple projects can be faster; complex systems take longer.

06 Do I need a PhD to implement supervised learning?

No! Modern libraries (scikit-learn, TensorFlow) make implementation accessible. You need: (1) problem formulation skills, (2) data quality understanding, (3) evaluation metric knowledge, (4) basic Python. Advanced math helps but isn't required for most applications.

07 When should I NOT use supervised learning?

Avoid supervised learning when: (1) you don't have labeled data and can't obtain it, (2) the problem is exploratory (finding patterns), (3) real-time adaptation is critical (consider reinforcement learning), (4) labeling is subjective and inconsistent, (5) the cost of labeling exceeds business value.

08 How do I know if my model is good enough?

Define success metrics aligned with business goals BEFORE training. Compare against baselines (random, simple rules, current process). Test on truly unseen data. Validate with domain experts. Pilot in production with monitoring. Good enough = meets business requirements reliably.
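
The baseline comparison can be as simple as the sketch below, which checks a model against "always predict the most common outcome" on held-out data. The churn labels, the model's predictions, and the `required_lift` threshold are invented for illustration; in practice the threshold comes from your business requirements.

```python
from collections import Counter


def majority_baseline(train_labels, n_predictions):
    """Predict the most common training label for every test example."""
    most_common_label = Counter(train_labels).most_common(1)[0][0]
    return [most_common_label] * n_predictions


def accuracy(actual, predicted):
    """Fraction of predictions that match the true labels."""
    return sum(a == p for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical churn data, made up for illustration.
train_labels = ["stay"] * 8 + ["churn"] * 2
test_actual = ["stay", "stay", "churn", "stay", "churn",
               "stay", "stay", "stay", "churn", "stay"]
model_predictions = ["stay", "stay", "churn", "stay", "churn",
                     "stay", "churn", "stay", "stay", "stay"]

baseline_predictions = majority_baseline(train_labels, len(test_actual))
baseline_accuracy = accuracy(test_actual, baseline_predictions)
model_accuracy = accuracy(test_actual, model_predictions)

# Hypothetical requirement, agreed before training: beat the baseline by 5 points.
required_lift = 0.05
good_enough = model_accuracy - baseline_accuracy >= required_lift
print(f"baseline={baseline_accuracy:.2f} model={model_accuracy:.2f} good_enough={good_enough}")
```

If a model cannot clearly beat a baseline this simple on unseen data, more tuning is rarely the answer; revisit the problem definition and the labels first.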

The companies that dominate with supervised learning don't have better algorithms; they have better problem formulation, cleaner data, and tighter integration with business processes. Master these fundamentals first, then algorithm optimization becomes straightforward.

Next Steps: Applying Supervised Learning to Real Problems

You now understand supervised learning fundamentals. Your next step is exploring classification or regression in depth, depending on your problem type.