Dimensionality Reduction

Transform high-dimensional data into interpretable lower dimensions while preserving essential information

Machine learning datasets often have hundreds or thousands of features - images have millions of pixels, genetic data has tens of thousands of genes, text documents have vocabularies of 50,000+ words. High-dimensional data is computationally expensive, hard to visualize, and suffers from the curse of dimensionality where distance metrics break down. Dimensionality reduction solves this by transforming data to fewer dimensions while preserving essential information. When Netflix recommends movies using 50 latent factors instead of ratings for 20,000 films, or when researchers visualize 30,000-gene cancer data in 2D - that's dimensionality reduction making the complex comprehensible.

What Makes This Tutorial Different

Dimensionality reduction is not just a preprocessing step - it's a lens for understanding your data. When you see high-dimensional cancer data projected to 2D and patient groups separate clearly, you're not just visualizing - you're discovering. Some of our most important findings in genomics came from dimensionality reduction revealing structure we didn't know existed. The curse of dimensionality is real, but these techniques turn that curse into opportunity.

What Is Dimensionality Reduction?

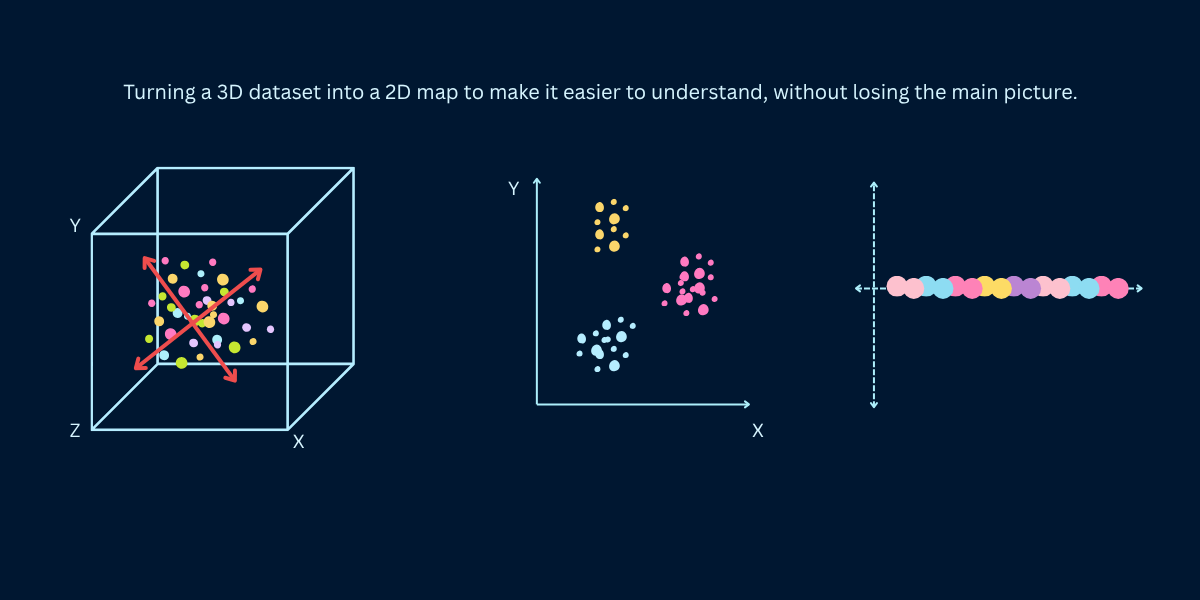

Dimensionality reduction transforms high-dimensional data (many features) into lower-dimensional data (fewer features) while retaining as much meaningful information as possible. Instead of representing each data point with 1,000 features, you might represent it with 10 or 50 features that capture the essential patterns. The goal is compression with minimal information loss - find a compact representation that preserves the data's structure, relationships, and variance.

Think of a high-resolution photograph reduced to a thumbnail. The thumbnail has far fewer pixels (lower dimension) but still conveys the image's essential content. You lose fine details but preserve the overall structure - you can still recognize faces, objects, and scenes. Good dimensionality reduction works similarly: compress data dramatically while keeping what matters for your task (classification, clustering, visualization).

Four Main Purposes

- Visualization: Reduce to 2D or 3D to visualize high-dimensional data. You can't plot 1,000-dimensional data directly, but you can project it to 2D and see clusters, outliers, and patterns. This is exploratory data analysis - understanding your data's structure before modeling.

- Feature extraction and preprocessing: Reduce dimensions before feeding data to machine learning algorithms. Benefits: faster training, less memory, reduced overfitting, removal of noise and redundancy. Transform 10,000 sparse features into 100 dense meaningful features.

- Noise reduction: High-dimensional data often has noisy features. Dimensionality reduction focuses on directions of high variance (signal) while discarding low-variance directions (noise). The reduced representation is often cleaner than the original.

- Interpretability: Sometimes reduced dimensions have semantic meaning. In text analysis, latent dimensions might represent topics. In genetics, principal components might correspond to biological pathways. Lower dimensions can be more interpretable than raw features.

Why Dimensionality Reduction Matters

The Curse of Dimensionality

As you add more dimensions to your data, strange things happen that break machine learning algorithms. Imagine going from a flat map (2D) to a 3D cube to a 100D space - the rules change in surprising ways. Points drift apart, your data becomes lonely in vast empty space, and algorithms struggle to find patterns. This problem is so common it has a name: the curse of dimensionality. Understanding it shows why reducing dimensions helps so much.

- Everything becomes equally far apart: In high dimensions, all your data points end up roughly the same distance from each other. It's like everyone in a city suddenly living the same distance from downtown - the concept of 'near' and 'far' stops being useful. Algorithms that rely on finding nearest neighbors or measuring distances lose most of their discriminating power.

- Your data becomes tiny and isolated: Think about filling a line with 10 points, then filling a square with 10 points - they're more spread out. Now fill a cube, then a 100-dimensional space. Those same 10 points become incredibly sparse, floating alone in massive empty space. Even a million data points feel small in high dimensions.

- Everything runs slower and costs more: More dimensions mean more calculations, more memory, more waiting. Every distance calculation and every matrix operation takes longer. For algorithms whose cost grows linearly with the number of features, reducing from 10,000 dimensions to 100 cuts that part of the work by roughly 100x, which can turn hours of training into minutes.

- Models memorize instead of learn: With 10,000 features but only 1,000 examples, your model has more knobs to turn than examples to learn from. It starts memorizing the training data perfectly, including all the noise and errors, but fails on new data. Fewer dimensions force the model to learn real patterns.
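The first effect in the list above, distances becoming nearly equal, can be checked numerically. The sketch below is an illustration under assumed settings (uniform random points in a unit hypercube, 500 points, and a hypothetical helper named `relative_contrast`), not a benchmark. It measures how much farther the farthest point is than the nearest one, relative to the nearest distance.

```python
import numpy as np

def relative_contrast(n_points, n_dims, seed=0):
    """(farthest - nearest) / nearest distance from a random query point."""
    rng = np.random.default_rng(seed)
    points = rng.random((n_points, n_dims))   # uniform in the unit hypercube
    query = rng.random(n_dims)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()

contrasts = {d: relative_contrast(500, d) for d in (2, 10, 100, 1000)}
for n_dims, contrast in contrasts.items():
    print(f"{n_dims:>5} dims: relative contrast = {contrast:.2f}")
```

A ratio that shrinks toward zero as the dimension grows means the nearest and farthest neighbors are almost equally far away, which is why distance-based methods struggle.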

When More Features Hurt Performance

| Problem | Low Dimensions (2-10) | Medium Dimensions (10-100) | High Dimensions (100-10000) |
|---|---|---|---|
| Training Speed | Fast (seconds) | Medium (minutes) | Slow (hours to days) |
| Memory Requirements | Minimal (MB) | Moderate (GB) | Large (10s-100s GB) |
| Samples Needed | Hundreds | Thousands | Millions |
| Distance Metrics | Meaningful | Somewhat meaningful | Nearly meaningless |
| Overfitting Risk | Low | Medium | High |
| Visualization | Direct plotting | 3D or pairs plots | Impossible without reduction |

Linear vs Non-Linear Dimensionality Reduction

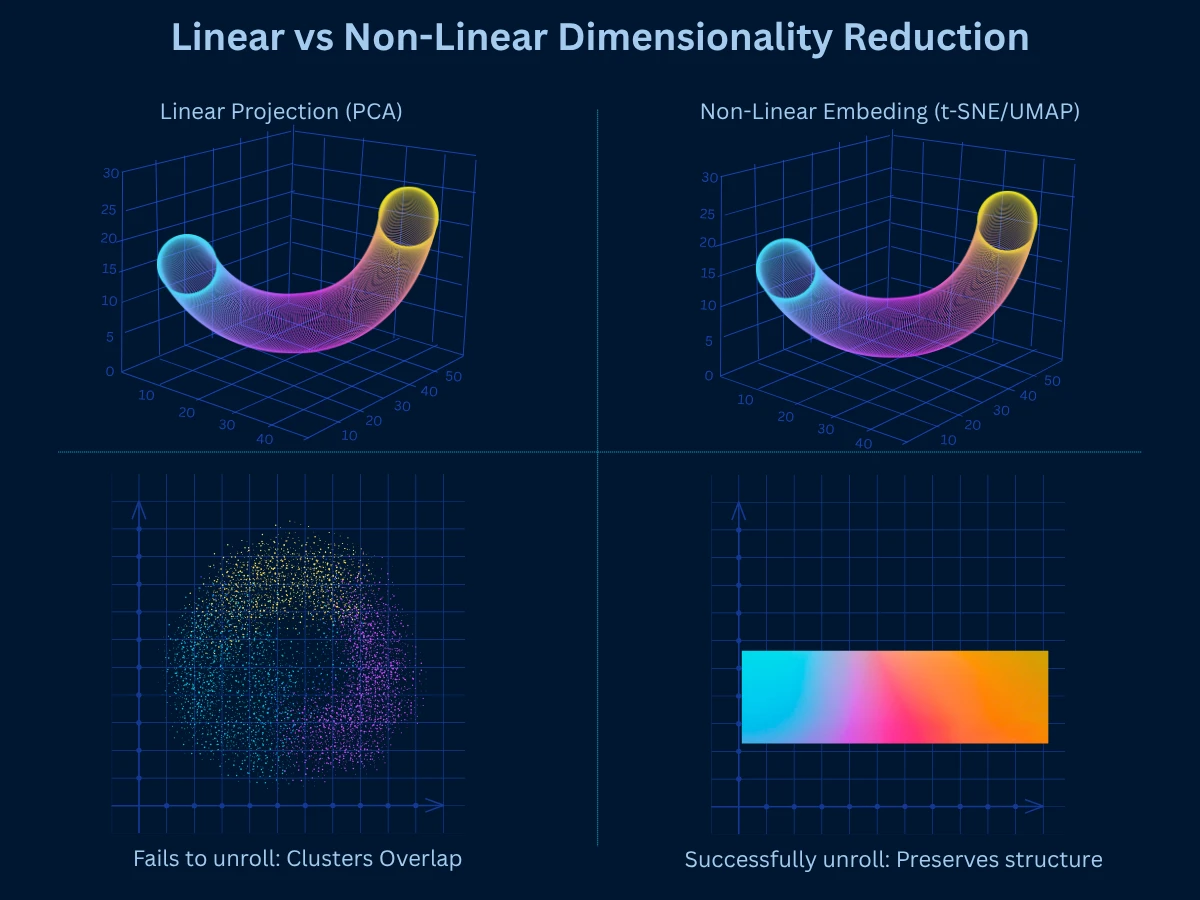

Dimensionality reduction methods fall into two categories based on how they transform data: linear methods assume data lies on or near a linear subspace, while non-linear methods can capture curved manifolds and complex relationships. Understanding this distinction guides your choice of technique.

| Aspect | Linear Methods (PCA, LDA, SVD) | Non-Linear Methods (t-SNE, UMAP, Autoencoders) |
|---|---|---|
| Assumption | Data lies on linear subspace | Data lies on non-linear manifold |
| Relationships Captured | Linear correlations only | Complex non-linear relationships |
| Speed | Fast (even on large datasets) | Slower (except UMAP) |
| Scalability | Excellent (millions of samples) | Limited (thousands to hundreds of thousands) |
| Interpretability | High (components have clear meaning) | Low (embedding dimensions are abstract) |
| Reversibility | Can reconstruct original data | Usually one-way transformation |
| Best For | Preprocessing, noise reduction, feature extraction | Visualization, discovering non-linear patterns |
| Example | Reduce 1000 features to 50 before classification | Visualize 10,000-gene dataset in 2D |
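A small experiment can make the linear versus non-linear distinction concrete. The sketch below uses a toy dataset chosen for illustration (points on a unit circle, which is a curved one-dimensional manifold in 2D). It compares the best single linear direction with a single non-linear coordinate, the angle. The variable names are assumptions for this example only.

```python
import numpy as np

# Points evenly spaced on a unit circle: intrinsically 1D, but curved
theta = np.linspace(0.0, 2.0 * np.pi, 400, endpoint=False)
X = np.column_stack([np.cos(theta), np.sin(theta)])

# Linear view: share of variance captured by the best single direction
eigvals = np.linalg.eigvalsh(np.cov(X, rowvar=False))[::-1]
pc1_ratio = eigvals[0] / eigvals.sum()
print(f"Variance explained by one linear component: {pc1_ratio:.2f}")

# Non-linear view: one coordinate (the angle) describes every point
angle = np.arctan2(X[:, 1], X[:, 0])
X_rebuilt = np.column_stack([np.cos(angle), np.sin(angle)])
max_error = np.abs(X - X_rebuilt).max()
print(f"Max error when rebuilding from the angle alone: {max_error:.1e}")
```

One linear component keeps only about half of the variance here, while one non-linear coordinate recovers the data almost exactly. That gap is what non-linear methods try to exploit.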

Start Linear, Go Non-Linear If Needed

PCA: Principal Component Analysis

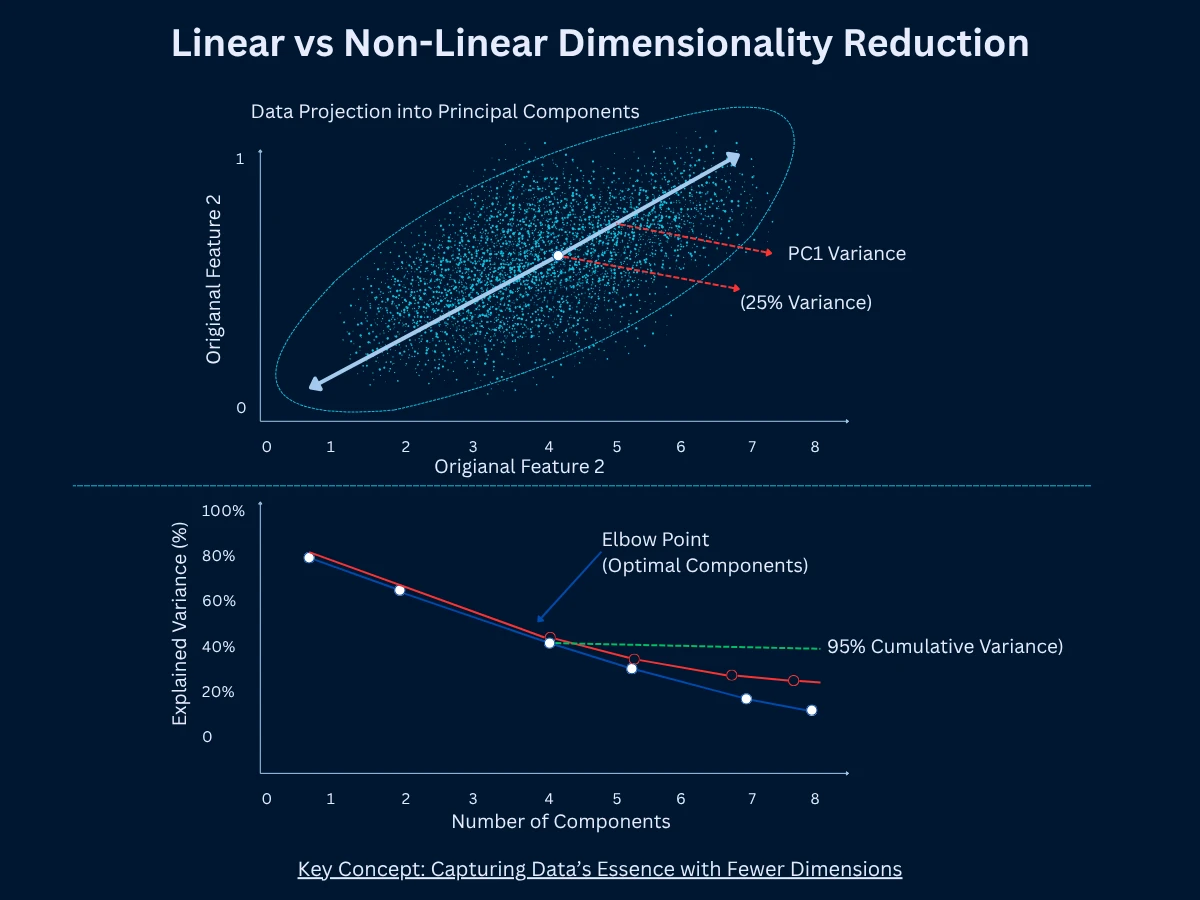

PCA is the most popular way to reduce dimensions. It finds new directions called principal components that capture maximum variance (spread) in your data. Think of variance as how much your data spreads out - high variance means data points are scattered widely. PCA draws the first component through the direction of greatest variance, then the second component orthogonal (at a right angle) to the first, capturing the next most variance, and so on. You keep only the top K components that explain most of the variation. PCA is linear (works with straight-line relationships), fast, and deterministic (always gives the same result).

How PCA Works

Standardize the Data

Scale all features to have mean of 0 and standard deviation of 1. This ensures no feature dominates just because it has larger numbers. Example: if age ranges 0-100 but income ranges 0-1000000, without standardization, income variations would overwhelm age. Standardization puts them on equal footing.

Compute the Covariance Matrix

Calculate how each feature varies with every other feature. Covariance measures whether features move together (positive covariance) or opposite directions (negative covariance). High covariance indicates redundancy - features contain overlapping information that PCA can compress into fewer components.

Calculate Eigenvectors and Eigenvalues

Find the principal directions (eigenvectors) and their importance (eigenvalues). Eigenvectors are the new axes pointing in directions of maximum variance. Eigenvalues are numbers telling you how much variance each direction captures. The largest eigenvalue corresponds to the first principal component (most important direction).

Sort Components by Eigenvalues

Rank eigenvectors by their eigenvalues from largest to smallest. This orders principal components by importance - how much variance each explains. The top components typically capture most variation, while lower components capture noise and minor details you can discard.

Select Top K Components

Choose the first K principal components that explain sufficient variance. Common practice: keep components explaining 95% of total variance. If 3 components explain 95% of variance in 100-dimensional data, you reduce from 100 to 3 dimensions while retaining 95% of the variance.

Transform Data to New Basis

Project original data onto the selected principal components. Each data point gets new coordinates in the reduced space defined by the K components. This transformation preserves maximum variance from the original space in fewer dimensions - the core goal of PCA.
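The six steps above can be sketched directly in NumPy. This is a minimal illustration on synthetic data (the data, the 95% threshold, and all variable names are assumptions for the example). In practice a library implementation such as scikit-learn's `PCA` is the usual choice.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): 2 hidden factors seen through 5 features
latent = rng.normal(size=(200, 2))
X = latent @ rng.normal(size=(2, 5)) + 0.05 * rng.normal(size=(200, 5))

# 1. Standardize: zero mean, unit standard deviation per feature
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# 2. Covariance matrix of the features
cov = np.cov(X_std, rowvar=False)

# 3. Eigenvectors (directions) and eigenvalues (variance along each direction)
eigvals, eigvecs = np.linalg.eigh(cov)

# 4. Sort from largest to smallest eigenvalue
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# 5. Keep the smallest K whose cumulative explained variance reaches 95%
explained_ratio = eigvals / eigvals.sum()
k = int(np.searchsorted(np.cumsum(explained_ratio), 0.95)) + 1

# 6. Project the standardized data onto the top K components
X_reduced = X_std @ eigvecs[:, :k]

print(f"Explained variance ratios: {np.round(explained_ratio, 3)}")
print(f"Kept {k} of {X.shape[1]} dimensions, reduced shape: {X_reduced.shape}")
```

`np.linalg.eigh` is used because a covariance matrix is symmetric. It returns eigenvalues in ascending order, which is why the explicit sort in step 4 is needed.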

The beauty of PCA is that it's deterministic (always same result), fast (scales to millions of samples), and the components are ordered by importance. You can plot explained variance to decide how many components to keep. If 5 components explain 95% of variance, you've reduced dimensionality dramatically with minimal information loss.

- How it works: Find directions of maximum variance, project data onto top K directions, producing K-dimensional representation

- Strengths: Very fast, scales to millions of samples, deterministic results, interpretable components, can reconstruct original data, removes correlated features

- Limitations: Only captures linear relationships, assumes high variance equals importance, sensitive to feature scaling, cannot capture curved manifolds

- Best for: Preprocessing before ML algorithms, noise reduction, feature extraction, data compression, when linear structure exists

- Common uses: Image compression, face recognition (eigenfaces), gene expression analysis, financial portfolio analysis, general preprocessing

Eigenfaces: PCA in Action

```python
from sklearn.decomposition import PCA
from sklearn.datasets import load_digits
from sklearn.preprocessing import StandardScaler
import numpy as np

# Load digits dataset (64 features - 8x8 pixel images)
digits = load_digits()
X = digits.data  # 1797 samples, 64 features
y = digits.target

# Standardize features (important for PCA)
scaler = StandardScaler()
X_scaled = scaler.fit_transform(X)

# Apply PCA
pca = PCA(n_components=20)  # Reduce 64 -> 20 dimensions
X_pca = pca.fit_transform(X_scaled)

print(f"Original shape: {X.shape}")  # Output: (1797, 64)
print(f"Reduced shape: {X_pca.shape}")  # Output: (1797, 20)

# Analyze explained variance
explained_var = pca.explained_variance_ratio_
cumulative_var = np.cumsum(explained_var)

print("\nExplained variance by component:")
for i in range(5):
    print(f"  PC{i+1}: {explained_var[i]:.3f} ({explained_var[i]*100:.1f}%)")

# Example output (approximate; exact values can differ slightly):
#   PC1: 0.122 (12.2%)  <- First component captures about 12% of variance
#   PC2: 0.093 (9.3%)
#   PC3: 0.084 (8.4%)
#   PC4: 0.066 (6.6%)
#   PC5: 0.050 (5.0%)

# Report cumulative variance from the fitted model rather than fixed numbers
print("\nCumulative variance explained:")
for k in (5, 10, 20):
    print(f"  {k:2d} components: {cumulative_var[k-1]:.3f} ({cumulative_var[k-1]*100:.0f}%)")

retained = cumulative_var[-1]                 # variance kept by all 20 components
reduction = 1 - X_pca.shape[1] / X.shape[1]   # fraction of dimensions removed

print(f"\nResult: {X_pca.shape[1]} components capture {retained*100:.0f}% of variance from {X.shape[1]} features!")
print(f"Dimensionality reduced by {reduction*100:.0f}% with {(1 - retained)*100:.0f}% of variance discarded")
```

t-SNE: Visualization Specialist

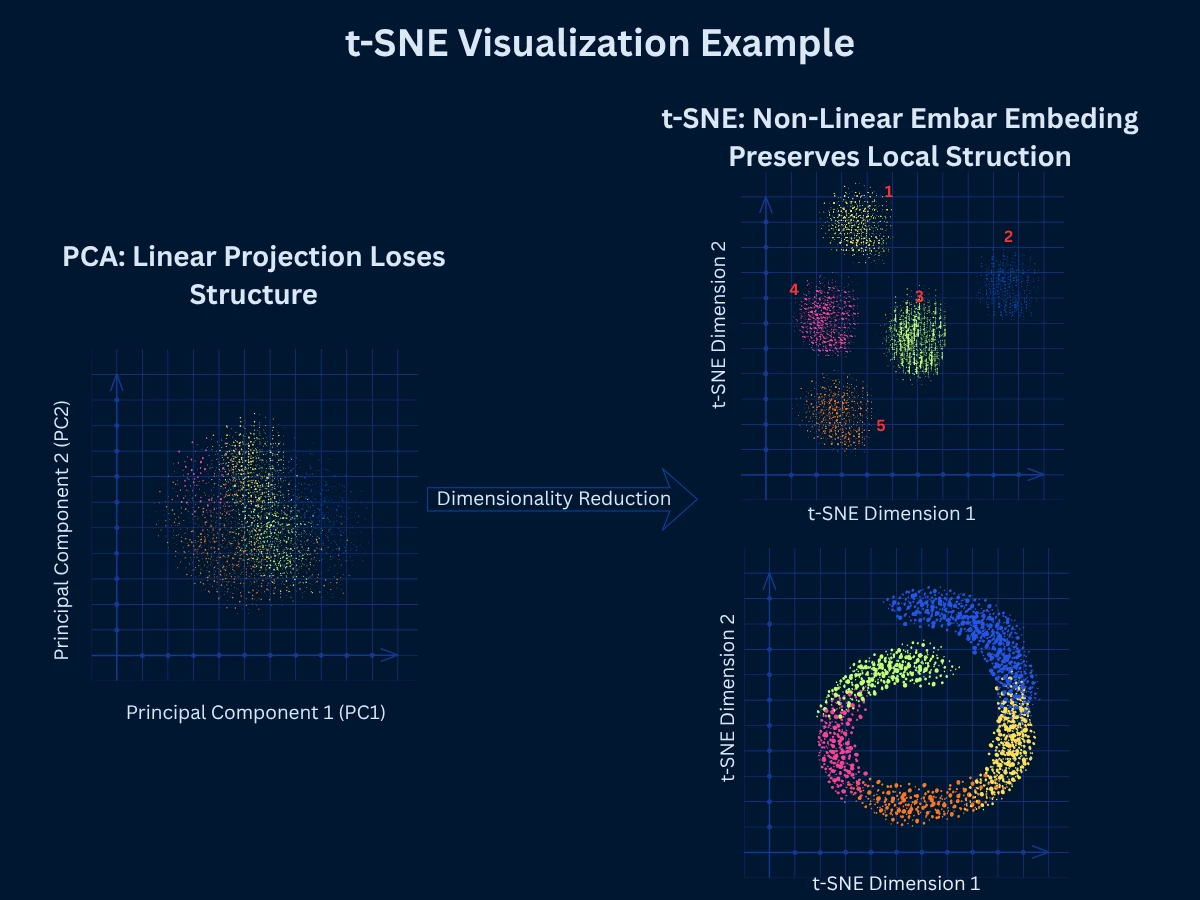

t-SNE (t-Distributed Stochastic Neighbor Embedding) is a non-linear technique specifically designed for visualizing high-dimensional data in 2D or 3D. It excels at preserving local structure - points that are close in high-dimensional space remain close in the low-dimensional embedding. This creates beautiful visualizations where clusters are clearly separated, making t-SNE the go-to choice for exploratory data visualization in fields from genomics to NLP.

Think of t-SNE like drawing a neighborhood map. If your house is next to Sarah's house and far from the grocery store in real life, the map keeps your house next to Sarah's (local structure preserved). But the map might shrink or expand the distance to the grocery store to fit the page - that's okay because you mainly care about seeing which houses are neighbors. Similarly, t-SNE ensures similar data points stay clustered together in the visualization, even if it means slightly distorting how far apart different clusters appear.

Unlike PCA which preserves global variance (overall spread), t-SNE focuses on preserving neighborhoods. It converts distances to probabilities - the probability that point A picks point B as its neighbor should be similar in both high and low dimensions. The algorithm minimizes the difference between these probability distributions using gradient descent. This emphasis on local structure produces clear cluster separation but can distort global structure (distances between clusters are less meaningful).
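The probability-matching idea can be sketched in a few lines of NumPy. This is a simplified illustration, not the full algorithm. It assumes one fixed Gaussian bandwidth `sigma` for every point (real t-SNE tunes a bandwidth per point from the perplexity) and it only evaluates the objective, a Kullback-Leibler divergence, without running gradient descent. All function names are hypothetical.

```python
import numpy as np

def squared_distances(A):
    diff = A[:, None, :] - A[None, :, :]
    return np.sum(diff ** 2, axis=-1)

def high_dim_similarities(X, sigma=1.0):
    """Gaussian neighbor probabilities, symmetrized so they sum to 1."""
    affinities = np.exp(-squared_distances(X) / (2.0 * sigma ** 2))
    np.fill_diagonal(affinities, 0.0)
    conditional = affinities / affinities.sum(axis=1, keepdims=True)
    return (conditional + conditional.T) / (2.0 * len(X))

def low_dim_similarities(Y):
    """Student-t (heavy-tailed) similarities in the embedding."""
    weights = 1.0 / (1.0 + squared_distances(Y))
    np.fill_diagonal(weights, 0.0)
    return weights / weights.sum()

def kl_divergence(P, Q):
    mask = P > 0
    return float(np.sum(P[mask] * np.log(P[mask] / Q[mask])))

rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.1, size=(10, 5)),   # cluster A
               rng.normal(3.0, 0.1, size=(10, 5))])  # cluster B
P = high_dim_similarities(X)

Y_good = X[:, :2]                                     # keeps neighbors together
mixed_order = np.arange(20).reshape(2, 10).T.ravel()  # interleaves the clusters
Y_bad = Y_good[mixed_order]                           # splits neighbors apart

kl_good = kl_divergence(P, low_dim_similarities(Y_good))
kl_bad = kl_divergence(P, low_dim_similarities(Y_bad))
print(f"KL for neighbor-preserving layout: {kl_good:.3f}")
print(f"KL for neighbor-breaking layout:   {kl_bad:.3f}")
```

The layout that keeps high-dimensional neighbors together gets the lower divergence. t-SNE moves the embedded points to reduce exactly this kind of mismatch.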

- How it works: Compute pairwise similarities in high dimensions, initialize random low-dimensional embedding, iteratively adjust embedding to preserve neighborhood probabilities

- Strengths: Excellent 2D/3D visualizations, preserves local structure beautifully, reveals clusters clearly, handles non-linear relationships, works with various distance metrics

- Limitations: Slow on large datasets (> 10,000 samples), non-deterministic (different runs give different results unless the random seed is fixed), cannot embed new data (standard t-SNE learns no mapping that can be applied to unseen points), distorts global structure and distances

- Best for: Visualizing high-dimensional data in 2D/3D, exploring cluster structure, presentations and publications, small to medium datasets

- Common uses: Single-cell RNA sequencing visualization, word embedding visualization, image dataset exploration, high-dimensional data exploration

t-SNE Interpretation Pitfalls

```python
from sklearn.manifold import TSNE
from sklearn.datasets import load_digits
from sklearn.preprocessing import StandardScaler
import time

# Load digits dataset (64 features, 10 classes)
digits = load_digits()
X = digits.data[:1000]  # Use subset for speed (t-SNE is slow)
y = digits.target[:1000]

# Standardize
scaler = StandardScaler()
X_scaled = scaler.fit_transform(X)

# Apply t-SNE with default perplexity
print("Running t-SNE (runtime depends on hardware)...")
start = time.time()

tsne = TSNE(
    n_components=2,   # Reduce to 2D for visualization
    perplexity=30,    # Balance local vs global structure
    random_state=42,  # For reproducibility
    # The iteration count is left at its default (1000). The keyword is
    # max_iter in scikit-learn >= 1.5 and n_iter in older releases.
)
X_tsne = tsne.fit_transform(X_scaled)

elapsed = time.time() - start
print(f"t-SNE completed in {elapsed:.1f} seconds")

print(f"\nOriginal shape: {X.shape}")  # (1000, 64)
print(f"Embedded shape: {X_tsne.shape}")  # (1000, 2)

# Analyze cluster separation by class (first five digit classes)
print("\nCluster separation (per digit class):")
for digit in range(5):
    class_points = X_tsne[y == digit]
    centroid = class_points.mean(axis=0)
    print(f"  Digit {digit}: centroid at ({centroid[0]:.1f}, {centroid[1]:.1f})")

# Illustrative output (coordinates differ between settings and library versions):
#   Digit 0: centroid at (-15.2, 8.3)
#   Digit 1: centroid at (22.1, -5.7)
#   Digit 2: centroid at (-8.4, -12.1)
#   ...

print("\nResult: t-SNE creates visually separated 2D clusters")
print(f"Perfect for visualization, but took {elapsed:.1f}s for 1000 samples")
```

UMAP: Modern Scalable Embedding

UMAP (Uniform Manifold Approximation and Projection) is a modern alternative to t-SNE that's faster, more scalable, and better at preserving global structure while maintaining t-SNE's excellent local structure preservation. UMAP uses manifold learning and topological data analysis to build a high-dimensional graph representation, then optimizes a low-dimensional layout. It has become the preferred choice for many applications that previously used t-SNE.

Extending the map analogy: t-SNE is like a detailed city map that perfectly shows which houses are neighbors but might not accurately show distances between different neighborhoods. UMAP is like a more balanced map - it still keeps neighbors together, but also tries to preserve the actual distances between neighborhoods. If downtown is twice as far from the suburbs as the suburbs are from the airport, UMAP attempts to maintain those relative distances in the visualization. This makes UMAP better when you need to understand relationships between clusters, not just within them.

UMAP's key advantages over t-SNE are speed (often cited as 10-100x faster on large datasets, though the gap depends on implementation and data size), scalability (handles millions of points), better global structure preservation (distances between clusters tend to be more meaningful), and the ability to transform new data. These improvements make UMAP practical for interactive exploration and production systems, not just static visualizations. However, it requires more parameter tuning and is less established than t-SNE.

- How it works: Build fuzzy topological representation of high-dimensional data, optimize low-dimensional graph layout to match high-dimensional topology

- Strengths: Much faster than t-SNE, scales to millions of samples, preserves both local and global structure, can transform new data, flexible distance metrics

- Limitations: More parameters to tune (n_neighbors, min_dist), less established than t-SNE, harder to interpret parameters, implementation differences across libraries

- Best for: Large-scale visualization, when you need both local and global structure, production systems requiring new data transformation, modern data science workflows

- Common uses: Single-cell genomics, large image datasets, text embeddings, interactive data exploration, preprocessing for clustering

| Aspect | t-SNE | UMAP |
|---|---|---|
| Speed on 10k samples | Minutes | Seconds |
| Speed on 1M samples | Impractical | Minutes to hours |
| Local Structure | Excellent | Excellent |
| Global Structure | Poor (distorted) | Good (preserved) |
| Deterministic | No (random init) | No (but more stable) |
| Transform New Data | No | Yes |
| Main Parameter | Perplexity (5-50) | n_neighbors (5-100) |
| Typical Use | Static visualizations, publications | Interactive exploration, production |
| Maturity | Established (2008) | Newer (2018) |

When to Use UMAP vs t-SNE

```python
from sklearn.manifold import TSNE
from umap import UMAP  # provided by the umap-learn package
from sklearn.datasets import load_digits
from sklearn.preprocessing import StandardScaler
import time

# Load digits dataset
digits = load_digits()
X = digits.data[:1500]  # 1500 samples
y = digits.target[:1500]

# Standardize
scaler = StandardScaler()
X_scaled = scaler.fit_transform(X)

# Run t-SNE
print("Running t-SNE...")
start_tsne = time.time()
tsne = TSNE(n_components=2, random_state=42)
X_tsne = tsne.fit_transform(X_scaled)
time_tsne = time.time() - start_tsne

# Run UMAP
print("Running UMAP...")
start_umap = time.time()
umap_model = UMAP(n_components=2, random_state=42)
X_umap = umap_model.fit_transform(X_scaled)
time_umap = time.time() - start_umap

# Compare results
print("\nPerformance Comparison:")
print(f"{'Method':<10} {'Time':<12} {'Speed'}")
print("-" * 40)
print(f"{'t-SNE':<10} {time_tsne:>8.1f}s")
print(f"{'UMAP':<10} {time_umap:>8.1f}s {time_tsne/time_umap:.1f}x faster")

# Illustrative output (timings vary widely with hardware and dataset size,
# and UMAP's first call also includes JIT compilation time):
# Performance Comparison:
# Method     Time         Speed
# ----------------------------------------
# t-SNE          45.2s
# UMAP            3.1s 14.6x faster

# Test UMAP's ability to transform new data.
# The held-out rows come from the raw dataset and are scaled with the
# already-fitted scaler (X_scaled itself only has 1500 rows).
new_data = scaler.transform(digits.data[1500:1550])  # 50 new samples
X_new_umap = umap_model.transform(new_data)

print(f"\nUMAP can transform new data: {X_new_umap.shape}")  # (50, 2)
print("t-SNE cannot - must retrain entire model")

print("\nConclusion:")
print(f"- UMAP is {time_tsne/time_umap:.1f}x faster ({time_umap:.1f}s vs {time_tsne:.1f}s)")
print("- UMAP can embed new data (production-ready)")
print("- Both produce high-quality 2D visualizations")
print("- Use UMAP for large datasets and production systems")
```

Autoencoders: Deep Learning Approach

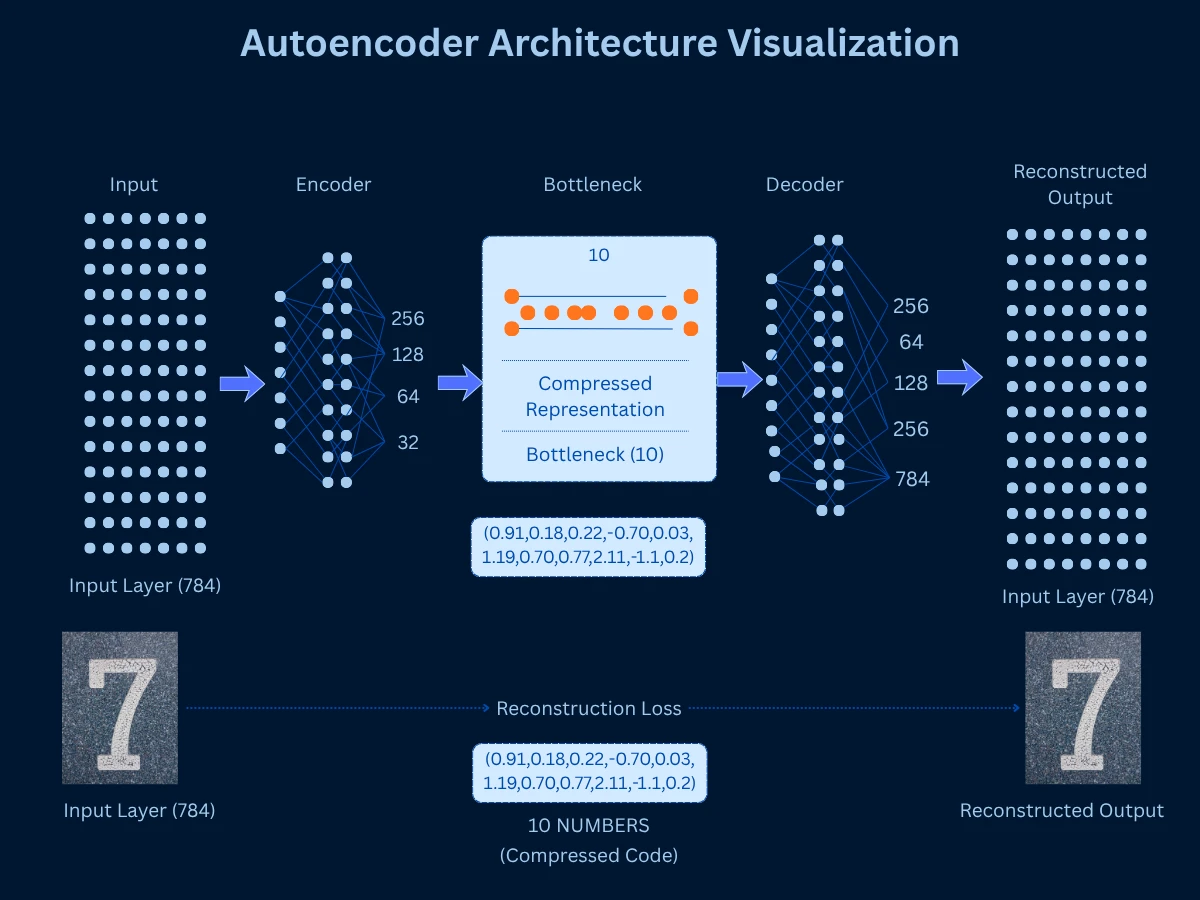

Autoencoders are neural networks trained to compress data to a low-dimensional bottleneck layer, then reconstruct the original data from that compressed representation. The bottleneck layer serves as the dimensionality-reduced representation. By learning to reconstruct data through a narrow bottleneck, the autoencoder must learn an efficient compressed encoding that captures essential information. This is non-linear dimensionality reduction powered by deep learning.

Think of autoencoders like explaining a movie to a friend. You can't repeat every scene word-for-word (high-dimensional original data). Instead, you compress it to key plot points and characters (bottleneck layer), then your friend reconstructs the story in their mind (decoder). The better you are at picking essential information for that summary, the better the reconstruction. Similarly, the autoencoder learns which features are essential by training to minimize the difference between original and reconstructed data. If it can rebuild accurate images from just 10 numbers, those 10 numbers must capture what really matters.

The architecture consists of an encoder (compresses input to bottleneck) and decoder (reconstructs from bottleneck). Training minimizes reconstruction error - how different is the output from the input? Once trained, you discard the decoder and use the encoder to transform new data to low dimensions. Variations include sparse autoencoders (enforce sparsity), variational autoencoders (probabilistic encoding), and denoising autoencoders (learn robust features).
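To make the encoder, bottleneck, and decoder concrete, here is a deliberately tiny autoencoder written in plain NumPy. It is a sketch under stated assumptions: synthetic data, one tanh encoder layer, a linear decoder, full-batch gradient descent, and a hand-picked learning rate. Real autoencoders are usually built with a deep learning framework and are much deeper.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): 2 hidden factors seen through 8 features
latent = rng.normal(size=(300, 2))
X = latent @ rng.normal(size=(2, 8)) + 0.05 * rng.normal(size=(300, 8))
X = (X - X.mean(axis=0)) / X.std(axis=0)

n_features, n_bottleneck = X.shape[1], 2
W_enc = 0.1 * rng.normal(size=(n_features, n_bottleneck))
b_enc = np.zeros(n_bottleneck)
W_dec = 0.1 * rng.normal(size=(n_bottleneck, n_features))
b_dec = np.zeros(n_features)

def encode(inputs):
    """Encoder: compress inputs to the bottleneck representation."""
    return np.tanh(inputs @ W_enc + b_enc)

def decode(codes):
    """Decoder: reconstruct the inputs from the bottleneck."""
    return codes @ W_dec + b_dec

learning_rate = 0.05
losses = []
for epoch in range(500):
    codes = encode(X)
    error = decode(codes) - X
    losses.append(float(np.mean(error ** 2)))  # reconstruction error (MSE)

    # Backpropagate the mean squared error through decoder and encoder
    grad_output = 2.0 * error / error.size
    grad_W_dec = codes.T @ grad_output
    grad_b_dec = grad_output.sum(axis=0)
    grad_pre_activation = (grad_output @ W_dec.T) * (1.0 - codes ** 2)
    grad_W_enc = X.T @ grad_pre_activation
    grad_b_enc = grad_pre_activation.sum(axis=0)

    W_enc -= learning_rate * grad_W_enc
    b_enc -= learning_rate * grad_b_enc
    W_dec -= learning_rate * grad_W_dec
    b_dec -= learning_rate * grad_b_dec

# After training, only the encoder is needed to reduce dimensions
X_reduced = encode(X)
print(f"Reconstruction MSE: {losses[0]:.3f} -> {losses[-1]:.3f}")
print(f"Reduced shape: {X_reduced.shape}")
```

The falling reconstruction error is the training signal described above. The two bottleneck activations per sample are the reduced representation.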

- How it works: Train neural network to compress data through bottleneck and reconstruct it, use bottleneck layer activations as reduced representation

- Strengths: Captures complex non-linear patterns, flexible architecture (deep networks), can transform new data, learns task-specific representations, state-of-the-art for many applications

- Limitations: Requires large datasets (thousands+ samples), slow to train, many hyperparameters to tune, requires deep learning expertise, less interpretable than PCA

- Best for: Large datasets with complex patterns, when you have computational resources, image/audio/text data, when linear methods fail

- Common uses: Image compression and denoising, anomaly detection, generative models (VAEs), feature learning for deep learning, recommendation systems

Other Notable Methods

Beyond PCA, t-SNE, UMAP, and autoencoders, several specialized dimensionality reduction methods exist for specific use cases.

- LDA (Linear Discriminant Analysis): Unlike PCA which ignores labels, LDA uses your class labels to find dimensions that maximize separation between classes. If you have cat and dog images, LDA finds the directions where cats and dogs are most different from each other. It can produce at most one fewer dimension than the number of classes. This makes it excellent for preprocessing data before classification. Think of it as dimensionality reduction specifically designed to help classifiers work better.

- SVD (Singular Value Decomposition): The mathematical engine that powers PCA under the hood. While PCA is the concept, SVD is the calculation method. Often used directly on text data where documents are represented as sparse word-count matrices. For example, SVD-style matrix factorization became well known for movie recommendation through the Netflix Prize, where it was used to find patterns in the sparse user-rating matrix. Extremely efficient with data that has lots of zeros.

- Isomap: Handles data that lives on curved surfaces rather than flat spaces. Instead of measuring straight-line distances (Euclidean), it measures distances along the curved surface (geodesic distances) - like measuring walking distance on a globe rather than tunneling through the Earth. Imagine a Swiss roll of data: PCA would flatten it poorly, but Isomap unrolls it smoothly. Slower than modern methods but mathematically elegant.

- MDS (Multidimensional Scaling): Tries to place points in low dimensions so that distances between them match the original high-dimensional distances as closely as possible. You give it a distance matrix (how far apart everything is), and it creates a map. Classical MDS applied to Euclidean distances gives the same result as PCA. Used in psychology to visualize survey responses and in marketing to map brand perceptions - helps answer questions like 'which brands do consumers see as similar?'

- Factor Analysis: Assumes your observed features are caused by hidden underlying factors. PCA says 'find directions of variation,' while Factor Analysis says 'find hidden causes.' Common in social sciences - for example, test scores in math, science, and reading might be caused by underlying 'quantitative ability' and 'verbal ability' factors. Helps discover the hidden structure behind correlated measurements.

- Random Projection: The speed demon of dimensionality reduction. Instead of carefully calculating optimal directions like PCA, it just projects data onto random directions. Sounds crazy, but the Johnson-Lindenstrauss lemma guarantees that pairwise distances are approximately preserved as long as the target dimension is large enough for the number of points. Used when you have millions of dimensions and need results fast - trading optimality for speed. Popular in large-scale machine learning and real-time systems.
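The random projection idea from the list above is simple enough to try in NumPy. The sketch below is an illustration with assumed sizes (50 points, 5,000 features, 500 target dimensions) and a Gaussian projection matrix. Library versions such as scikit-learn's `GaussianRandomProjection` follow the same principle.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_features, n_projected = 50, 5000, 500
X = rng.normal(size=(n_samples, n_features))

# Random Gaussian directions, scaled so squared lengths are preserved on average
R = rng.normal(size=(n_features, n_projected)) / np.sqrt(n_projected)
X_proj = X @ R

def pairwise_distances(A):
    sq_norms = np.sum(A ** 2, axis=1)
    sq = sq_norms[:, None] + sq_norms[None, :] - 2.0 * A @ A.T
    return np.sqrt(np.maximum(sq, 0.0))

# Compare each pairwise distance before and after projection
upper = np.triu_indices(n_samples, k=1)
ratios = pairwise_distances(X_proj)[upper] / pairwise_distances(X)[upper]
print(f"Projected shape: {X_proj.shape}")
print(f"Distance ratio (projected / original): min={ratios.min():.2f}, max={ratios.max():.2f}")
```

Ratios close to 1 mean the random projection kept pairwise distances nearly intact, even though no structure in the data was analyzed to choose the directions.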

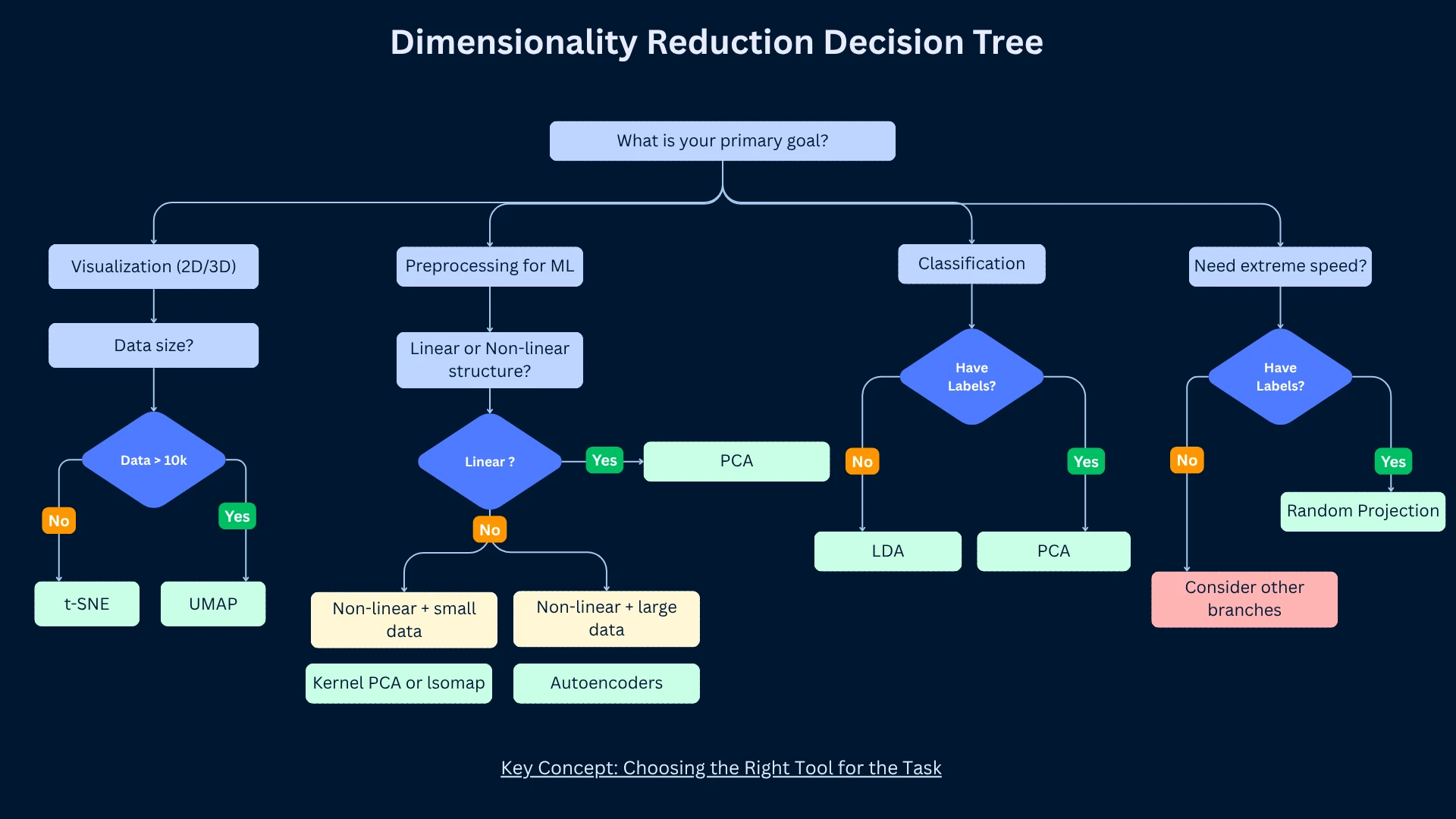

Choosing the Right Dimensionality Reduction Method

No single method works best for all problems. The right choice depends on your goals (visualization vs preprocessing), data characteristics (linear vs non-linear structure), dataset size, computational resources, and interpretability requirements.

| Choose This | When | Typical Workflow |
|---|---|---|
| PCA | Preprocessing for ML, linear structure, need speed and interpretability | Reduce 1000 features to 50, train classifier on reduced data |
| t-SNE | 2D/3D visualization, small dataset, beautiful publication figures needed | Visualize 10,000-gene cancer dataset to see patient clusters |
| UMAP | Large-scale visualization, interactive exploration, need global structure | Explore 1M document embeddings, cluster discovery |
| Autoencoders | Complex non-linear data, large dataset, deep learning expertise available | Learn compact image representations for similarity search |
| LDA | Classification task, want to maximize class separation, have labels | Reduce features while preserving class discriminability |
| Random Projection | Very high dimensions, need extreme speed, approximate solution acceptable | Quick dimensionality reduction for nearest neighbor search |

The 80/20 Rule for Dimensionality Reduction

Practical Guidelines for Dimensionality Reduction

How Many Components to Keep?

Choosing the number of components is a balance between compression and information retention. Too few components lose important information. Too many defeat the purpose of dimensionality reduction. Several techniques help find the sweet spot.

- Explained variance threshold (PCA): Keep components explaining 90-95% of variance. Plot cumulative explained variance, find where curve plateaus. This is the most common approach for PCA.

- Elbow method (PCA): Plot explained variance vs component number. Look for elbow where adding components provides diminishing returns. Similar to choosing K in clustering.

- Cross-validation: Try different numbers of components, measure downstream task performance (classification accuracy, clustering quality). Choose components maximizing performance.

- Domain knowledge: Sometimes problem domain suggests component count. Text analysis might use 50-300 topics, image recognition 100-500 features, gene expression 10-50 pathways.

- Fixed target (visualization): For t-SNE/UMAP, you usually want exactly 2 or 3 dimensions for plotting. No choice needed - the goal dictates dimensionality.

- Rule of thumb: For preprocessing before ML, keep either 10-50 dimensions or the number of dimensions that reaches 95% explained variance, whichever is smaller.

```python
from sklearn.decomposition import PCA
from sklearn.datasets import load_digits
from sklearn.preprocessing import StandardScaler
import numpy as np

# Load and standardize data
digits = load_digits()
scaler = StandardScaler()
X_scaled = scaler.fit_transform(digits.data)

# Fit PCA with all components to analyze variance
pca_full = PCA()
pca_full.fit(X_scaled)

# Get explained variance
explained_var = pca_full.explained_variance_ratio_
cumulative_var = np.cumsum(explained_var)

print("Choosing Optimal Number of Components:")
print("=" * 60)

# Method 1: 95% variance threshold
n_95 = int(np.argmax(cumulative_var >= 0.95)) + 1
print("\n1. Variance Threshold Method (95%):")
print(f"   Components needed: {n_95}")
print(f"   Variance explained: {cumulative_var[n_95-1]:.3f}")

# Method 2: Elbow method - first point where the component-to-component
# drop in explained variance falls below 0.01 (one percentage point).
# The ratios are sorted in descending order, so np.diff is negative and
# is negated here to get positive drops.
drops = -np.diff(explained_var)
elbow = int(np.argmax(drops < 0.01)) + 1
print("\n2. Elbow Method:")
print(f"   Elbow at component: {elbow}")
print(f"   Variance explained: {cumulative_var[elbow-1]:.3f}")

# Show variance breakdown
print("\n3. Variance Breakdown:")
thresholds = [0.80, 0.90, 0.95, 0.99]
for threshold in thresholds:
    n_comp = int(np.argmax(cumulative_var >= threshold)) + 1
    print(f"   {int(threshold*100)}% variance: {n_comp:2d} components")

# Output: the exact component counts depend on the data and preprocessing,
# so run the script to see the values for your dataset.

print("\nRecommendation:")
print("- For visualization: 2-3 components")
print(f"- For preprocessing (balanced): {elbow} components (elbow method)")
print(f"- For high fidelity: {n_95} components (95% variance)")
print(f"\nReduced from {X_scaled.shape[1]} to {elbow}-{n_95} dimensions!")
```
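The cross-validation option from the list above can be sketched with a scikit-learn pipeline. The candidate component counts, the logistic regression classifier, and the 5-fold setting are assumptions picked for illustration. The point is the pattern: score the downstream task for each candidate and compare.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_digits(return_X_y=True)

scores = {}
for n_components in (5, 10, 20, 40):
    # Scaling and PCA sit inside the pipeline so they are re-fit on each
    # training fold, which keeps held-out data out of the preprocessing
    model = make_pipeline(
        StandardScaler(),
        PCA(n_components=n_components),
        LogisticRegression(max_iter=2000),
    )
    scores[n_components] = cross_val_score(model, X, y, cv=5).mean()

for n_components, score in scores.items():
    print(f"{n_components:2d} components: mean CV accuracy = {score:.3f}")

best = max(scores, key=scores.get)
print(f"Best of the candidates tried: {best} components")
```

If two candidates score about the same, the smaller one is usually the better pick, since it gives the same task performance with less to compute and store.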

Essential Preprocessing Steps

- Standardization (critical for PCA, t-SNE, UMAP): Center features to mean zero, scale to unit variance. Use StandardScaler. Without this, large-scale features dominate. ALWAYS standardize unless features already have comparable scales.

- Handle missing values: Impute missing values before dimensionality reduction (mean imputation, median, KNN imputation). Most methods don't handle missing data natively.

- Remove low-variance features: Features with near-zero variance provide no information. Drop them before PCA to avoid numerical issues and speed up computation.

- Handle outliers: Extreme outliers can distort PCA. Consider robust scaling or outlier removal for sensitive applications. t-SNE and UMAP are more robust to outliers.

- Consider feature selection first: If you have 10,000 features and only 100 samples, use feature selection to reduce to 500-1000 features before applying dimensionality reduction. This reduces the risk of overfitting and speeds computation.
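The preprocessing steps above can be chained so they always run in the same order. The following is a minimal sketch, assuming synthetic data with deliberately mismatched scales, one constant column, and some missing values; the step order (impute, drop zero-variance features, standardize, then PCA) is one reasonable choice rather than the only one.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.feature_selection import VarianceThreshold
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (assumption): 200 samples, 6 features on very different scales
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6)) * np.array([1.0, 10.0, 100.0, 1.0, 5.0, 50.0])
X[:, 3] = 7.0                            # a constant, zero-variance feature
X[rng.random(X.shape) < 0.05] = np.nan   # roughly 5% missing values

pipeline = make_pipeline(
    SimpleImputer(strategy="median"),    # fill missing values
    VarianceThreshold(threshold=0.0),    # drop constant features before scaling
    StandardScaler(),                    # zero mean, unit variance
    PCA(n_components=2),                 # reduce to 2 dimensions
)
X_reduced = pipeline.fit_transform(X)

kept = pipeline.named_steps["variancethreshold"].get_support()
print(f"Features kept after variance filter: {kept.sum()} of {X.shape[1]}")
print(f"Reduced shape: {X_reduced.shape}")
```

Wrapping the steps in a pipeline also means the same fitted parameters are reused whenever new data is transformed.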

Interpreting Reduced Dimensions

- PCA component interpretation: Examine feature loadings - which original features contribute most to each component. PC1 might represent 'size' if all features have similar positive loadings. PC2 might represent 'contrast' if features have mixed positive/negative loadings.

- Visualization interpretation: For t-SNE/UMAP, focus on whether clusters exist and separate. Don't read meaning into cluster sizes or inter-cluster distances, which these methods do not reliably preserve. Run multiple times to verify patterns are consistent, not random artifacts.

- Reconstruction error: For PCA and autoencoders, compute reconstruction error - how well can you recover original data from reduced representation? Low error indicates good compression.

- Downstream task performance: The ultimate test - does dimensionality reduction improve or maintain performance on your actual task (classification, clustering, etc.)? If accuracy drops significantly, you're losing critical information.

- Domain validation: Show results to domain experts. Do clusters make sense? Do principal components correspond to known biological/business factors? Dimensionality reduction should reveal meaningful patterns, not artifacts.
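Two of the checks above, feature loadings and reconstruction error, take only a few lines with scikit-learn. This is a minimal sketch, assuming the Iris dataset as a small stand-in; `components_` holds the loadings and `inverse_transform` maps the reduced data back to the original feature space.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Stand-in dataset (assumption): 150 flowers, 4 measurements each
data = load_iris()
X_scaled = StandardScaler().fit_transform(data.data)

pca = PCA(n_components=2).fit(X_scaled)

# Loadings: each row of components_ weights the original features for one component
for i, component in enumerate(pca.components_, start=1):
    weights = ", ".join(
        f"{name}: {w:+.2f}" for name, w in zip(data.feature_names, component)
    )
    print(f"PC{i} loadings -> {weights}")

# Reconstruction error: project down to 2D, map back, and compare with the input
X_reduced = pca.transform(X_scaled)
X_restored = pca.inverse_transform(X_reduced)
mse = np.mean((X_scaled - X_restored) ** 2)
print(f"Reconstruction MSE with 2 components: {mse:.4f}")
print(f"Variance not captured: {1 - pca.explained_variance_ratio_.sum():.4f}")
```

For standardized inputs the two printed numbers agree, because the mean squared reconstruction error of PCA equals the share of variance left in the discarded components.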

Common Pitfalls to Avoid

Real-World Applications

Dimensionality reduction enables applications across every field dealing with high-dimensional data. Understanding these use cases helps you recognize opportunities in your own work.

| Domain | Application | Method Used | Impact |
|---|---|---|---|
| Genomics | Visualizing single-cell RNA sequencing (30,000 genes) | PCA, t-SNE, UMAP | Discovered new cell types and disease subtypes |
| Computer Vision | Face recognition (eigenfaces) | PCA | Reduced face matching time by 100x while maintaining accuracy |
| NLP | Word embeddings visualization | t-SNE, UMAP | Revealed semantic relationships in 300D word vectors |
| Recommendation | Netflix collaborative filtering | SVD (Matrix Factorization) | Compressed 20,000 movies to 50 factors, improved recommendations |
| Astronomy | Galaxy classification from spectra | PCA, Autoencoders | Reduced 1000-wavelength spectra to 10 features for classification |
| Finance | Portfolio risk analysis | PCA (Factor Analysis) | Identified 5-10 risk factors explaining 90% of stock variance |
| Healthcare | Medical image compression and analysis | PCA, Autoencoders | Reduced MRI storage by 90% without diagnostic quality loss |
| Manufacturing | Quality control from sensor data | PCA | Reduced 200 sensor readings to 10 principal components for anomaly detection |
| Marketing | Customer segmentation from behavior | PCA, t-SNE | Reduced 500 features to 20 for clustering, improved targeting by 30% |
| Neuroscience | Brain activity pattern discovery | PCA, ICA | Identified neural circuits from fMRI data (100,000 voxels to 50 networks) |

How Google Uses Dimensionality Reduction

Frequently Asked Questions

01 Should I apply dimensionality reduction before or after train-test split?

Fit on training data only, transform both train and test. This prevents data leakage. Correct workflow: (1) Split data into train/test, (2) Fit PCA on training data only - compute mean, standard deviation, principal components from training data, (3) Transform both training and test data using parameters learned from training. WRONG: fitting on all data before split leaks information from test set into training, inflating performance estimates.
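The leakage-safe workflow can be sketched with a scikit-learn pipeline, which fits the scaler and PCA on the training split only and reuses those parameters on the test split. This is a minimal illustration, assuming the breast cancer dataset, 10 components, and logistic regression as arbitrary stand-ins.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Stand-in dataset (assumption): 569 samples, 30 features
X, y = load_breast_cancer(return_X_y=True)

# (1) Split first, so the test set never influences any fitted parameter
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# (2) fit() learns means, standard deviations, and components from X_train only
model = make_pipeline(
    StandardScaler(),
    PCA(n_components=10),
    LogisticRegression(max_iter=1000),
)
model.fit(X_train, y_train)

# (3) score() transforms X_test with the training-set parameters, then predicts
test_accuracy = model.score(X_test, y_test)
print(f"Test accuracy with 10 components: {test_accuracy:.3f}")
```

The same pipeline object can be passed to cross-validation utilities, which refit every step inside each fold.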

02 Can dimensionality reduction improve model performance?

Yes, especially when you have high-dimensional data with limited samples. Benefits: (1) Reduces overfitting by removing noisy features and reducing model complexity, (2) Speeds training by reducing computational cost, (3) Improves generalization by focusing on signal vs noise, (4) Enables models that struggle with high dimensions (k-NN, SVM). However, for models with built-in regularization (random forests, gradient boosting) on low-dimensional data, dimensionality reduction may not help. Always test both with and without reduction.
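Testing with and without reduction is straightforward with cross-validation. The sketch below is only an illustration, assuming the digits dataset, k-NN with 5 neighbors, and 20 components as arbitrary choices; which variant wins depends on your data and model.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Stand-in dataset (assumption): 64 pixel features per digit image
X, y = load_digits(return_X_y=True)

# Same model, with and without a PCA step in front of it
baseline = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
reduced = make_pipeline(
    StandardScaler(), PCA(n_components=20), KNeighborsClassifier(n_neighbors=5)
)

# 5-fold cross-validation refits scaler and PCA inside every fold
baseline_scores = cross_val_score(baseline, X, y, cv=5)
reduced_scores = cross_val_score(reduced, X, y, cv=5)

print(f"Without PCA (64 features): {baseline_scores.mean():.3f}")
print(f"With PCA (20 components):  {reduced_scores.mean():.3f}")
```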

03 What's the difference between dimensionality reduction and feature selection?

Feature selection: Choose a subset of original features (e.g., select 50 out of 1000 features). Keeps features unchanged. Methods: univariate filtering, recursive feature elimination, L1 regularization. Dimensionality reduction: Create new features as combinations of originals (e.g., 50 principal components from 1000 features). Transforms features to new space. Use feature selection when interpretability matters; use dimensionality reduction for maximum compression.

04 Why does PCA sometimes make clusters less separable?

PCA maximizes variance, not class separability. If class-distinguishing features have low variance, PCA will discard them. Example: two classes differ only in a low-variance feature - PCA's first components capture high-variance features unrelated to classes. SOLUTION: (1) Use supervised dimensionality reduction like LDA if you have labels, (2) Try more components, (3) Standardize features so variance differences aren't just scale differences, (4) Consider non-linear methods if classes are separated by non-linear boundaries.
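The effect is easy to reproduce on toy data. In this minimal sketch, the two synthetic features and the `separation` helper are illustrative assumptions: one feature is high-variance noise, the other is a low-variance feature that carries the class difference. PCA's first component follows the noise, while LDA, which uses the labels, follows the class signal.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

rng = np.random.default_rng(0)
n = 200
y = np.repeat([0, 1], n)

# Feature 0: high-variance noise unrelated to the class
noise_feature = rng.normal(0.0, 5.0, size=2 * n)
# Feature 1: low-variance feature whose mean differs between classes
signal_feature = np.where(y == 0, -1.0, 1.0) + rng.normal(0.0, 0.3, size=2 * n)
X = np.column_stack([noise_feature, signal_feature])

def separation(z, labels):
    """Distance between class means in units of pooled standard deviation."""
    a, b = z[labels == 0], z[labels == 1]
    pooled_std = np.sqrt((a.var() + b.var()) / 2)
    return abs(a.mean() - b.mean()) / pooled_std

z_pca = PCA(n_components=1).fit_transform(X)[:, 0]
z_lda = LinearDiscriminantAnalysis(n_components=1).fit_transform(X, y)[:, 0]

print(f"Class separation along PC1: {separation(z_pca, y):.2f}")
print(f"Class separation along LD1: {separation(z_lda, y):.2f}")
```

Standardizing both features first would remove the scale difference, which is why option (3) above is worth trying before switching methods.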

05 Can I use dimensionality reduction for feature engineering?

Yes! Dimensionality reduction creates new features that can improve model performance. Workflow: (1) Apply dimensionality reduction to create K new features (principal components, embeddings), then either (2) use these K features as the input to your model, or (3) concatenate them with the original features to give the model both views. Example: reduce 1000 features to 50 with PCA, use those 50 as input to random forest. This can be especially effective for neural networks.

06 How do I handle categorical features for dimensionality reduction?

Standard PCA, t-SNE, UMAP expect numerical features. For categorical features: (1) One-hot encoding: Convert categories to binary features, then apply dimensionality reduction, (2) Target encoding: Replace categories with mean target value for supervised problems, (3) Embeddings: Use entity embeddings (autoencoders) to learn low-dimensional representations, (4) Gower distance: Use distance metrics designed for mixed data types, (5) Separate handling: Apply dimensionality reduction only to numerical features, keep categorical as-is.
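Option (1), one-hot encoding followed by dimensionality reduction, can be sketched with a `ColumnTransformer`. The tiny customer table and its column names below are made up for illustration; `sparse_threshold=0.0` is used so the combined matrix is dense, which `PCA` expects.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical mixed-type data (assumption): 2 numeric and 2 categorical columns
df = pd.DataFrame({
    "age": [23, 45, 31, 52, 38, 27, 61, 44],
    "income": [32000, 85000, 54000, 120000, 67000, 41000, 98000, 73000],
    "plan": ["basic", "pro", "basic", "enterprise", "pro", "basic", "enterprise", "pro"],
    "region": ["north", "south", "east", "west", "north", "south", "east", "west"],
})

preprocess = ColumnTransformer(
    [
        ("num", StandardScaler(), ["age", "income"]),                       # scale numeric columns
        ("cat", OneHotEncoder(handle_unknown="ignore"), ["plan", "region"]),  # categories -> 0/1 columns
    ],
    sparse_threshold=0.0,  # always return a dense array
)

pipeline = make_pipeline(preprocess, PCA(n_components=3))
Z = pipeline.fit_transform(df)

encoded_width = preprocess.transform(df).shape[1]
print(f"Encoded width: {encoded_width} columns, reduced to {Z.shape[1]}")
```

With many categories the one-hot matrix becomes wide and sparse, so a sparse-friendly reducer may be a better fit than dense PCA in that case.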

07 Why are my t-SNE results different every time I run it?

t-SNE is non-deterministic: it starts from a random initialization and optimizes a non-convex objective, so different starting points can settle into different layouts. SOLUTIONS: (1) Set a random seed for reproducibility during development (random_state parameter), (2) Run multiple times (5-10 runs) and look for consistent patterns - clusters that appear in every run are far more likely to be real, (3) Initialize with PCA for more stable results, (4) Try UMAP, whose default spectral initialization tends to give more consistent layouts, though it is also stochastic unless you fix its seed. Remember: exact positions change, but relative structure (clusters, neighborhoods) should be consistent.
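Solutions (1) and (3) combine naturally in scikit-learn's `TSNE`. This is a minimal sketch, assuming a 200-image subset of the digits dataset and the exact (non-approximate) solver, which is practical only because the sample is small.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

# Small stand-in sample (assumption) so the exact solver runs quickly
X, _ = load_digits(return_X_y=True)
X = X[:200]

def embed(seed):
    """Run t-SNE with PCA initialization and a fixed random seed."""
    tsne = TSNE(
        n_components=2,
        init="pca",          # deterministic, structure-aware starting layout
        perplexity=30,
        method="exact",      # exact gradients; fine for a few hundred points
        random_state=seed,   # fixes any remaining randomness
    )
    return tsne.fit_transform(X)

first_run = embed(seed=0)
second_run = embed(seed=0)

print(f"Embedding shape: {first_run.shape}")
print(f"Same seed, same layout: {np.allclose(first_run, second_run)}")
```

For the multi-run check in (2), call `embed` with several different seeds and compare which groups of points stay together across the resulting plots.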

08 When should I use kernel PCA instead of regular PCA?

Kernel PCA applies the kernel trick to capture non-linear relationships while maintaining PCA's framework. Use kernel PCA when: (1) Data has non-linear structure that linear PCA misses, (2) You want to stay within PCA's framework (components ranked by variance) while capturing non-linear structure, keeping in mind that the components no longer have simple per-feature loadings, (3) Dataset is small-medium size (kernel PCA builds an n x n kernel matrix, so it is computationally expensive), (4) You need to reconstruct data (some kernels allow approximate reconstruction). For large datasets or when reconstruction isn't needed, consider UMAP or autoencoders instead.
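A classic illustration is two concentric circles, which no linear projection can pull apart. The sketch below assumes an RBF kernel with `gamma=2.0`, a value picked by hand for this toy data (in practice it needs tuning), and a small illustrative `separation` helper to compare the first component of each method.

```python
import numpy as np
from sklearn.datasets import make_circles
from sklearn.decomposition import PCA, KernelPCA

# Toy data (assumption): inner and outer circle, one class each
X, y = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)

def separation(z, labels):
    """Distance between class means in units of pooled standard deviation."""
    a, b = z[labels == 0], z[labels == 1]
    pooled_std = np.sqrt((a.var() + b.var()) / 2)
    return abs(a.mean() - b.mean()) / pooled_std

# Linear PCA only rotates the plane, so the circles stay nested
X_linear = PCA(n_components=2).fit_transform(X)

# Kernel PCA with an RBF kernel; fit_inverse_transform enables approximate reconstruction
kpca = KernelPCA(
    n_components=2, kernel="rbf", gamma=2.0,
    fit_inverse_transform=True, alpha=0.1,
)
X_kernel = kpca.fit_transform(X)
X_back = kpca.inverse_transform(X_kernel)  # approximate pre-image in input space

print(f"Separation on linear PC1: {separation(X_linear[:, 0], y):.2f}")
print(f"Separation on kernel PC1: {separation(X_kernel[:, 0], y):.2f}")
print(f"Reconstructed shape: {X_back.shape}")
```

Because the kernel matrix has one row and column per sample, memory and runtime grow quickly with dataset size, which is the cost noted in (3).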

Ready to Dive Deeper?

You now understand the most important dimensionality reduction techniques, when to use linear vs non-linear methods, how each major algorithm works, and practical guidelines for application. Your next step is exploring specific algorithms in depth or applying these techniques to your own high-dimensional data.

Your Learning Path
