Association Rules Mining

Discover Hidden Patterns in Transaction Data

"Customers who bought diapers also bought baby formula." Amazon's recommendation engine makes billions from this simple insight. Association rule mining discovers these if-then patterns automatically from transaction data - identifying which items co-occur in purchases without any labeled training data. From optimizing store layouts to detecting fraud patterns to predicting disease symptoms, you'll master the algorithms (Apriori, FP-Growth), core metrics (support, confidence, lift), and real-world applications that make association rules one of the most profitable unsupervised learning techniques in production.

Association rule mining transformed retail and e-commerce by revealing hidden patterns in transaction data. The Apriori algorithm we developed showed that computational efficiency and statistical rigor could coexist. Today's recommendation engines generating billions in revenue trace their roots to these early pattern mining techniques. The lesson: simple rules, when discovered at scale from real data, create immense business value.

What Are Association Rules?

An association rule is a pattern that says: "If A, then B" - written as A → B. The left side (A) is called the antecedent (the "if" part): the item or group of items that triggers the prediction. The right side (B) is the consequent (the "then" part): the item or group of items the rule predicts will appear alongside the antecedent. For example, bread, butter → milk means "customers who buy bread and butter also tend to buy milk"; here the antecedent is "bread, butter" and the consequent is "milk". The rule does not claim causation - it only shows co-occurrence, meaning the items appear together in the same transaction. Diapers and baby formula, for instance, often co-occur in the same shopping basket not because one causes the other, but because parents buy both at once.

Rules come from frequent itemsets. An itemset is a group of one or more items that appear together in a transaction: {bread, milk} is a 2-itemset and {bread, milk, eggs} is a 3-itemset. An itemset is frequent when it appears in at least a minimum number (or percentage) of transactions; rarer combinations are filtered out as noise. If bread and milk appear together in 15% of all transactions and the minimum threshold is 10%, then {bread, milk} is a frequent itemset. A single frequent itemset can produce several rules. The 3-itemset bread, milk, eggs can be split into six different rules, including:

- bread, milk → eggs - customers buying bread and milk also buy eggs

- bread → milk, eggs - customers buying bread also buy milk and eggs

- milk, eggs → bread - customers buying milk and eggs also buy bread

Each rule is then scored with metrics like support, confidence, and lift to judge whether the pattern is strong enough to act on.
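The rules listed above are examples of a larger set: every way of splitting an itemset into a non-empty "if" side and a non-empty "then" side is a candidate rule. The short sketch below enumerates those splits; the helper name `rules_from_itemset` is made up for this illustration and is not part of any library.

```python
from itertools import combinations

def rules_from_itemset(itemset):
    """Yield every (antecedent, consequent) split of an itemset.

    Both sides must be non-empty, so a k-itemset yields 2**k - 2 rules.
    """
    items = sorted(itemset)
    for size in range(1, len(items)):
        for antecedent in combinations(items, size):
            consequent = tuple(i for i in items if i not in antecedent)
            yield antecedent, consequent

rules = list(rules_from_itemset({"bread", "milk", "eggs"}))
for antecedent, consequent in rules:
    print(", ".join(antecedent), "->", ", ".join(consequent))
print(len(rules), "candidate rules")  # 6 candidate rules
```

Not all of these candidates survive: each one is scored separately, and only those passing the chosen thresholds are kept.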

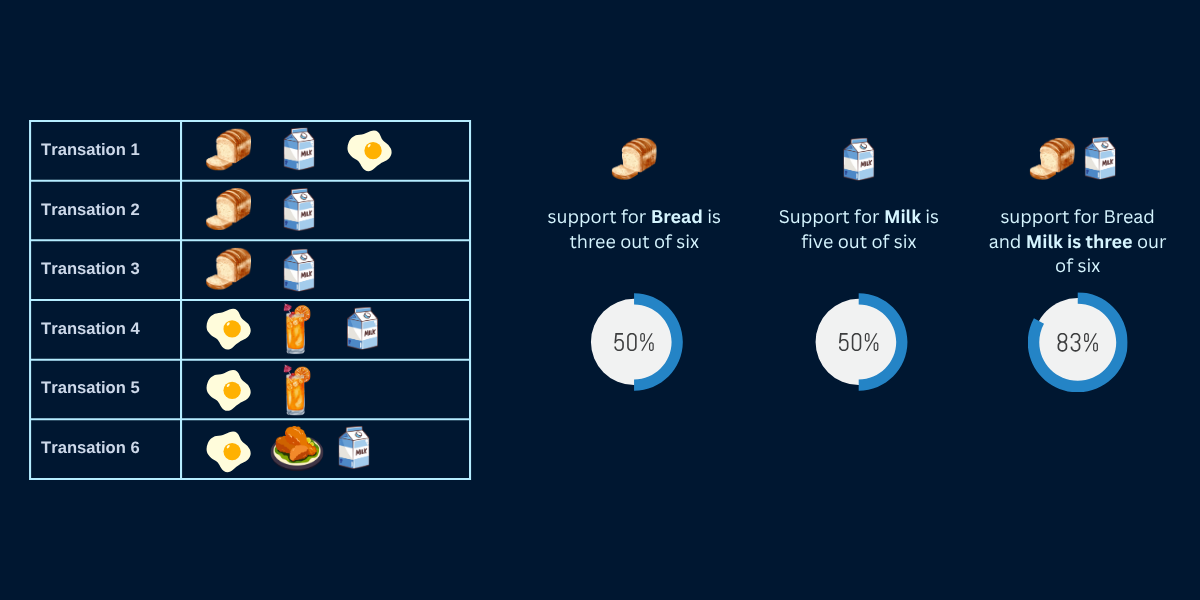

Understanding Transaction Data

Association rule mining works on transaction databases where each row is a transaction containing a set of items. A grocery store transaction might include bread, milk, eggs, butter. A medical record might list diabetes, hypertension, high cholesterol. A web session might show homepage, product page, cart, checkout. Each item is simply marked as present or not - the algorithm does not care how many you bought or in what order.

| Transaction ID | Items Purchased |
|---|---|
| T001 | bread, milk, eggs |
| T002 | bread, butter, jam |
| T003 | milk, eggs, cheese |
| T004 | bread, milk, butter |
| T005 | bread, milk, eggs, butter |

From this database, the algorithm identifies frequent itemsets (bread and milk appear together in T001, T004, and T005, which is 60% support) and generates rules (bread → milk with 75% confidence means that 3 of the 4 transactions containing bread also include milk). The goal is finding hidden patterns that humans would miss in large datasets with thousands of items and millions of transactions.
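Counting directly on the five transactions in the table makes the numbers concrete: bread and milk appear together in 3 of 5 transactions (60% support) and in 3 of the 4 transactions that contain bread (75% confidence). This is a minimal sketch; the dictionary layout and the helper `containing` are choices made for this illustration.

```python
# The five example transactions, stored as sets because only presence matters
transactions = {
    "T001": {"bread", "milk", "eggs"},
    "T002": {"bread", "butter", "jam"},
    "T003": {"milk", "eggs", "cheese"},
    "T004": {"bread", "milk", "butter"},
    "T005": {"bread", "milk", "eggs", "butter"},
}

def containing(items):
    """Return the sorted IDs of transactions that contain every item in `items`."""
    return sorted(tid for tid, basket in transactions.items() if items <= basket)

bread = containing({"bread"})
bread_and_milk = containing({"bread", "milk"})

support = len(bread_and_milk) / len(transactions)
confidence = len(bread_and_milk) / len(bread)

print(bread_and_milk)  # ['T001', 'T004', 'T005']
print(f"support={support:.0%}, confidence={confidence:.0%}")  # support=60%, confidence=75%
```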

Key Metrics: Support, Confidence, and Lift

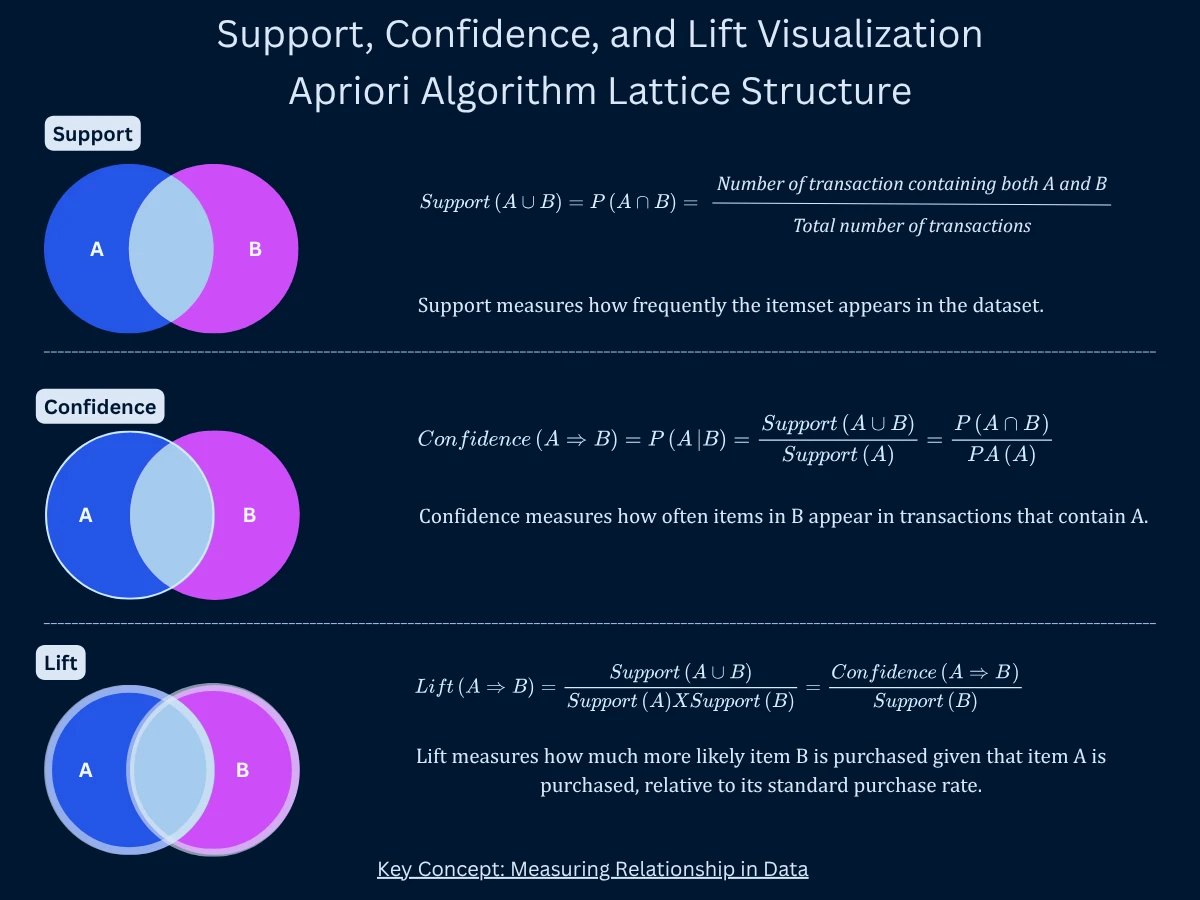

Association rules are evaluated with three core metrics: support measures frequency, confidence measures reliability, and lift measures correlation strength. Understanding these metrics is critical for filtering useful rules from spurious correlations.

Support: Frequency of Occurrence

Support is the proportion of transactions containing an itemset. For rule A → B, support is calculated as transactions containing both A and B divided by total transactions. Example: If bread and milk appear together in 150 of 1000 transactions, support is 15%. High support means the pattern is common, so the metrics computed from it rest on many transactions rather than a handful. Low support patterns (less than 1%) are often discarded as noise unless domain experts confirm they are meaningful.

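Support Formula

$$\text{Support}(A \rightarrow B) = \frac{\text{Count}(A \text{ and } B)}{\text{Total transactions}}$$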

Confidence: Reliability of the Rule

Confidence measures how often B appears in transactions that already contain A. Here is how to calculate it step by step, using 1000 total transactions:

- Support(A → B) = Support(bread → milk) = 150 appearances / 1000 transactions = 15%

- Support(A) = Support(bread) = 300 appearances / 1000 transactions = 30%

- Confidence = Support(A → B) / Support(A) = 15% / 30% = 50%

That 50% means half of all customers who bought bread also bought milk. High confidence (above 60-70%) indicates a strong predictive relationship.

Confidence Formula

$$\text{Confidence}(A \rightarrow B) = \frac{\text{Support}(A \rightarrow B)}{\text{Support}(A)}$$

However, confidence alone can be misleading. If milk is extremely popular (appearing in 80% of all transactions), then 50% confidence is actually below the baseline expectation. This is why lift is needed.

Lift: Correlation Strength

Lift measures how much more likely B is to be purchased when A is purchased, compared to B being purchased randomly. Here is how to calculate it step by step, continuing from our bread and milk example:

- Confidence(A → B) = Confidence(bread → milk) = 50% (calculated above)

- Support(B) = Support(milk) = 400 appearances / 1000 transactions = 40%

- Lift(A → B) = Confidence(A → B) / Support(B) = 50% / 40% = 1.25

A lift of 1.25 means customers who bought bread are 25% more likely to buy milk than a random customer. Here is how to interpret any lift value:

- Lift = 1 - the two items are independent, buying A has no effect on B

- Lift > 1 - buying A makes B more likely, a positive association

- Lift < 1 - buying A actually makes B less likely, a negative association

Rules with lift above 2-3 are considered strong correlations worth acting on.

Lift Formula

$$\text{Lift}(A \rightarrow B) = \frac{\text{Confidence}(A \rightarrow B)}{\text{Support}(B)}$$

| Metric | Formula | Interpretation | Typical Threshold |
|---|---|---|---|
| Support | Count(A and B) / Total | How often itemset appears | > 1-5% |
| Confidence | Support(A,B) / Support(A) | How reliable the rule is | > 60-70% |
| Lift | Confidence / Support(B) | Strength of correlation | > 1.5-2.0 |
| Conviction | (1 - Support(B)) / (1 - Confidence) | Rule strength vs randomness | > 1.2 |
| Leverage | Support(A,B) - Support(A) × Support(B) | Improvement over independence | > 0.01 |
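All five metrics in the table can be computed from four counts. The sketch below applies the table's formulas to the running bread → milk example (1000 transactions, 300 with bread, 400 with milk, 150 with both). The function name `rule_metrics` and its parameter names are invented for this illustration.

```python
def rule_metrics(n_total, n_a, n_b, n_ab):
    """Compute the metrics for rule A -> B from raw transaction counts."""
    support_a = n_a / n_total
    support_b = n_b / n_total
    support_ab = n_ab / n_total
    confidence = n_ab / n_a
    lift = confidence / support_b
    leverage = support_ab - support_a * support_b
    # Conviction is undefined (infinite) when the rule never fails
    conviction = float("inf") if confidence == 1 else (1 - support_b) / (1 - confidence)
    return {
        "support": support_ab,
        "confidence": confidence,
        "lift": lift,
        "conviction": conviction,
        "leverage": leverage,
    }

metrics = rule_metrics(n_total=1000, n_a=300, n_b=400, n_ab=150)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

For these counts the rule has support 0.15, confidence 0.50, lift 1.25, conviction 1.20, and leverage 0.03.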

```python
from collections import Counter

# Sample transaction data (grocery store)
transactions = [
    ['bread', 'milk', 'eggs'],
    ['bread', 'butter', 'jam'],
    ['milk', 'eggs', 'cheese'],
    ['bread', 'milk', 'butter'],
    ['bread', 'milk', 'eggs', 'butter'],
    ['milk', 'cheese'],
    ['bread', 'butter', 'eggs'],
    ['bread', 'milk'],
    ['milk', 'eggs'],
    ['bread', 'milk', 'butter', 'eggs']
]

total_transactions = len(transactions)
print(f"Total transactions: {total_transactions}\n")

# Calculate support for individual items
item_counts = Counter()
for transaction in transactions:
    for item in transaction:
        item_counts[item] += 1

print("Item Support:")
for item, count in item_counts.most_common():
    support = count / total_transactions
    print(f"  {item}: {count}/{total_transactions} = {support:.2%}")

# Output:
#   milk: 8/10 = 80.00%
#   bread: 7/10 = 70.00%
#   eggs: 6/10 = 60.00%
#   butter: 5/10 = 50.00%
#   cheese: 2/10 = 20.00%
#   jam: 1/10 = 10.00%

# Calculate metrics for rule: bread -> milk
bread_count = item_counts['bread']  # 7
milk_count = item_counts['milk']    # 8

# Count transactions with both bread AND milk
bread_and_milk = sum(1 for t in transactions if 'bread' in t and 'milk' in t)  # 5
print("\nRule: bread -> milk")
print(f"Bread appears in {bread_count} transactions")
print(f"Milk appears in {milk_count} transactions")
print(f"Both appear together in {bread_and_milk} transactions\n")

# Support: P(bread AND milk)
support = bread_and_milk / total_transactions
print(f"Support = {bread_and_milk}/{total_transactions} = {support:.2%}")

# Confidence: P(milk | bread) = P(bread AND milk) / P(bread)
confidence = bread_and_milk / bread_count
print(f"Confidence = {bread_and_milk}/{bread_count} = {confidence:.2%}")

# Lift: Confidence / P(milk)
milk_support = milk_count / total_transactions
lift = confidence / milk_support
print(f"Lift = {confidence:.3f} / {milk_support:.3f} = {lift:.2f}")

# For this data: support 50%, confidence 71%, lift 0.89
print("\nInterpretation:")
print(f"- {support:.0%} of all transactions contain both bread and milk")
print(f"- {confidence:.0%} of bread buyers also buy milk")
print(f"- Bread buyers are {lift:.2f}x as likely to buy milk as the average customer")
if lift > 1:
    print("- Lift > 1 indicates a positive association")
elif lift < 1:
    # Milk is in 80% of all baskets, so 71% confidence is below the baseline
    print("- Lift < 1 indicates a negative association despite the high confidence")
else:
    print("- Lift = 1 indicates the items are independent")
```

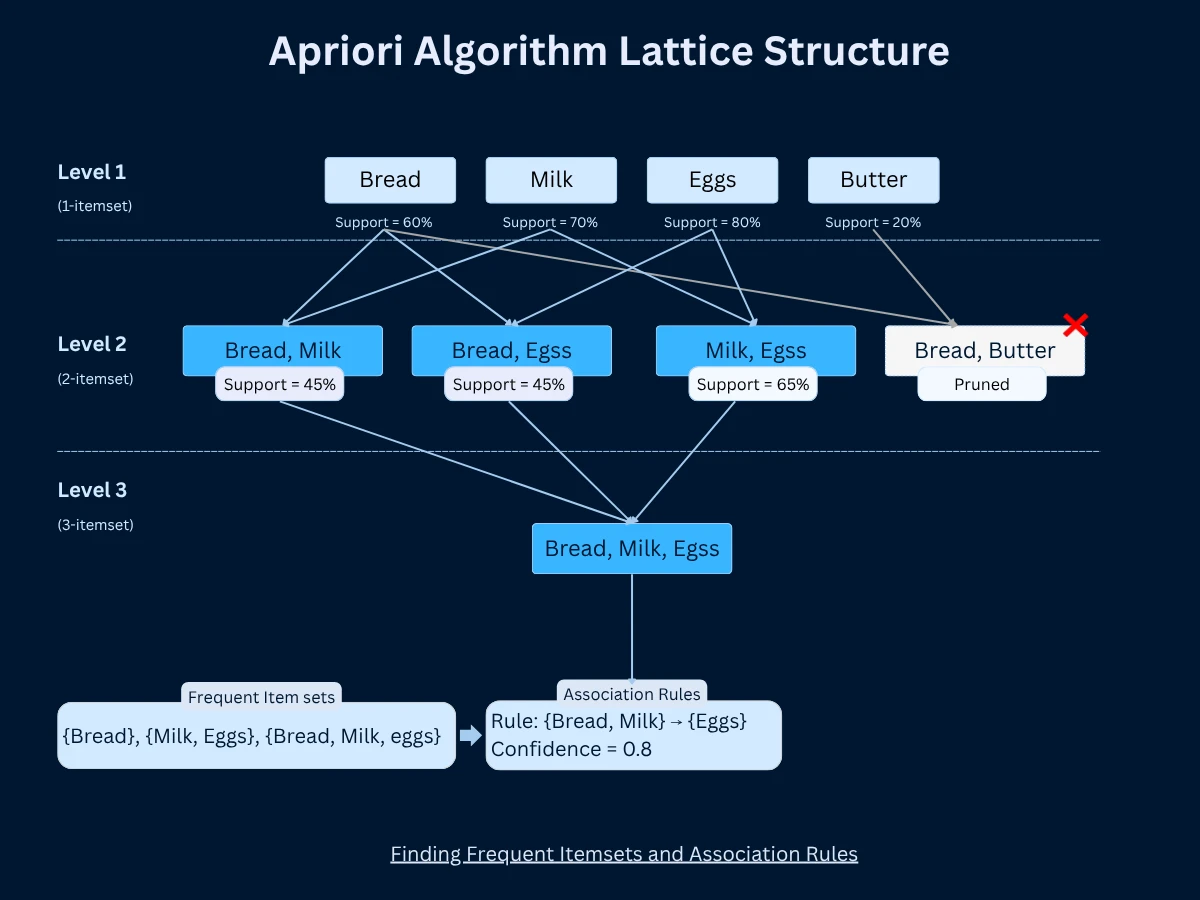

The Apriori Algorithm

Apriori is the foundational algorithm for association rule mining, introduced by Agrawal and Srikant in 1994. It is built on one simple but powerful idea: if a group of items is frequent, every smaller group within it must also be frequent. The flip side follows directly - if a group is rare, any larger group containing it can only be as rare or rarer. This lets the algorithm skip millions of combinations without ever checking them.

The algorithm runs in two phases. With 1000 items there are potentially 2^1000 possible combinations - far too many to check one by one. The two-phase approach keeps this manageable:

- Frequent itemset generation - scan all transactions and find every group of items that meets the minimum support threshold. Any group that falls below the threshold is dropped immediately, along with all larger groups that contain it.

- Rule generation - take those frequent groups and create all possible if-then rules from them, keeping only the rules that meet the minimum confidence threshold.

Set Minimum Support Threshold

Define minimum support (e.g., 2% of transactions). Only itemsets appearing in at least this percentage of transactions will be considered frequent. This threshold filters out rare combinations that lack statistical significance.

Generate Candidate 1-Itemsets

Count frequency of each individual item across all transactions. Example: bread appears in 300 of 1000 transactions (30% support). Filter out items below minimum support threshold.

Generate Candidate 2-Itemsets

Combine frequent 1-itemsets into pairs and count their co-occurrence. Example: bread and milk appear together in 150 transactions (15% support). Filter out pairs below threshold.

Generate Larger Itemsets

Iteratively build 3-itemsets from frequent 2-itemsets, 4-itemsets from frequent 3-itemsets, and so on. Stop when no new frequent itemsets can be generated. This is the bottleneck for large datasets.

Generate Association Rules

From each frequent itemset, create all possible rules and calculate confidence. Example: From bread, milk, eggs create rules like: bread → milk, eggs or milk → bread, eggs. Each rule gets a confidence score.

Filter by Confidence and Lift

Keep only rules exceeding minimum confidence (e.g., 60%) and lift > 1. Rules with lift <= 1 indicate negative or no correlation. Final output is a ranked list of high-quality association rules ready for business application.

The key insight is pruning: if bread has 1% support (below 2% threshold), there is no need to check bread with milk, bread with eggs, etc. because they will all have support less than or equal to 1%. This reduces billions of candidate checks to thousands. However, Apriori still requires multiple database scans (one per level) and generates many candidates, making it slow for large datasets or low support thresholds.
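The level-wise search and the pruning step can be sketched in plain Python. This is a teaching sketch on the five-transaction table from earlier, assuming a 60% minimum support; the function name `apriori_frequent_itemsets` is invented here, and production libraries use far more optimized candidate generation.

```python
from itertools import combinations

def apriori_frequent_itemsets(transactions, min_support):
    """Find frequent itemsets level by level, pruning with the Apriori property."""
    baskets = [frozenset(t) for t in transactions]
    n = len(baskets)

    def keep_frequent(candidates):
        """One database scan: measure each candidate and keep the frequent ones."""
        result = {}
        for candidate in candidates:
            support = sum(1 for b in baskets if candidate <= b) / n
            if support >= min_support:
                result[candidate] = support
        return result

    # Level 1: frequent single items
    level = keep_frequent({frozenset([item]) for b in baskets for item in b})
    frequent = dict(level)
    k = 2
    while level:
        prev = set(level)
        # Join: combine frequent (k-1)-itemsets into candidate k-itemsets
        candidates = {a | b for a in prev for b in prev if len(a | b) == k}
        # Prune: drop any candidate with an infrequent (k-1)-subset, without counting it
        candidates = {c for c in candidates
                      if all(frozenset(s) in prev for s in combinations(c, k - 1))}
        level = keep_frequent(candidates)
        frequent.update(level)
        k += 1
    return frequent

transactions = [
    ["bread", "milk", "eggs"],
    ["bread", "butter", "jam"],
    ["milk", "eggs", "cheese"],
    ["bread", "milk", "butter"],
    ["bread", "milk", "eggs", "butter"],
]
frequent = apriori_frequent_itemsets(transactions, min_support=0.6)
for itemset, support in sorted(frequent.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), f"{support:.0%}")
```

On this data the search finds four frequent items and three frequent pairs. The candidate {bread, milk, butter} is discarded in the prune step because {milk, butter} is not frequent, so its support is never counted.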

Apriori in Action

```python
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder
import pandas as pd

# Transaction data (list of lists)
transactions = [
    ['milk', 'bread', 'butter'],
    ['beer', 'bread', 'diapers', 'eggs'],
    ['milk', 'diapers', 'beer', 'chips'],
    ['bread', 'milk', 'diapers', 'beer'],
    ['bread', 'milk', 'diapers', 'chips'],
    ['beer', 'chips'],
    ['milk', 'diapers', 'beer', 'butter'],
    ['bread', 'butter', 'milk'],
    ['diapers', 'beer', 'chips'],
    ['milk', 'bread', 'butter', 'eggs']
]

# Step 1: Convert to one-hot encoded DataFrame
te = TransactionEncoder()
te_array = te.fit(transactions).transform(transactions)
df = pd.DataFrame(te_array, columns=te.columns_)

print("One-hot encoded transaction data:")
print(df.head())
print(f"\nShape: {df.shape[0]} transactions, {df.shape[1]} unique items\n")

# Step 2: Find frequent itemsets using Apriori
# min_support=0.3 means itemset must appear in 30% of transactions
frequent_itemsets = apriori(df, min_support=0.3, use_colnames=True)
frequent_itemsets['length'] = frequent_itemsets['itemsets'].apply(len)

print("Frequent Itemsets (support >= 30%):")
print(frequent_itemsets.sort_values('support', ascending=False))
print(f"\nFound {len(frequent_itemsets)} frequent itemsets\n")

# For this data (hand-checkable):
#   milk 0.7; beer, bread, diapers 0.6; butter, chips 0.4
#   {beer, diapers} 0.5; {bread, milk} 0.5

# Step 3: Generate association rules
rules = association_rules(frequent_itemsets, metric="confidence", min_threshold=0.6)

# Keep only positive correlations and rank by lift
rules = rules[rules['lift'] > 1.0].sort_values('lift', ascending=False)

print("Association Rules (confidence >= 60%, lift > 1):")
print(rules[['antecedents', 'consequents', 'support', 'confidence', 'lift']]
      .to_string(index=False))

# For this data (hand-checkable), the output includes:
#   (diapers) -> (beer):  support 0.50, confidence 0.83, lift 1.39
#   (beer) -> (diapers):  support 0.50, confidence 0.83, lift 1.39
#   (bread) -> (milk):    support 0.50, confidence 0.83, lift 1.19

# Report the highest-lift rule (rules are sorted by lift above)
top = rules.iloc[0]
print(f"\nTop rule: {', '.join(sorted(top['antecedents']))} -> "
      f"{', '.join(sorted(top['consequents']))}")
print(f"  Support: {top['support']:.1%}")
print(f"  Confidence: {top['confidence']:.1%}")
print(f"  Lift: {top['lift']:.2f}")

# Look up the famous 'beer and diapers' retail pattern explicitly
is_diapers_beer = (
    rules['antecedents'].apply(lambda s: s == frozenset({'diapers'}))
    & rules['consequents'].apply(lambda s: s == frozenset({'beer'}))
)
diapers_beer = rules[is_diapers_beer].iloc[0]
print(f"\nInterpretation: Customers buying diapers have a "
      f"{diapers_beer['confidence']:.0%} chance of buying beer!")
print("This is the famous 'beer and diapers' retail pattern.")
```

Advanced Mining Algorithms

While Apriori is the classic algorithm, several modern alternatives offer better performance for specific use cases. These algorithms use different data structures and search strategies to overcome Apriori limitations.

FP-Growth: Faster Pattern Mining

FP-Growth (Frequent Pattern Growth) solves the same problem as Apriori but in a smarter way. Apriori can generate millions of candidate combinations and test each one - slow and memory-heavy. FP-Growth skips all of that. Instead, it compresses your entire transaction database into a single tree structure called an FP-tree, then mines patterns directly from the tree. It only needs to read your data twice, which often makes it an order of magnitude or more faster than Apriori on large datasets; the exact speedup depends on the data and the support threshold.

Here is how FP-Growth works step by step:

- First scan - read all transactions once and count how often each item appears. Drop any item that falls below the minimum support threshold.

- Build the FP-tree - read the data a second time and insert each transaction into the tree, with items sorted by frequency. The most common items sit near the top; rarer items branch lower. Transactions that share common items reuse the same branch, so the tree stays compact even for millions of transactions.

- Mine the tree - for each item, trace all the paths in the tree that contain it. These paths reveal which other items appear alongside it, giving you the frequent patterns directly - no candidate generation needed.
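The two scans and the branch sharing described above can be sketched in a few dozen lines. This covers only tree construction, not the mining step, and the names `FPNode`, `build_fp_tree`, `count_nodes`, and `show` are invented for this illustration. It reuses the five-transaction grocery table with a minimum count of 3.

```python
from collections import Counter

class FPNode:
    """One node of the FP-tree: an item, a count, and child nodes keyed by item."""
    def __init__(self, item, parent=None):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}

def build_fp_tree(transactions, min_count):
    # Scan 1: count items and drop those below the minimum count
    counts = Counter(item for t in transactions for item in set(t))
    frequent = {item: c for item, c in counts.items() if c >= min_count}

    # Scan 2: insert each transaction, most frequent items first
    root = FPNode(None)
    for t in transactions:
        ordered = sorted((i for i in set(t) if i in frequent),
                         key=lambda i: (-frequent[i], i))
        node = root
        for item in ordered:
            if item not in node.children:
                node.children[item] = FPNode(item, parent=node)
            node = node.children[item]
            node.count += 1  # shared prefixes reuse the same branch
    return root, frequent

def count_nodes(node):
    """Number of nodes below `node`."""
    return sum(1 + count_nodes(child) for child in node.children.values())

def show(node, depth=0):
    """Print the tree with indentation showing depth."""
    for item in sorted(node.children):
        child = node.children[item]
        print("  " * depth + f"{item}:{child.count}")
        show(child, depth + 1)

transactions = [
    ["bread", "milk", "eggs"],
    ["bread", "butter", "jam"],
    ["milk", "eggs", "cheese"],
    ["bread", "milk", "butter"],
    ["bread", "milk", "eggs", "butter"],
]
root, frequent = build_fp_tree(transactions, min_count=3)
show(root)
print(count_nodes(root), "nodes")
```

Here the 14 occurrences of frequent items across the five transactions fit into 8 tree nodes, because four transactions share the `bread` branch. Ties in frequency are broken alphabetically, which is an arbitrary choice made for this sketch.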

When to Use FP-Growth over Apriori

```python
from mlxtend.frequent_patterns import apriori, fpgrowth, association_rules
from mlxtend.preprocessing import TransactionEncoder
import pandas as pd
import time

# Larger transaction dataset for performance comparison
transactions = [
    ['laptop', 'mouse', 'keyboard', 'monitor'],
    ['laptop', 'mouse', 'usb_drive'],
    ['phone', 'charger', 'case'],
    ['laptop', 'mouse', 'keyboard'],
    ['phone', 'charger', 'headphones'],
    ['laptop', 'monitor', 'keyboard', 'mouse'],
    ['tablet', 'keyboard', 'stylus'],
    ['phone', 'case', 'charger'],
    ['laptop', 'mouse', 'usb_drive', 'keyboard'],
    ['monitor', 'hdmi_cable', 'keyboard']
] * 100  # Repeat 100 times for 1000 transactions

# Prepare data
te = TransactionEncoder()
te_array = te.fit(transactions).transform(transactions)
df = pd.DataFrame(te_array, columns=te.columns_)

print(f"Dataset: {len(transactions)} transactions, {len(te.columns_)} unique items\n")

# Compare Apriori vs FP-Growth performance
min_support = 0.05  # 5% minimum support

# Benchmark Apriori (perf_counter is the clock intended for timing short runs)
print("Running Apriori...")
start = time.perf_counter()
frequent_apriori = apriori(df, min_support=min_support, use_colnames=True)
time_apriori = time.perf_counter() - start
print(f"  Time: {time_apriori:.3f}s")
print(f"  Found {len(frequent_apriori)} frequent itemsets\n")

# Benchmark FP-Growth
print("Running FP-Growth...")
start = time.perf_counter()
frequent_fpgrowth = fpgrowth(df, min_support=min_support, use_colnames=True)
time_fpgrowth = time.perf_counter() - start
print(f"  Time: {time_fpgrowth:.3f}s")
print(f"  Found {len(frequent_fpgrowth)} frequent itemsets\n")

# Performance comparison
speedup = time_apriori / time_fpgrowth
print("Performance Summary:")
print(f"  Apriori: {time_apriori:.3f}s")
print(f"  FP-Growth: {time_fpgrowth:.3f}s")
print(f"  Speedup: {speedup:.1f}x\n")

# Generate rules from FP-Growth results
rules = association_rules(frequent_fpgrowth, metric="confidence", min_threshold=0.6)
rules = rules[rules['lift'] > 1.2]  # Filter by lift

print("Association Rules (confidence >= 60%, lift > 1.2):")
top_rules = rules.nlargest(5, 'lift')[['antecedents', 'consequents',
                                       'support', 'confidence', 'lift']]
print(top_rules.to_string(index=False))

# Example timings (illustrative only; they vary by machine and library version):
#   Apriori: 0.145s
#   FP-Growth: 0.012s
#   Speedup: 12.1x
#
# Hand-checkable rule values for this data:
#   (laptop) -> (mouse):  support 0.50, confidence 1.00, lift 2.00
#   (phone) -> (charger): support 0.30, confidence 1.00, lift 3.33
#   The highest lift (10.00) belongs to rare pairs such as (tablet) -> (stylus)

print(f"\nConclusion: on this run FP-Growth ran at {speedup:.1f}x the speed of Apriori")
print("The advantage typically grows with larger datasets and lower support thresholds")
```

ECLAT: Vertical Data Format

ECLAT takes a completely different approach to storing your data. Apriori and FP-Growth both work with the traditional format - each row is a transaction containing a list of items. ECLAT flips this around. Instead of "transaction contains items", it stores "item appears in these transactions." Each item gets its own list of transaction IDs showing exactly where it appeared. This makes calculating support trivial - you just count how many transaction IDs two items share.

Here is how ECLAT works step by step:

- Build TID-sets - scan the database once and create a transaction ID list for each item. For example: bread appears in [T001, T002, T004, T005] and milk appears in [T001, T003, T004, T005].

- Calculate support by intersection - to find Support(bread and milk), simply find the overlap of both lists: T001 and T004 and T005 = 3 transactions. No need to scan the full database again - just compare two lists.

- Mine using depth-first search - unlike Apriori which uses breadth-first search (checking all pairs before any triples), ECLAT follows one item combination all the way down before backtracking. This uses less memory and is faster when transactions are long and items rarely overlap.

Step 3 uses depth-first search rather than the breadth-first search that Apriori uses - this is what gives ECLAT its memory efficiency advantage. Depth-first search follows one path all the way to the end before going back and trying another, like exploring a maze by always going forward until you hit a dead end. Breadth-first search explores all options at the current level before going any deeper, like checking every room on floor 1 of a building before moving to floor 2.
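The three steps can be sketched as a short recursive function. This is an illustrative sketch, not a tuned implementation: the function name `eclat` and its parameters are invented here, the data is the five-transaction grocery table, and the minimum count of 3 is an assumption for the example.

```python
def eclat(tidsets, min_count, prefix=(), out=None):
    """Depth-first search over itemsets; support comes from TID-set intersections."""
    if out is None:
        out = {}
    items = sorted(tidsets)
    for i, item in enumerate(items):
        tids = tidsets[item]
        if len(tids) < min_count:
            continue
        itemset = prefix + (item,)
        out[itemset] = len(tids)
        # Intersect with the TID-sets of the remaining items, then go deeper
        extensions = {other: tids & tidsets[other] for other in items[i + 1:]}
        extensions = {o: t for o, t in extensions.items() if len(t) >= min_count}
        eclat(extensions, min_count, itemset, out)
    return out

transactions = {
    "T001": {"bread", "milk", "eggs"},
    "T002": {"bread", "butter", "jam"},
    "T003": {"milk", "eggs", "cheese"},
    "T004": {"bread", "milk", "butter"},
    "T005": {"bread", "milk", "eggs", "butter"},
}

# Step 1: one scan to build the vertical format (item -> set of transaction IDs)
tidsets = {}
for tid, basket in transactions.items():
    for item in basket:
        tidsets.setdefault(item, set()).add(tid)

result = eclat(tidsets, min_count=3)
for itemset, count in sorted(result.items()):
    print(itemset, count)
```

After the vertical format is built, the original transactions are never read again; every support count is the size of a set intersection.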


CHARM: Reducing Output Size

Apriori, FP-Growth, and ECLAT all have the same problem: they generate an enormous number of rules, most of which are redundant. CHARM (Closed Association Rule Mining) solves this by only mining closed itemsets. A closed itemset is one where no larger group of items appears in exactly the same set of transactions - the most complete version of each pattern. If a smaller group of items always appears in exactly the same transactions as a larger group, CHARM keeps only the larger one and discards the redundant smaller one. The result is a much smaller, cleaner set of rules with zero information lost.

Here is a simple example of why this matters:

- Suppose {bread, milk} appears in exactly 150 transactions.

- {bread, milk, eggs} also appears in exactly those same 150 transactions.

- CHARM keeps only {bread, milk, eggs} and drops {bread, milk} - it is redundant because it adds nothing new.

- Instead of generating rules from both itemsets, you only generate rules from the closed one - cutting output by up to 90% on real datasets.

CHARM combines a depth-first search with a clever dual search across both itemsets and their transaction lists at the same time. This lets it identify and discard redundant itemsets as it goes, rather than generating everything first and filtering afterwards.
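To make the definition of a closed itemset concrete, the sketch below finds all frequent itemsets by brute force and then filters out the non-closed ones. This is deliberately not CHARM: it generates everything first and filters afterwards, which is exactly the work CHARM avoids. The function names and the small dataset are invented for this illustration.

```python
from itertools import combinations

def frequent_counts(transactions, min_count):
    """Brute force: count every item combination (only viable for tiny examples)."""
    items = sorted({item for t in transactions for item in t})
    counts = {}
    for k in range(1, len(items) + 1):
        for combo in combinations(items, k):
            itemset = frozenset(combo)
            count = sum(1 for t in transactions if itemset <= t)
            if count >= min_count:
                counts[itemset] = count
    return counts

def closed_itemsets(counts):
    """Keep itemsets that have no proper superset with the same support count."""
    return {
        itemset: count
        for itemset, count in counts.items()
        if not any(itemset < other and count == counts[other] for other in counts)
    }

transactions = [
    {"bread", "milk", "eggs"},
    {"bread", "milk", "eggs"},
    {"bread", "milk", "eggs", "butter"},
    {"bread", "butter"},
    {"milk"},
]
counts = frequent_counts(transactions, min_count=2)
closed = closed_itemsets(counts)

print(len(counts), "frequent itemsets,", len(closed), "closed")
for itemset, count in sorted(closed.items(), key=lambda kv: sorted(kv[0])):
    print(sorted(itemset), count)
```

In this dataset {bread, milk} and {bread, milk, eggs} occur in the same three transactions, so only the larger one is kept. Nine frequent itemsets reduce to four closed ones.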


Choosing the Right Algorithm

| Algorithm | Strategy | Database Scans | Best For | Common Uses |
|---|---|---|---|---|
| Apriori | Breadth-first, candidate generation | Multiple (one per level) | Medium datasets, teaching/baseline | Market basket analysis, cross-selling recommendations, inventory co-location, exploratory pattern discovery |
| FP-Growth | Divide-and-conquer, FP-tree | 2 scans (frequency + tree build) | Large dense datasets, low support | Clickstream analysis, frequent pattern mining, recommendation systems, large-scale retail analytics |
| ECLAT | Depth-first, vertical format | 1 scan (build TID-sets) | Sparse datasets, long transactions | Document analysis, bioinformatics, web log mining, genomic sequence analysis |
| CHARM | Hybrid closed itemsets | 1-2 scans | Reducing output size | Finding closed patterns, reducing redundant rules, high-dimensional data |

For most applications, start with a library implementation (mlxtend in Python, the arules package in R), which typically provides Apriori or FP-Growth. For datasets with millions of transactions, consider FP-Growth or ECLAT together with a distributed computing framework such as Apache Spark MLlib, which provides FP-Growth. For online learning or streaming data, use incremental algorithms like CARMA or SWIM.

Evaluating and Filtering Rules

A typical mining run generates thousands of rules. Most are redundant, spurious, or unactionable. Effective filtering and ranking is essential to extract business value.

Filtering Strategies

- Multi-metric thresholds: Require minimum support (1-5%), confidence (60-70%), and lift (greater than 1.5-2.0). This eliminates rare, unreliable, and uncorrelated rules.

- Redundancy removal: If A → B and A,C → B have similar metrics, keep only the simpler rule A → B unless C adds significant lift.

- Closed and maximal itemsets: Instead of all frequent itemsets, mine only closed itemsets (no superset with same support) or maximal itemsets (no frequent superset). This can shrink the output by an order of magnitude or more, depending on the data.

- Domain constraints: Apply business logic - exclude rules with items from same product category (milk → cheese is obvious), enforce directionality (low-margin item → high-margin item for upselling).

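- Statistical significance: Use a chi-square test or Fisher's exact test to verify the association is not due to random chance. Discard rules with p-value > 0.05, and tighten that cutoff when testing thousands of rules at once, since some will pass by chance alone.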
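As an illustration of the significance check, the sketch below runs a Pearson chi-square test of independence on the 2x2 table behind a rule, using only the standard library. The function name `chi_square_2x2` is invented here, and the counts are the bread → milk example used earlier (1000 transactions, 300 with bread, 400 with milk, 150 with both). It assumes every expected cell count is well above zero; for small counts Fisher's exact test is the safer choice.

```python
import math

def chi_square_2x2(n_total, n_a, n_b, n_ab):
    """Pearson chi-square test of independence for rule A -> B (1 degree of freedom)."""
    observed = [
        n_ab,                        # A and B
        n_a - n_ab,                  # A without B
        n_b - n_ab,                  # B without A
        n_total - n_a - n_b + n_ab,  # neither
    ]
    # Expected counts if A and B were independent
    expected = [
        n_a * n_b / n_total,
        n_a * (n_total - n_b) / n_total,
        (n_total - n_a) * n_b / n_total,
        (n_total - n_a) * (n_total - n_b) / n_total,
    ]
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    # Survival function of the chi-square distribution with 1 degree of freedom
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value

chi2, p_value = chi_square_2x2(n_total=1000, n_a=300, n_b=400, n_ab=150)
print(f"chi2 = {chi2:.2f}, p = {p_value:.2g}")
print("keep rule" if p_value <= 0.05 else "discard rule")
```

For these counts the statistic is about 17.86 and the p-value is far below 0.05, so a lift of 1.25 on 1000 transactions is unlikely to be a chance effect.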

Ranking and Prioritization

After filtering, rank rules by business impact. High-lift rules with moderate support are often more valuable than high-support rules with low lift. Consider rule novelty - surprising rules that contradict domain assumptions are worth investigating (either genuine insights or data quality issues). Actionability matters - a rule is only useful if you can change store layout, recommendations, or marketing based on it.

- Rank by lift x support: This balances correlation strength with frequency. High lift but 0.01% support is not actionable.

- Segment by product category: Generate separate rule sets for different departments (electronics, groceries, pharmacy) to make recommendations department-specific.

- Time-based analysis: Compare rules across seasons, promotions, or time periods. Holiday shopping patterns differ from regular patterns.

- Customer segmentation: Mine rules separately for customer segments (new vs returning, high-value vs low-value) for personalized recommendations.

```python
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder
import pandas as pd

# Generate rules from realistic transaction data
transactions = [
    ['milk', 'bread', 'butter'],
    ['beer', 'diapers', 'chips', 'wipes'],
    ['milk', 'eggs', 'bread'],
    ['laptop', 'mouse', 'keyboard'],
    ['beer', 'diapers', 'chips'],
    ['milk', 'bread', 'eggs', 'butter'],
    ['laptop', 'mouse', 'usb_drive'],
    ['beer', 'chips', 'diapers', 'wipes'],
    ['bread', 'butter', 'milk'],
    ['laptop', 'keyboard', 'mouse']
] * 20  # 200 transactions

te = TransactionEncoder()
df = pd.DataFrame(te.fit(transactions).transform(transactions), columns=te.columns_)

# Mine rules with relaxed thresholds (generates many rules)
frequent = apriori(df, min_support=0.1, use_colnames=True)
rules = association_rules(frequent, metric="confidence", min_threshold=0.3)

print(f"Initial rules generated: {len(rules)}\n")

# STEP 1: Multi-metric filtering
# .copy() gives an independent DataFrame so new columns can be added safely
rules_filtered = rules[
    (rules['support'] >= 0.15) &    # At least 15% of transactions
    (rules['confidence'] >= 0.6) &  # At least 60% confidence
    (rules['lift'] > 1.2)           # Positive correlation (lift > 1.2)
].copy()
print(f"After multi-metric filtering: {len(rules_filtered)} rules")
print(f"  Removed {len(rules) - len(rules_filtered)} low-quality rules\n")

# STEP 2: Remove redundant rules (a deliberately crude heuristic)
# Keep one rule per consequent: the highest lift, and on ties the shortest antecedent
rules_filtered['antecedent_len'] = rules_filtered['antecedents'].apply(len)
# Sort on a string key, because frozensets have no total ordering
rules_filtered['consequent_key'] = rules_filtered['consequents'].apply(
    lambda s: ', '.join(sorted(s)))
rules_sorted = rules_filtered.sort_values(['consequent_key', 'lift', 'antecedent_len'],
                                          ascending=[True, False, True])
rules_dedup = rules_sorted.drop_duplicates(subset=['consequent_key'], keep='first').copy()

print(f"After redundancy removal: {len(rules_dedup)} rules")
print(f"  Removed {len(rules_filtered) - len(rules_dedup)} redundant rules\n")

# STEP 3: Rank by combined score (lift x support)
rules_dedup['score'] = rules_dedup['lift'] * rules_dedup['support']
rules_final = rules_dedup.sort_values('score', ascending=False)

# Display top actionable rules
print("Top 5 Actionable Rules (ranked by lift x support):")
print("=" * 80)
for idx, row in rules_final.head(5).iterrows():
    ant = ', '.join(sorted(row['antecedents']))
    con = ', '.join(sorted(row['consequents']))
    print(f"\nRule: {ant} -> {con}")
    print(f"  Support: {row['support']:.1%} | Confidence: {row['confidence']:.1%} | "
          f"Lift: {row['lift']:.2f} | Score: {row['score']:.3f}")

    # Business interpretation
    if row['lift'] >= 2.0:
        print(f"  Strength: STRONG - {con} is {row['lift']:.1f}x more likely with {ant}")
    elif row['lift'] >= 1.5:
        print("  Strength: MODERATE - Consider bundling or cross-sell")
    else:
        print("  Strength: WEAK - Monitor but may not justify action")

# Hand-checkable values for this data:
#   laptop -> mouse:        support 30.0%, confidence 100.0%, lift 3.33, score 1.000
#   diapers, wipes -> beer: support 20.0%, confidence 100.0%, lift 3.33

print("\nFiltering Pipeline Summary:")
print(f"  Initial rules: {len(rules)}")
print(f"  After filtering: {len(rules_final)} ({len(rules_final)/len(rules)*100:.0f}%)")
print(f"  Reduction: {len(rules) - len(rules_final)} rules eliminated")
```

Interpreting and Acting on Rules

- Validate with domain experts: Show top rules to business stakeholders. Do they make sense? Are they actionable? Rules that surprise experts are most valuable (novel insights) or most suspect (spurious correlations).

- Test causality carefully: Association does not equal causation. ice_cream_sales and swimming_pool_visits correlate but neither causes the other (both caused by hot weather). Test interventions before assuming causality.

- A/B test recommendations: Before rolling out rule-based recommendations broadly, A/B test with small customer segment. Measure impact on sales, engagement, satisfaction.

- Monitor rule stability: Re-mine periodically (monthly/quarterly). If rules change dramatically, investigate why - seasonal shifts, product changes, marketing campaigns.

- Combine with other insights: Use association rules alongside customer segmentation, price elasticity analysis, inventory optimization. Rules provide one lens on customer behavior, not the complete picture.

Real-World Applications

Association rule mining extends far beyond retail market basket analysis. Any domain with transactional or co-occurrence data can benefit.

| Industry | Transaction Type | Example Rules | Business Impact |
|---|---|---|---|
| E-commerce | Product purchases | laptop → laptop bag, mouse | Cross-sell recommendations, bundle pricing, homepage layout optimization |
| Healthcare | Diagnosis codes, medications | diabetes, obesity → hypertension | Comorbidity detection, preventive care, medication interaction warnings |
| Telecom | Service subscriptions | unlimited_data → family_plan, insurance | Upsell strategies, churn prevention, package bundling |
| Banking | Transaction types | mortgage → home_insurance, property_tax_account | Product recommendations, fraud detection, customer lifetime value optimization |
| Streaming | Content views | stranger_things → black_mirror, dark | Recommendation engines, content acquisition, thumbnail personalization |
| Web Analytics | Page views | pricing_page → demo_request, trial_signup | Conversion funnel optimization, content strategy, navigation design |
| Manufacturing | Defect patterns | vibration_sensor_failure → bearing_wear, alignment_issue | Predictive maintenance, quality control, root cause analysis |
| Education | Course enrollments | calculus_1 → physics_1, linear_algebra | Course sequencing, degree planning, student success prediction |
| Genomics | Gene expressions | gene_A, gene_B → disease_susceptibility | Biomarker discovery, drug target identification, personalized medicine |
| Fraud Detection | Transaction patterns | foreign_IP, new_device, large_withdrawal → fraud | Real-time fraud scoring, alert generation, transaction blocking |

In healthcare, association rules help identify symptom combinations that predict diseases, medication interactions that cause adverse events, and comorbidity patterns for preventive care. In fraud detection, unusual transaction patterns (foreign IP address + new device + large withdrawal) trigger alerts. In content recommendation, viewing one show often predicts viewing another; Netflix has reported that roughly 80% of viewing comes from its recommendations, which draw on many techniques beyond association rules.

Practical Guidelines for Association Rule Mining

Choosing Support and Confidence Thresholds

Threshold selection is more art than science, varying by dataset size and business context. Start conservatively (support 5%, confidence 70%, lift 2.0) and relax thresholds if too few rules are found. For large datasets (millions of transactions), lower support to 0.5-1% to catch rare but valuable patterns. For small datasets (thousands of transactions), raise support to 10-20% so that each rule is backed by enough transactions to be reliable.

- Support: Too high misses niche patterns (premium product associations). Too low generates noise and spurious correlations. Typical range: 0.5-5% for large datasets, 5-20% for small datasets.

- Confidence: Too high restricts to obvious rules (batteries → electronics is trivial). Too low includes unreliable rules. Typical range: 60-80%.

- Lift: Always require lift > 1 (positive correlation). For actionable rules, require lift > 1.5-2.0. Rules with lift 3-5+ are strong candidates for intervention.

- Iteration: Run with multiple threshold combinations. Compare rule sets. Validate top rules from each run with domain experts before deployment.
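To see how the support threshold changes the number of rules, the following sketch sweeps `min_support` over a toy dataset. It is a simplified illustration restricted to single-item antecedents and consequents, not a full Apriori implementation; `pair_rules` and the five baskets are made up for the example.

```python
from itertools import permutations

transactions = [
    {"milk", "bread", "eggs"},
    {"beer", "chips"},
    {"milk", "bread", "butter", "eggs"},
    {"laptop", "mouse"},
    {"milk", "bread", "butter"},
]

def support(itemset, transactions):
    """Fraction of transactions that contain every item in itemset."""
    return sum(itemset <= t for t in transactions) / len(transactions)

def pair_rules(transactions, min_support, min_confidence, min_lift):
    """Return (a, b, support, confidence, lift) for every rule a -> b that passes."""
    items = sorted(set().union(*transactions))
    rules = []
    for a, b in permutations(items, 2):
        s_ab = support({a, b}, transactions)
        if s_ab < min_support:
            continue
        confidence = s_ab / support({a}, transactions)
        lift = confidence / support({b}, transactions)
        if confidence >= min_confidence and lift > min_lift:
            rules.append((a, b, s_ab, confidence, lift))
    return rules

# Relax support step by step and watch the rule count grow.
for min_sup in (0.6, 0.4, 0.2):
    found = pair_rules(transactions, min_sup, min_confidence=0.7, min_lift=1.0)
    print(f"min_support={min_sup:.1f}: {len(found)} rules")
```

On real data the same loop, run with a proper miner, gives a quick picture of where the rule count starts to explode.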

Data Preparation and Quality

- Clean transaction data: Remove returns, cancelled orders, test transactions. Each transaction should represent a completed purchase or event.

- Handle item granularity: Too specific (SKU level: Organic_Whole_Milk_1gal_Brand_A) generates too many rules. Too general (Dairy) loses insights. Find the right product category level.

- Minimum transaction length: Single-item transactions provide no co-occurrence information. Filter out transactions with fewer than 2-3 items if the dataset is large enough.

- Time windows: Define what constitutes a transaction - web session (30 min window), shopping trip (same day), patient visit, semester enrollment. This impacts which items can co-occur.

- Item encoding: Ensure consistent item names. Bread, bread_loaf, BREAD should map to same item. Use product IDs rather than names when possible.
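The time-window and item-encoding points can be sketched in plain Python. This example assumes click or purchase events arrive as (user, timestamp, item) tuples and treats a gap of more than 30 minutes as the start of a new transaction. The `ALIASES` table, the helper names, and the events are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical alias table: maps known spelling variants to one canonical name.
ALIASES = {"bread_loaf": "bread"}

def normalize_item(name):
    """Lowercase, trim, and replace spaces so variants collapse to one token."""
    token = name.strip().lower().replace(" ", "_")
    return ALIASES.get(token, token)

def sessionize(events, gap=timedelta(minutes=30)):
    """Group (user, timestamp, item) events into per-user sessions.

    A new session starts when a user is idle for longer than `gap`.
    """
    sessions = []
    last_seen = {}  # user -> timestamp of that user's previous event
    current = {}    # user -> set of items in that user's open session
    for user, ts, item in sorted(events, key=lambda e: (e[0], e[1])):
        if user in last_seen and ts - last_seen[user] > gap:
            sessions.append(current.pop(user))
        current.setdefault(user, set()).add(normalize_item(item))
        last_seen[user] = ts
    sessions.extend(current.values())
    return sessions

day = datetime(2024, 1, 15)
events = [
    ("u1", day.replace(hour=10, minute=0), "Bread"),
    ("u1", day.replace(hour=10, minute=10), "bread_loaf"),
    ("u1", day.replace(hour=10, minute=20), " Milk "),
    ("u1", day.replace(hour=11, minute=30), "Eggs"),
    ("u2", day.replace(hour=10, minute=5), "Whole Milk"),
]

sessions = sessionize(events)
baskets = [s for s in sessions if len(s) >= 2]  # drop single-item sessions
print(sessions)
print(baskets)
```

Changing `gap` changes which items can co-occur, so it is worth trying a few values before mining.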

```python
import pandas as pd
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder

# SCENARIO: Load real grocery transaction data from CSV
# CSV format: TransactionID, Item
# Example rows:
#   1001, Milk
#   1001, Bread
#   1001, Eggs
#   1002, Beer
#   1002, Chips

# Simulate loading from CSV (in practice: pd.read_csv('transactions.csv'))
data = {
    'TransactionID': [1, 1, 1, 2, 2, 3, 3, 3, 3, 4, 4, 5, 5, 5],
    'Item': ['milk', 'bread', 'eggs', 'beer', 'chips', 'milk', 'bread',
             'butter', 'eggs', 'laptop', 'mouse', 'milk', 'bread', 'butter']
}
df_raw = pd.DataFrame(data)

print("Raw transaction data:")
print(df_raw.head(10))
print(f"\nTotal rows: {len(df_raw)}, Unique transactions: {df_raw['TransactionID'].nunique()}\n")

# STEP 1: Clean and normalize item names
df_raw['Item'] = df_raw['Item'].str.lower().str.strip()  # Lowercase, trim surrounding whitespace

# STEP 2: Group by transaction to create transaction lists
transactions_list = df_raw.groupby('TransactionID')['Item'].apply(list).tolist()

print("Transactions grouped by ID:")
for i, transaction in enumerate(transactions_list[:5], 1):
    print(f" Transaction {i}: {transaction}")

# STEP 3: Filter short transactions (optional quality check)
min_items = 2
transactions_filtered = [t for t in transactions_list if len(t) >= min_items]

print(f"\nFiltered transactions (>= {min_items} items):")
print(f" Before: {len(transactions_list)} transactions")
print(f" After: {len(transactions_filtered)} transactions")
print(f" Removed: {len(transactions_list) - len(transactions_filtered)} single-item transactions\n")

# STEP 4: Convert to one-hot encoding for association rule mining
te = TransactionEncoder()
te_array = te.fit(transactions_filtered).transform(transactions_filtered)
df_encoded = pd.DataFrame(te_array, columns=te.columns_)

print("One-hot encoded data (ready for Apriori):")
print(df_encoded.head())
print(f"\nShape: {df_encoded.shape[0]} transactions, {df_encoded.shape[1]} unique items\n")

# STEP 5: Mine association rules
frequent_itemsets = apriori(df_encoded, min_support=0.4, use_colnames=True)
rules = association_rules(frequent_itemsets, metric="confidence", min_threshold=0.7)
rules = rules[rules['lift'] > 1.0]

print(f"Association rules found: {len(rules)}")
if len(rules) > 0:
    print("\nTop rules:")
    print(rules[['antecedents', 'consequents', 'support', 'confidence', 'lift']]
          .head(3).to_string(index=False))

# Output example (abridged; row order and set formatting may differ by library version):
# Raw transaction data:
#    TransactionID   Item
# 0              1   milk
# 1              1  bread
# 2              1   eggs
# ...
#
# Transactions grouped by ID:
#  Transaction 1: ['milk', 'bread', 'eggs']
#  Transaction 2: ['beer', 'chips']
#  Transaction 3: ['milk', 'bread', 'butter', 'eggs']
#
# One-hot encoded data (ready for Apriori):
#     beer  bread  butter  chips   eggs  laptop   milk  mouse
# 0  False   True   False  False   True   False   True  False
# 1   True  False   False   True  False   False  False  False
#
# Association rules found: 12
# On this toy data every surviving rule has confidence 1.00 and lift 1.67, for example:
#  antecedents consequents  support  confidence  lift
#      (bread)      (milk)     0.60        1.00  1.67
#       (eggs)      (milk)     0.40        1.00  1.67

print("\nData preparation complete! Ready for production deployment.")
```

Tools and Implementation

Several widely used libraries provide association rule mining implementations. Python's mlxtend library offers Apriori and FP-Growth with a simple API and is the easiest starting point. R's arules package is feature-rich, and its companion package arulesViz adds visualization tools. For large-scale data, Apache Spark MLlib provides distributed FP-Growth, which can run on the managed Spark services offered by the major cloud platforms (AWS, GCP, Azure).

Implementation Tip

```python
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns
import networkx as nx

# Generate association rules
transactions = [
    ['laptop', 'mouse', 'keyboard'],
    ['phone', 'charger', 'case'],
    ['laptop', 'mouse', 'usb_drive'],
    ['tablet', 'keyboard', 'stylus'],
    ['laptop', 'monitor', 'keyboard', 'mouse'],
    ['phone', 'case', 'screen_protector'],
    ['laptop', 'mouse'],
    ['phone', 'charger', 'headphones'],
] * 5  # 40 transactions

te = TransactionEncoder()
df = pd.DataFrame(te.fit(transactions).transform(transactions), columns=te.columns_)

frequent = apriori(df, min_support=0.2, use_colnames=True)
rules = association_rules(frequent, metric="confidence", min_threshold=0.5)
rules = rules[rules['lift'] > 1.1]

print(f"Generated {len(rules)} association rules\n")

# VISUALIZATION 1: Scatter plot - Support vs Confidence, colored and sized by Lift
plt.figure(figsize=(10, 6))
scatter = plt.scatter(rules['support'], rules['confidence'],
                      c=rules['lift'], s=rules['lift']*30,
                      alpha=0.6, cmap='viridis', edgecolors='black')
plt.colorbar(scatter, label='Lift')
plt.xlabel('Support', fontsize=12)
plt.ylabel('Confidence', fontsize=12)
plt.title('Association Rules: Support vs Confidence (sized by Lift)', fontsize=14)
plt.grid(True, alpha=0.3)
plt.tight_layout()
# plt.savefig('rules_scatter.png', dpi=300)
plt.show()

# VISUALIZATION 2: Network graph showing item relationships
G = nx.DiGraph()

# Add nodes and edges from top 10 rules
top_rules = rules.nlargest(10, 'lift')
for idx, row in top_rules.iterrows():
    antecedents = list(row['antecedents'])
    consequents = list(row['consequents'])

    # Add edges with lift as weight
    for ant in antecedents:
        for con in consequents:
            G.add_edge(ant, con, weight=row['lift'],
                       confidence=row['confidence'])

# Draw network
plt.figure(figsize=(12, 8))
pos = nx.spring_layout(G, k=2, iterations=50)

# Node sizes based on degree (how many connections)
node_sizes = [300 * G.degree(node) for node in G.nodes()]

# Edge widths based on lift
edges = G.edges()
weights = [G[u][v]['weight'] for u, v in edges]
edge_widths = [w * 2 for w in weights]

# Draw
nx.draw_networkx_nodes(G, pos, node_size=node_sizes,
                       node_color='lightblue', edgecolors='black', linewidths=2)
nx.draw_networkx_labels(G, pos, font_size=10, font_weight='bold')
nx.draw_networkx_edges(G, pos, width=edge_widths, alpha=0.6,
                       edge_color='gray', arrows=True, arrowsize=20,
                       arrowstyle='->', connectionstyle='arc3,rad=0.1')

plt.title('Product Association Network (edge width = lift strength)', fontsize=14)
plt.axis('off')
plt.tight_layout()
# plt.savefig('association_network.png', dpi=300, bbox_inches='tight')
plt.show()

# VISUALIZATION 3: Heatmap of top antecedent-consequent pairs
# Prepare data for heatmap; label each rule with ALL of its items so that
# multi-item rules do not collapse into duplicate or misleading labels
top_15 = rules.nlargest(15, 'lift').copy()
top_15['rule'] = top_15.apply(
    lambda x: f"{', '.join(sorted(x['antecedents']))} -> {', '.join(sorted(x['consequents']))}",
    axis=1
)

plt.figure(figsize=(10, 8))
metrics_df = top_15[['rule', 'support', 'confidence', 'lift']].set_index('rule')
sns.heatmap(metrics_df.T, annot=True, fmt='.2f', cmap='YlOrRd',
            cbar_kws={'label': 'Metric Value'}, linewidths=0.5)
plt.title('Top 15 Association Rules: Metric Comparison', fontsize=14)
plt.xlabel('Rules', fontsize=12)
plt.ylabel('Metrics', fontsize=12)
plt.xticks(rotation=45, ha='right')
plt.tight_layout()
# plt.savefig('rules_heatmap.png', dpi=300, bbox_inches='tight')
plt.show()

print("\nVisualization complete!")
print("Three charts created:")
print(" 1. Scatter plot: Support vs Confidence (colored by lift)")
print(" 2. Network graph: Item association relationships")
print(" 3. Heatmap: Top rules metric comparison")
print("\nUse these visualizations to communicate findings to stakeholders.")
```

```python
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder
import pandas as pd
import pickle
from datetime import datetime


class AssociationRuleRecommender:
    """Production-ready recommendation system using association rules."""

    def __init__(self, min_support=0.02, min_confidence=0.6, min_lift=1.5):
        self.min_support = min_support
        self.min_confidence = min_confidence
        self.min_lift = min_lift
        self.rules = None
        self.items_list = None

    def train(self, transactions):
        """Train the recommender on historical transaction data."""
        print(f"Training on {len(transactions)} transactions...")

        # Encode transactions
        te = TransactionEncoder()
        te_array = te.fit(transactions).transform(transactions)
        df = pd.DataFrame(te_array, columns=te.columns_)
        self.items_list = list(te.columns_)

        # Mine rules
        frequent = apriori(df, min_support=self.min_support, use_colnames=True)
        self.rules = association_rules(frequent, metric="confidence",
                                       min_threshold=self.min_confidence)
        self.rules = self.rules[self.rules['lift'] >= self.min_lift]

        # Sort by lift for quick lookup
        self.rules = self.rules.sort_values('lift', ascending=False)

        print(f" Found {len(self.rules)} high-quality rules")
        return self

    def recommend(self, cart_items, top_n=5):
        """Generate recommendations based on current cart contents."""
        # `not self.rules` raises ValueError on a DataFrame, so test explicitly
        if self.rules is None or self.rules.empty:
            return []

        cart_set = set(cart_items)
        recommendations = []

        # Find rules where all antecedents are in cart
        for _, rule in self.rules.iterrows():
            antecedents = set(rule['antecedents'])
            consequents = set(rule['consequents'])

            # Check if rule antecedents match cart items
            if antecedents.issubset(cart_set):
                # Recommend consequents not already in cart
                new_items = consequents - cart_set
                for item in new_items:
                    recommendations.append({
                        'item': item,
                        'confidence': rule['confidence'],
                        'lift': rule['lift'],
                        'reason': f"Often bought with {', '.join(sorted(antecedents))}"
                    })

        # Deduplicate: keep the highest-scoring (lift * confidence) entry per item
        best = {}
        for rec in recommendations:
            rec['score'] = rec['lift'] * rec['confidence']
            if rec['item'] not in best or rec['score'] > best[rec['item']]['score']:
                best[rec['item']] = rec

        # Return top N recommendations
        return sorted(best.values(), key=lambda x: x['score'], reverse=True)[:top_n]

    def save(self, filepath):
        """Save trained model to disk."""
        with open(filepath, 'wb') as f:
            pickle.dump({
                'rules': self.rules,
                'items_list': self.items_list,
                'params': {
                    'min_support': self.min_support,
                    'min_confidence': self.min_confidence,
                    'min_lift': self.min_lift
                }
            }, f)
        print(f"Model saved to {filepath}")

    @classmethod
    def load(cls, filepath):
        """Load trained model from disk (only unpickle files you trust)."""
        with open(filepath, 'rb') as f:
            data = pickle.load(f)
        model = cls(**data['params'])
        model.rules = data['rules']
        model.items_list = data['items_list']
        print(f"Model loaded from {filepath}")
        return model


# EXAMPLE USAGE
# Training phase (offline, nightly batch job)
historical_transactions = [
    ['laptop', 'mouse', 'keyboard'],
    ['phone', 'charger', 'case'],
    ['laptop', 'mouse', 'monitor'],
    ['tablet', 'keyboard', 'stylus'],
    ['laptop', 'mouse', 'keyboard', 'usb_drive'],
] * 100  # 500 transactions

recommender = AssociationRuleRecommender(
    min_support=0.05,
    min_confidence=0.6,
    min_lift=1.2
)
recommender.train(historical_transactions)
recommender.save('recommender_model.pkl')

# Production phase (real-time API)
# Load model once at server startup
loaded_recommender = AssociationRuleRecommender.load('recommender_model.pkl')

# User adds items to cart - generate recommendations
customer_cart = ['laptop', 'monitor']
recommendations = loaded_recommender.recommend(customer_cart, top_n=3)

print(f"\nCustomer cart: {customer_cart}")
print(f"Recommendations:")
for i, rec in enumerate(recommendations, 1):
    print(f" {i}. {rec['item']}")
    print(f" Confidence: {rec['confidence']:.0%} | Lift: {rec['lift']:.2f}")
    print(f" Reason: {rec['reason']}")

# Output on this toy data (Reason lines omitted: for mouse, several tied rules
# qualify, so the reason shown depends on which one is encountered first):
# Customer cart: ['laptop', 'monitor']
# Recommendations:
#  1. mouse
#  Confidence: 100% | Lift: 1.67
#  2. keyboard
#  Confidence: 67% | Lift: 1.67

print(f"\nProduction-ready! Deploy as REST API or embed in e-commerce platform.")
```

Frequently Asked Questions

01 How do association rules differ from collaborative filtering?

- Association rules: Find general patterns across all transactions without considering individual preferences. Example: diapers -> beer applies to all diaper buyers.

- Collaborative filtering: Makes personalized recommendations based on user similarity and individual history. Example: recommending movies based on viewers with similar taste.

Use association rules for general product placement and bundling. Use collaborative filtering for personalized recommendations. They are complementary - Amazon uses both.

02 Can association rules handle non-binary data?

Standard association rule mining assumes binary presence/absence data. Options for other data types:

- Quantities: Discretize into bins such as milk_1-2_units and milk_3+_units.

- Numerical features: Use quantitative association rule mining, which builds rules over value ranges, or discretize first.

- Sequences: Use sequential pattern mining, which considers order (A, then B, then C).

- Timestamps: Use temporal association rules.

Most libraries support only binary data, so discretization is the practical approach for other data types.
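As a minimal sketch of the discretization approach, each (item, quantity) pair can be turned into a single binary item label before encoding. The bin boundaries follow the milk example above; the helper name `quantity_item` and the basket are illustrative.

```python
def quantity_item(item, quantity):
    """Turn an (item, quantity) pair into one binary item label."""
    bucket = "1-2_units" if quantity <= 2 else "3+_units"
    return f"{item}_{bucket}"

# Hypothetical basket with purchase quantities.
basket = {"milk": 1, "bread": 4}
encoded = sorted(quantity_item(item, qty) for item, qty in basket.items())
print(encoded)  # ['bread_3+_units', 'milk_1-2_units']
```

The encoded labels can then go through the same one-hot encoding step as ordinary item names.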

03 Why are support and confidence not enough to evaluate rules?

Support and confidence can be misleading without lift. Example: milk appears in 80% of transactions. The rule bread -> milk with 80% confidence sounds strong, but its lift is only 1.0 (80% / 80% = 1), meaning no correlation - milk is just popular. Confidence ignores the base rate of the consequent, so always check that lift > 1 to confirm a positive correlation. Also consider conviction (sensitive to rule direction, unlike lift), leverage (the gap between observed co-occurrence and what independence would predict), and statistical tests such as chi-square for robust evaluation.
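The milk example can be worked through numerically using the standard definitions of lift, leverage, and conviction. The 50% support for bread is an assumption added for illustration; the 80% figures come from the example above.

```python
def rule_metrics(support_a, support_b, support_ab):
    """Confidence, lift, leverage, and conviction for the rule A -> B."""
    confidence = support_ab / support_a
    lift = confidence / support_b
    leverage = support_ab - support_a * support_b
    # Conviction is undefined (infinite) when the rule never fails.
    conviction = float("inf") if confidence == 1 else (1 - support_b) / (1 - confidence)
    return confidence, lift, leverage, conviction

# bread -> milk: milk is in 80% of baskets; bread is ASSUMED to be in 50%;
# 40% contain both, which gives the 80% confidence from the example.
confidence, lift, leverage, conviction = rule_metrics(0.5, 0.8, 0.4)
print(f"confidence={confidence:.2f} lift={lift:.2f} "
      f"leverage={leverage:.2f} conviction={conviction:.2f}")
```

All three correlation measures sit at their "independent" values here (lift 1, leverage 0, conviction 1) despite the high confidence.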

04 How many transactions are needed for association rule mining?

The minimum depends on the number of unique items and the desired support threshold. As rough guidance, 100 items at 5% support call for at least 1,000-2,000 transactions, and 1,000 items at 1% support call for 50,000-100,000 transactions. In line with those figures, a rule of thumb is to have roughly 10-100x as many transactions as unique items. Rare pattern discovery needs even more data. Always verify rule significance with statistical tests when working with smaller datasets.

05 Can Apriori handle millions of transactions and thousands of items?

Apriori struggles at scale. With millions of transactions and low support thresholds, candidate generation explodes. As a rough practical limit, expect a single machine to handle on the order of 100,000 transactions with 1,000 items. For larger datasets, use FP-Growth (often cited as 10-100x faster because it avoids candidate generation), distributed algorithms (Spark FP-Growth for billions of transactions), or sampling approaches. Alternatively, increase the minimum support threshold to reduce candidates, though this may miss rare but valuable patterns.
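A quick calculation shows why candidate generation explodes. The sketch below counts how many distinct itemsets of each size exist for a given catalog. This is the upper bound on the search space, not the number of candidates Apriori actually generates after support-based pruning, but the lower the support threshold, the less gets pruned. The function name is illustrative.

```python
from math import comb

def candidate_space(n_items, max_size):
    """Number of possible itemsets of each size from 1 to max_size."""
    return {k: comb(n_items, k) for k in range(1, max_size + 1)}

for size, count in candidate_space(1000, 4).items():
    print(f"{size}-itemsets: {count:,}")
```

With 1,000 items there are already 499,500 possible pairs and 166,167,000 possible triples.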

06 How to handle seasonal or temporal patterns?

Association rules are static snapshots of transaction patterns. For temporal analysis:

- Segment by time: Mine separate rule sets for different periods (holiday vs regular, Q1 vs Q4).

- Compare rule evolution: Track how rule metrics change over time.

- Temporal association rules: Use extended algorithms that find patterns like A and B within 24 hours.

- Event-based mining: Condition on external events (promotion periods, weather, sporting events) and mine rules per condition.
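Segmenting by time can be sketched as follows: group baskets by period, then compute the same rule's lift in each group. The periods, baskets, and the wrapping_paper -> tape rule are invented for illustration.

```python
from collections import defaultdict

def lift(a, b, baskets):
    """Lift of the single-item rule a -> b within one group of baskets."""
    n = len(baskets)
    support_a = sum(a in t for t in baskets) / n
    support_b = sum(b in t for t in baskets) / n
    support_ab = sum(a in t and b in t for t in baskets) / n
    if support_a == 0 or support_b == 0:
        return 0.0
    return support_ab / (support_a * support_b)

# Hypothetical baskets tagged with the quarter in which they occurred.
dated_baskets = [
    ("Q1", {"wrapping_paper", "bread"}),
    ("Q1", {"tape", "milk"}),
    ("Q1", {"bread", "milk"}),
    ("Q1", {"wrapping_paper", "tape"}),
    ("Q4", {"wrapping_paper", "tape"}),
    ("Q4", {"wrapping_paper", "tape", "cards"}),
    ("Q4", {"bread", "milk"}),
    ("Q4", {"tape", "wrapping_paper", "ribbon"}),
]

by_period = defaultdict(list)
for period, basket in dated_baskets:
    by_period[period].append(basket)

lift_by_period = {
    period: lift("wrapping_paper", "tape", baskets)
    for period, baskets in by_period.items()
}
for period, value in sorted(lift_by_period.items()):
    print(f"{period}: lift = {value:.2f}")
```

In this toy data the rule is at independence in Q1 and positively associated in Q4, the kind of shift that pooled mining would blur.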

07 What causes spurious or meaningless rules?

Common causes:

- Confounding variables: Ice cream and sunscreen correlate because of summer weather, not because of a direct relationship.

- Data quality issues: Duplicate transactions, test data, or returns were not removed.

- Product hierarchy artifacts: All items in the Electronics category co-occur because they share the parent category.

- Threshold too low: 0.1% support generates thousands of random rare patterns.

Always validate unexpected rules with domain experts and check for data quality issues before acting on them.
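One simple screen for chance patterns is a chi-square test of independence on the 2x2 table behind a rule A -> B. The sketch below uses the standard shortcut formula for a 2x2 table and the 3.841 critical value (1 degree of freedom, 5% significance level). The counts are hypothetical, and the test does nothing about confounding or data quality problems.

```python
CRITICAL_95 = 3.841  # chi-square critical value, 1 degree of freedom, alpha = 0.05

def chi_square_2x2(both, a_only, b_only, neither):
    """Chi-square statistic for independence of A and B from transaction counts."""
    n = both + a_only + b_only + neither
    row_a, row_not_a = both + a_only, b_only + neither
    col_b, col_not_b = both + b_only, a_only + neither
    denominator = row_a * row_not_a * col_b * col_not_b
    if denominator == 0:
        return 0.0  # an item that is always or never present cannot be tested
    return n * (both * neither - a_only * b_only) ** 2 / denominator

# Hypothetical counts out of 1,000 transactions each.
strong = chi_square_2x2(both=60, a_only=40, b_only=140, neither=760)
chance = chi_square_2x2(both=2, a_only=8, b_only=198, neither=792)

for name, stat in (("strong", strong), ("chance", chance)):
    verdict = "significant" if stat > CRITICAL_95 else "not significant"
    print(f"{name}: chi2 = {stat:.2f} ({verdict})")
```

When mining thousands of rules, some will pass a 5% test by chance alone, so treat this as a filter and not as proof.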

08 Should I use association rules or predictive models?

They serve different purposes.

- Association rules: Unsupervised pattern discovery with no target variable. They find all interesting co-occurrences in an explainable if-then format. Best for exploratory analysis, recommendation systems, and generating hypotheses.

- Predictive models: Supervised learning with a target variable, optimized for prediction accuracy. They are often less interpretable (especially deep learning). Best for forecasting, classification, and regression tasks.

Use association rules when you want to understand customer behavior broadly. Use predictive models when you have a specific outcome to predict.

Association rule mining is about finding actionable knowledge in large datasets. The goal is not to find all patterns, but to find patterns that surprise domain experts and lead to business decisions.